open.substack.com/pub/iandunt/...

This isn't journalism:

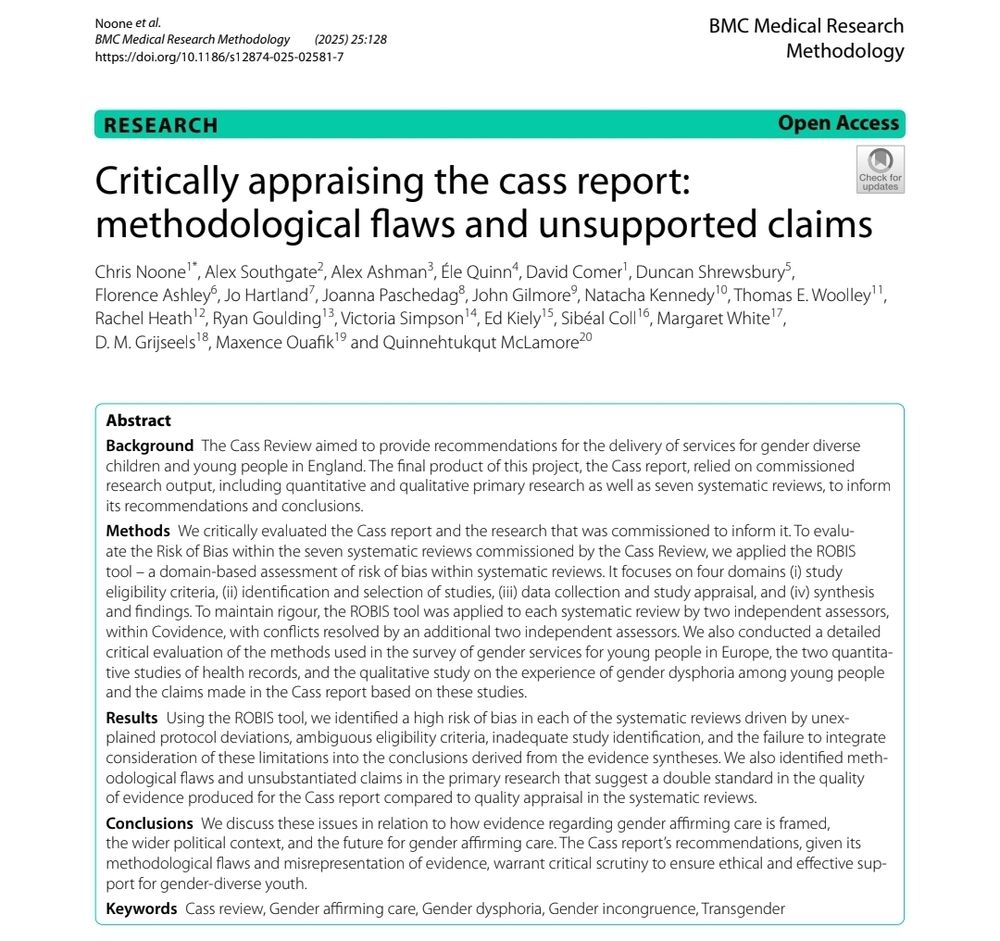

link.springer.com/article/10.1...

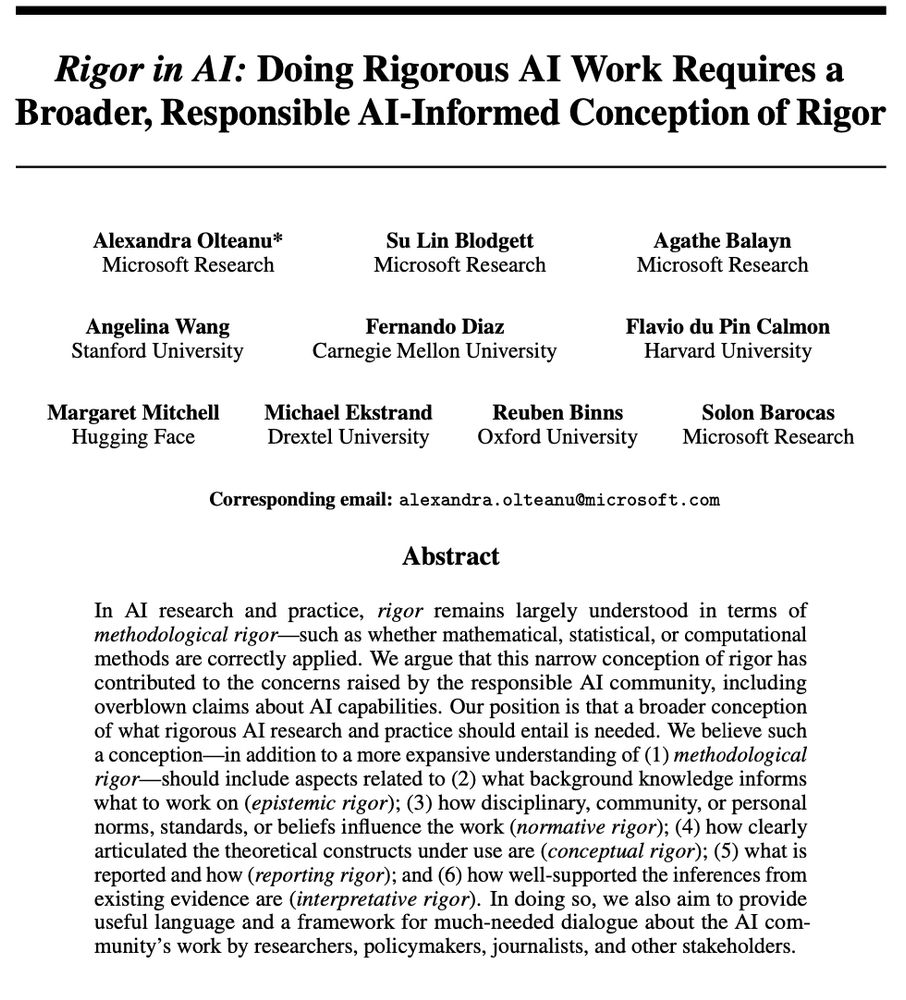

I'm late for #ICLR2025 #NAACL2025, but in time for #AISTATS2025 #ICML2025! 1/3

kamathematics.wordpress.com/2025/05/01/t...

Giuseppe Serra, Ben Werner, Florian Buettner

Action editor: Emmanuel Bengio

https://openreview.net/forum?id=dczXe0S1oL

#forgetting #memory #forget

of surveyed clinicians, 53% use LLMs daily & 91% encountered hallucinations

In an exclusive piece for Zeteo, UN Special Rapporteur Francesca Albanese writes about her 5-day trip that exposed Germany's harsh deviation from democratic values:
