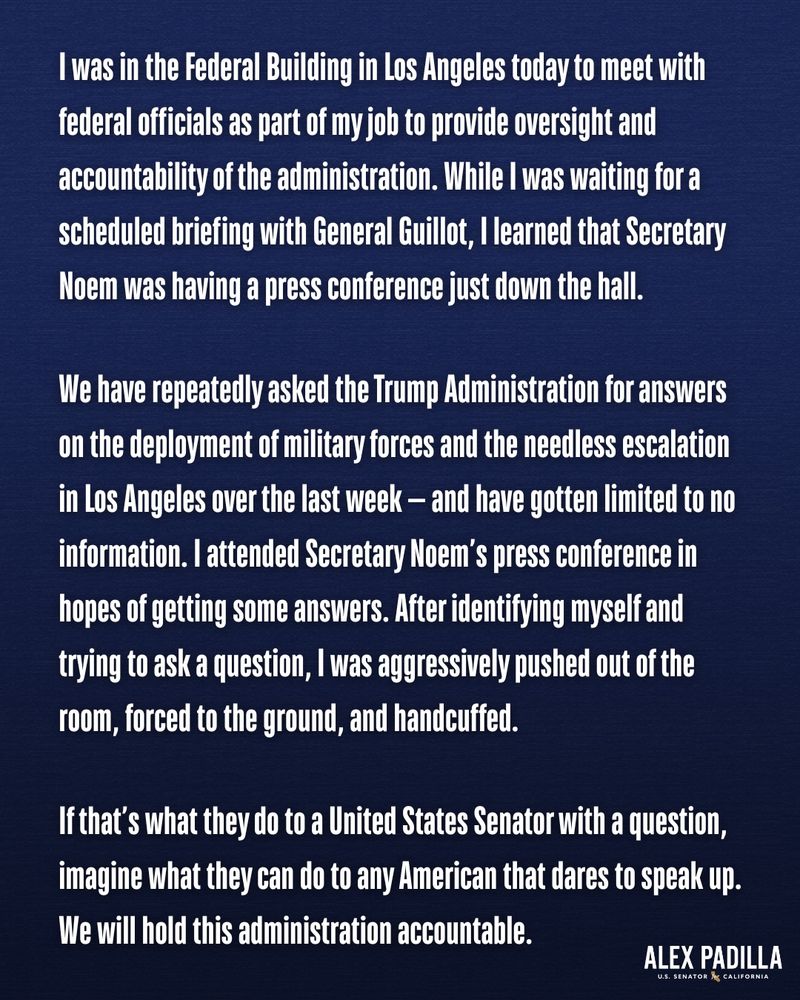

This political prosecution is an attack on all of our First Amendment rights. I’m not backing down, and we’re going to win.

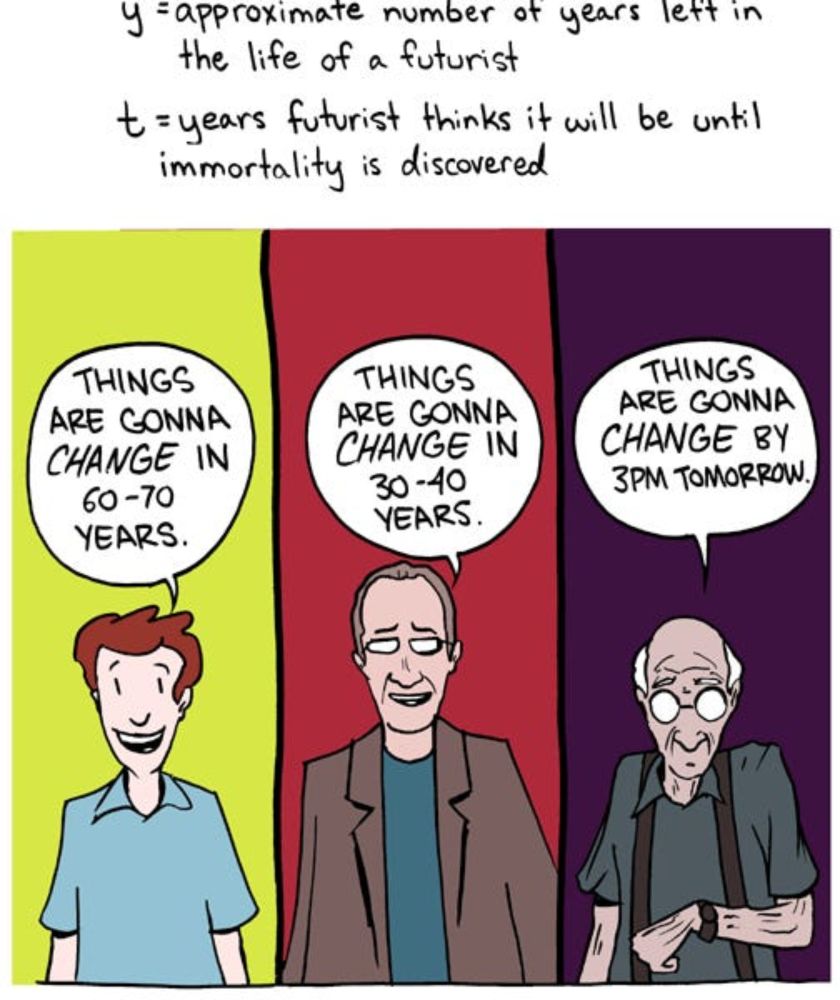

I argue that this is not a coincidence at all, and its predictions are all wrong.

www.verysane.ai/p/agi-probab...

This is not a simple budget increase. It is an explosion - making ICE bigger than the FBI, US Bureau of Prisons, DEA, & others combined.

It is setting up to make what’s happening now look like child’s play. And people are disappearing.

transformer-circuits.pub

They discover numbers are represented in these LLMs as a generalized helix, which is strongly causally implicated for the tasks of addition and subtraction, and is also causally relevant for integer division, multiplication, and modular arithmetic.

It summarises the state of the science on AI capabilities and risks, and how to mitigate those risks. 🧵

Full Report: assets.publishing.service.gov.uk/media/679a0c...

1/21

the loudest ai skeptics are not at all interested in why these models work so well despite their simplicity

Trying to build NLP interfaces is taking my team an extremely long time and is extremely brittle
