Head of Signal, Meredith Whittaker, on so-called "agentic AI" and the difference between how it's described in the marketing and what access and control it would actually require to work as advertised.

We are thrilled to announce the Common Pile v0.1, an 8TB dataset of openly licensed and public domain text. We train 7B models for 1T and 2T tokens and match the performance of similar models like LLaMA 1 & 2.

2⃣ If we're in denial (or simply not paying attention), we could lose the chance to shape this technology when it matters most.

2/3

There are many issues with AI & many things that need critique, but pretending it is going away is not helpful.

Law students using o1-preview saw the quality of their work increase on most tasks (by up to 28%) & saved 12-28% of their time.

There were a few hallucinations, but a RAG-based AI with access to legal material reduced them to human levels.

Let's take a look at the claim "data doesn't lie", shall we? 🧵

www.nbcnews.com/politics/dog...

digital.canada.ca/2025/02/18/w...

time.com/7259395/ai-c...

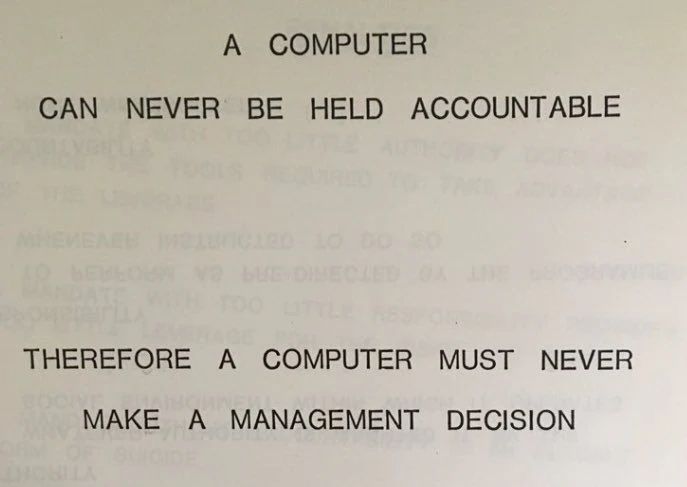

We explain why Fully Autonomous Agents Should Not be Developed, breaking "AI Agent" down into its components & examining each through the lens of ethical values.

With @evijit.io, @giadapistilli.com and @sashamtl.bsky.social

huggingface.co/papers/2502....

It's all about culture and process.

medium.com/@supergovern...

2/2

time.com/7213096/uk-p...

www.nbcnews.com/business/bus...

AI capabilities have improved remarkably quickly, fuelled by the explosive scale-up of resources being used to train the leading models. But the scaling laws that inspired this rush actually show very poor returns to scale. What’s going on?

1/

www.tobyord.com/writing/the-...

This chart is on a log scale. This year there have been just 7 cases of guinea worm.

ourworldindata.org/grapher/numb...

TL;DR: any kind of estimate is a proxy, and instead of wasting our time and energy, we should demand 👏🏼 accountability 👏🏼

-o1 system card

The coming psychopolitical regime "directs the environments where our ideas are born, developed, and expressed. Its power lies in its intimacy—it infiltrates our subjectivity.

We will be playing an imitation game that ultimately plays us."

www.anthropic.com/research/ali...