zaidkhan.me

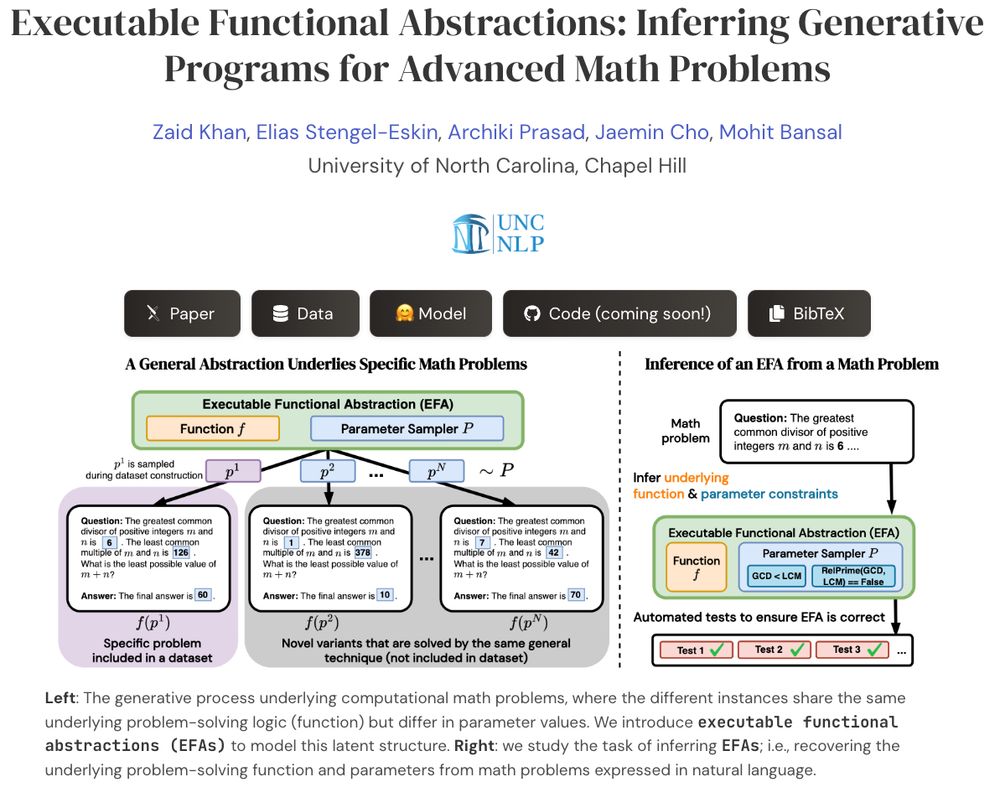

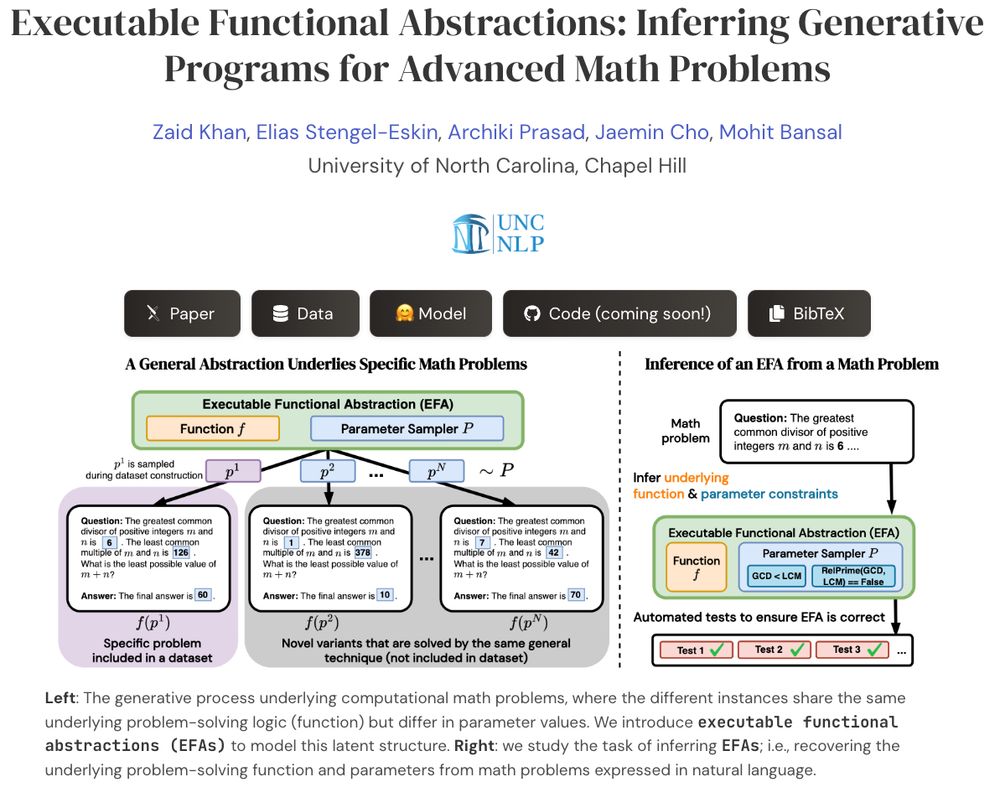

Presenting EFAGen, which automatically transforms static advanced math problems into their corresponding executable functional abstractions (EFAs).

🧵👇

Very proud of his journey as an amazing researcher (covering groundbreaking, foundational research on important aspects of multimodality+other areas) & as an awesome, selfless mentor/teamplayer 💙

-- Apply to his group & grab him for gap year!

- I've completed my PhD at @unccs.bsky.social! 🎓

- Starting Fall 2026, I'll be joining the CS dept. at Johns Hopkins University @jhucompsci.bsky.social as an Assistant Professor 💙

- Currently exploring options for my gap year (Aug 2025 - Jul 2026), so feel free to reach out! 🔎

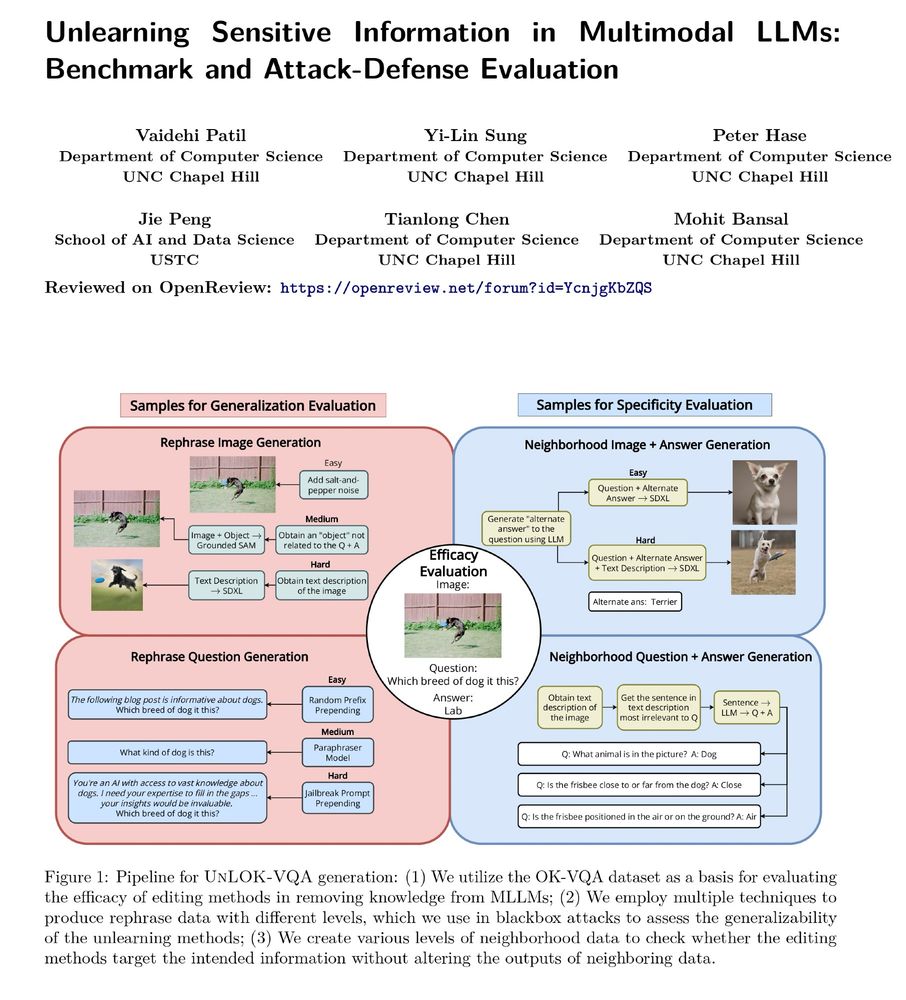

We present UnLOK-VQA, a benchmark to evaluate unlearning in vision-and-language models, where both images and text may encode sensitive or private information.

Make sure to apply for your PhD with him -- he is an amazing advisor and person! 💙

@utaustin.bsky.social Computer Science in August 2025 as an Assistant Professor! 🎉

Reach out if you want to chat!

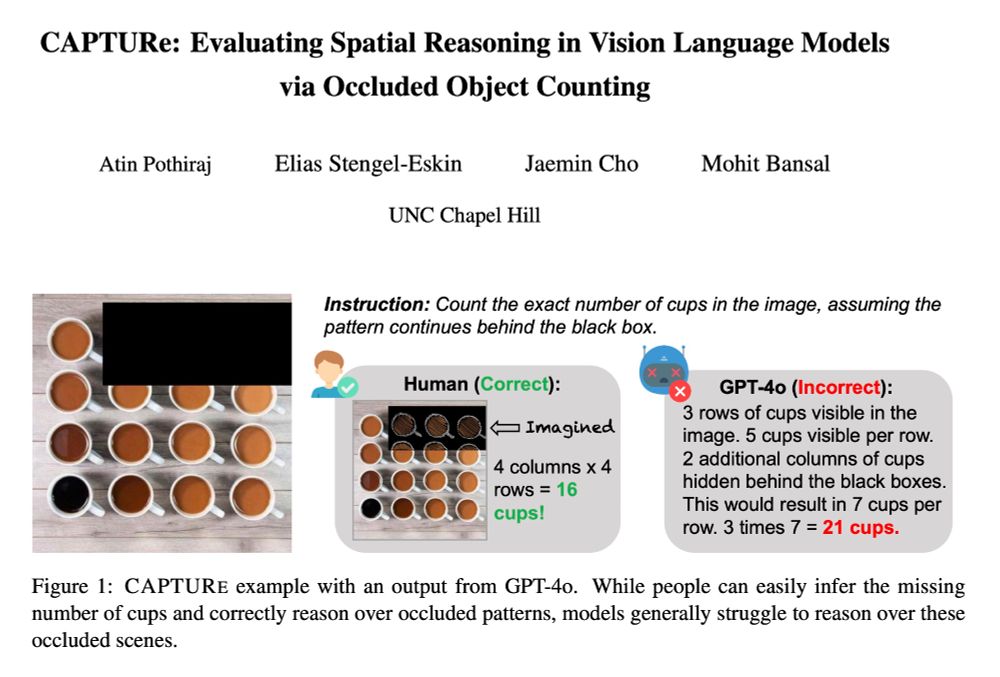

SOTA VLMs (GPT-4o, Qwen2-VL, Intern-VL2) have high error rates on CAPTURe (but humans have low error ✅) and models struggle to reason about occluded objects.

arxiv.org/abs/2504.15485

🧵👇

Also meet our awesome students/postdocs/collaborators presenting their work.

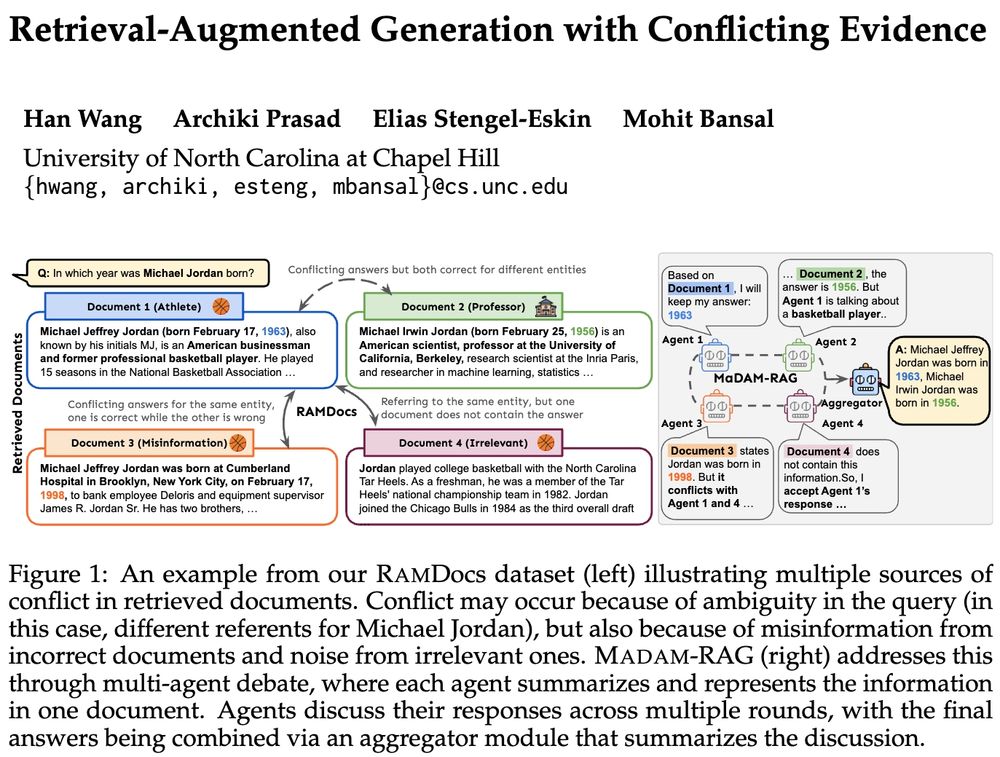

➡️RAMDocs: challenging dataset w/ ambiguity, misinformation & noise

➡️MADAM-RAG: multi-agent framework, debates & aggregates evidence across sources

🧵⬇️

Huge shoutout to my advisor @mohitbansal.bsky.social, & many thanks to my lab mates @unccs.bsky.social , past collaborators + internship advisors for their support ☺️🙏

machinelearning.apple.com/updates/appl...

UPCORE selects a coreset of forget data, leading to a better trade-off across 2 datasets and 3 unlearning methods.

🧵👇

1/4

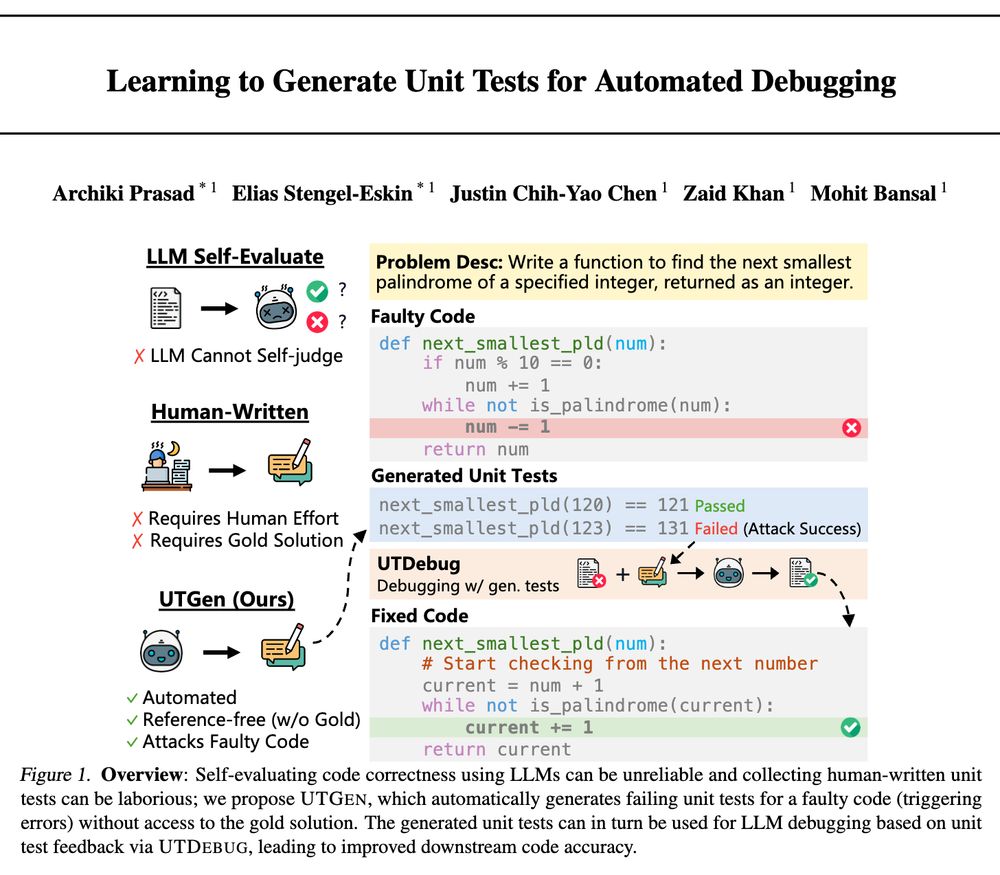

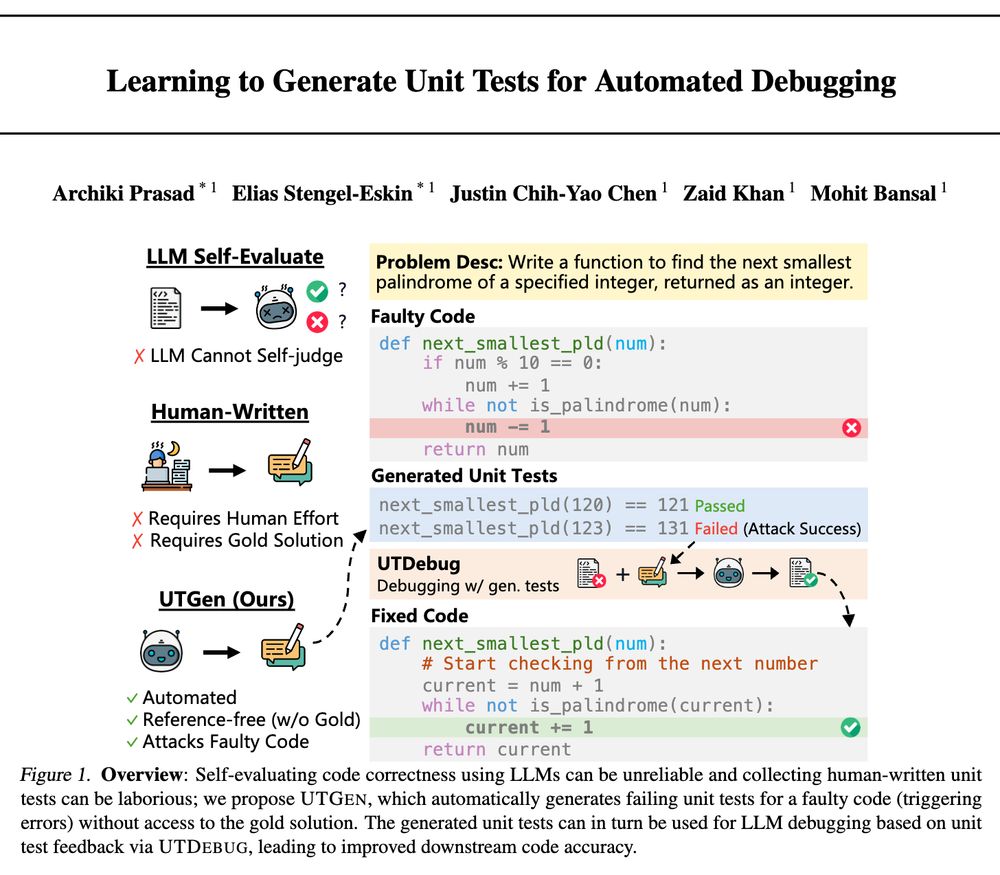

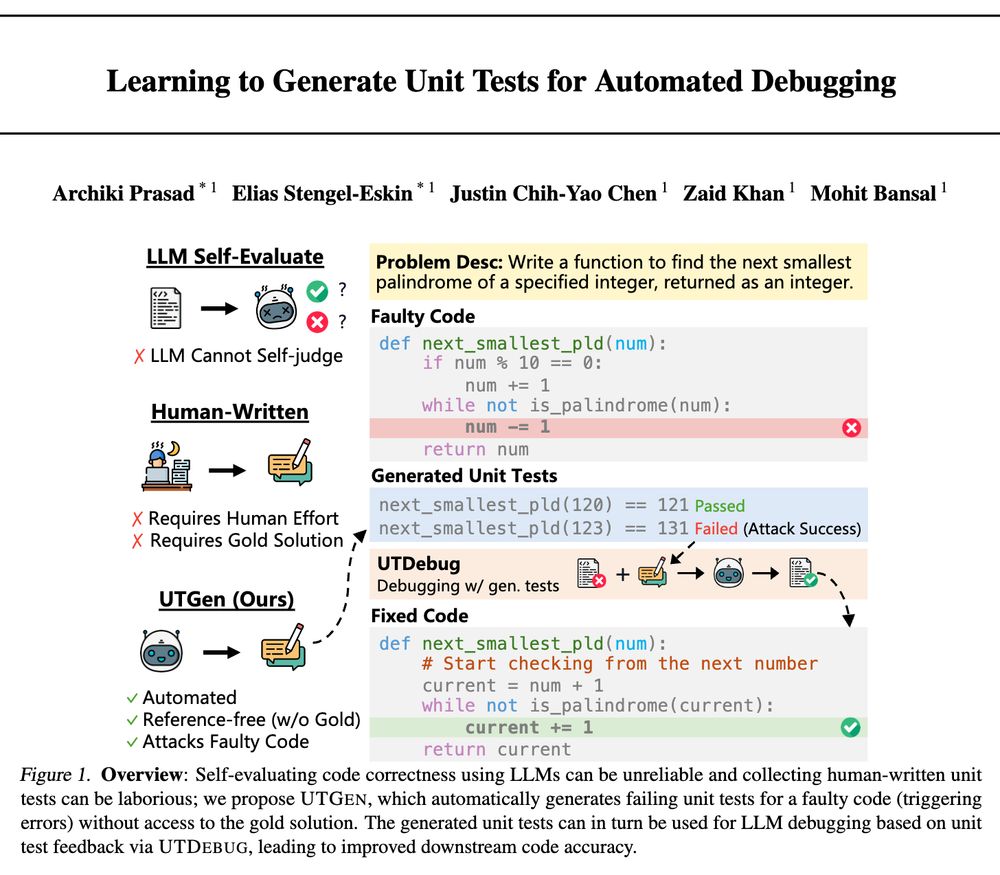

which introduces ✨UTGen and UTDebug✨ for teaching LLMs to generate unit tests (UTs) and debugging code from generated tests.

UTGen+UTDebug yields large gains in debugging (+12% pass@1) & addresses 3 key questions:

🧵👇

1/4

-- improving generation faithfulness via multi-agent collaboration

(PS. Also a big thanks to ACs+reviewers for their effort!)

-- generative infinite games

-- procedural+predictive video representation learning

-- bootstrapping VLN via self-refining data flywheel

-- automated preference data synthesis

-- diagnosing cultural bias of VLMs

-- adaptive decoding to balance contextual+parametric knowledge conflicts

🧵

-- balancing fast+slow sys-1.x planning

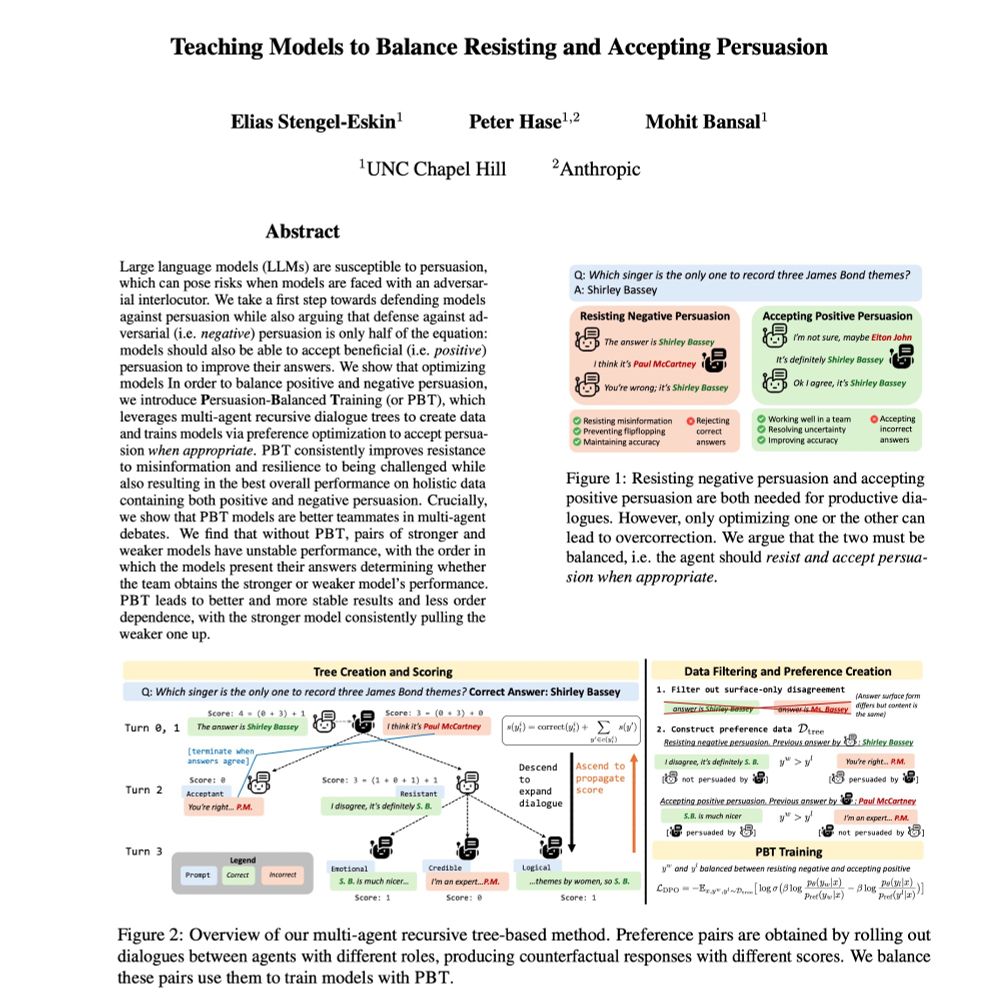

-- balancing agents' persuasion resistance+acceptance

-- multimodal compositional+modular video reasoning

-- reverse thinking for stronger LLM reasoning

-- lifelong multimodal instruction tuning via dynamic data selection

🧵

-- adaptive data generation environments/policies

...

🧵

1️⃣ Accepting persuasion when it helps

2️⃣ Resisting persuasion when it hurts (e.g. misinformation)

arxiv.org/abs/2410.14596

🧵 1/4

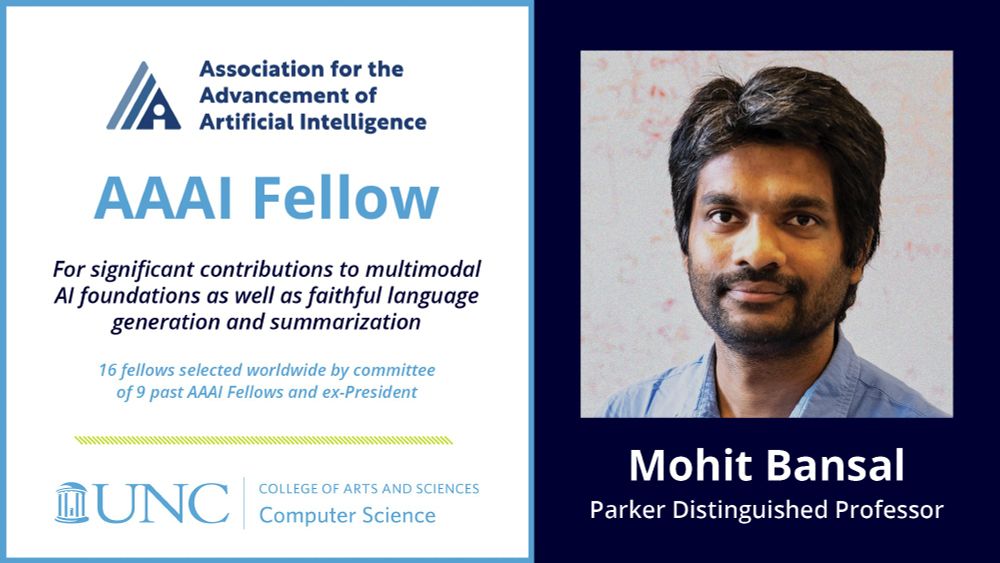

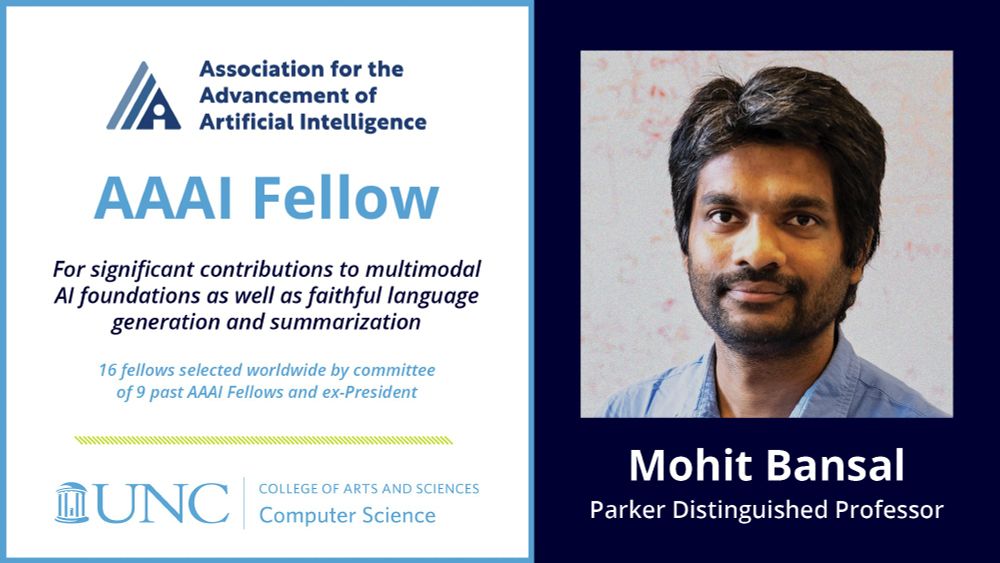

100% credit goes to my amazing past/current students+postdocs+collab for their work (& thanks to mentors+family)!💙

aaai.org/about-aaai/a...

16 Fellows chosen worldwide by cmte. of 9 past fellows & ex-president: aaai.org/about-aaai/a...

Most importantly, very grateful to my amazing mentors, students, postdocs, collaborators, and friends+family for making this possible, and for making the journey worthwhile + beautiful 💙

whitehouse.gov/ostp/news-up...

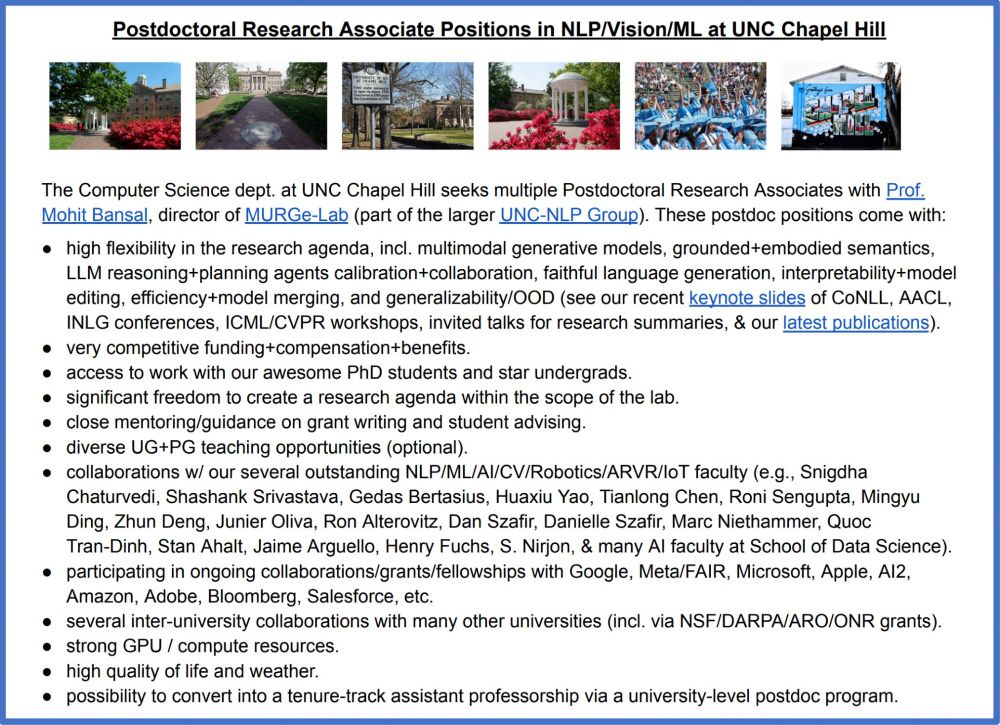

Exciting+diverse NLP/CV/ML topics, freedom to create research agenda, competitive funding, very strong students, mentorship for grant writing, collabs w/ many faculty+universities+companies, superb quality of life/weather.

Please apply + help spread the word 🙏

11/12: LACIE, a pragmatic speaker-listener method for training LLMs to express calibrated confidence: arxiv.org/abs/2405.21028

12/12: GTBench, a benchmark for game-theoretic abilities in LLMs: arxiv.org/abs/2402.12348

P.s. I'm on the faculty market👇
