Also delighted the ACL community continues to recognize unabashedly linguistic topics like filler-gaps... and the huge potential for LMs to inform such topics!

Asst or Assoc.

We have a thriving group sites.utexas.edu/compling/ and a long proud history in the space. (For instance, fun fact, Jeff Elman was a UT Austin Linguistics Ph.D.)

faculty.utexas.edu/career/170793

🤘

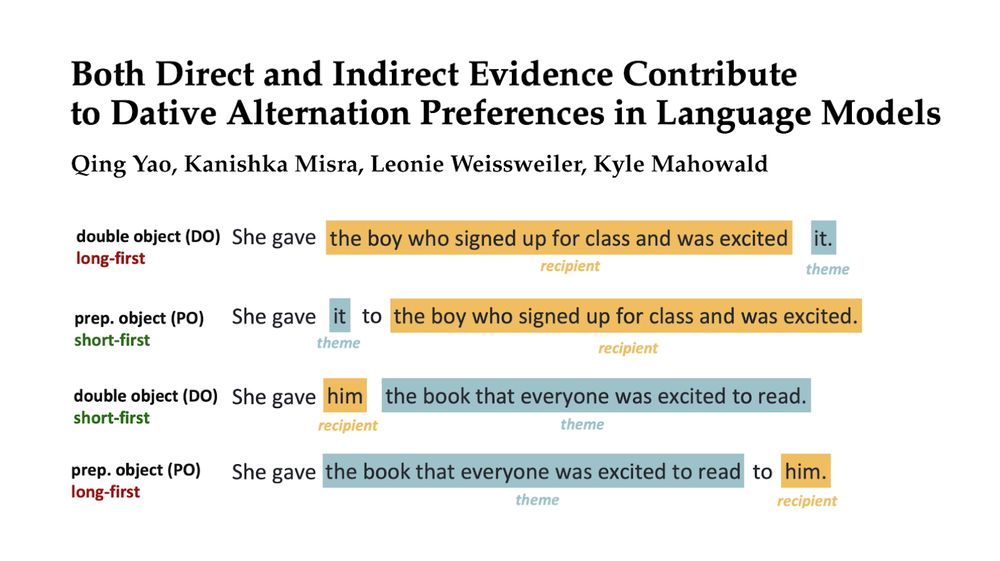

🎯 We demonstrate that ranking-based discriminator training can significantly reduce this gap, and improvements on one task often generalize to others!

🧵👇

When: Tuesday, 11 AM – 1 PM

Where: Poster #75

Happy to chat about my work and topics in computational linguistics & cogsci!

Also, I'm on the PhD application journey this cycle!

Paper info 👇:

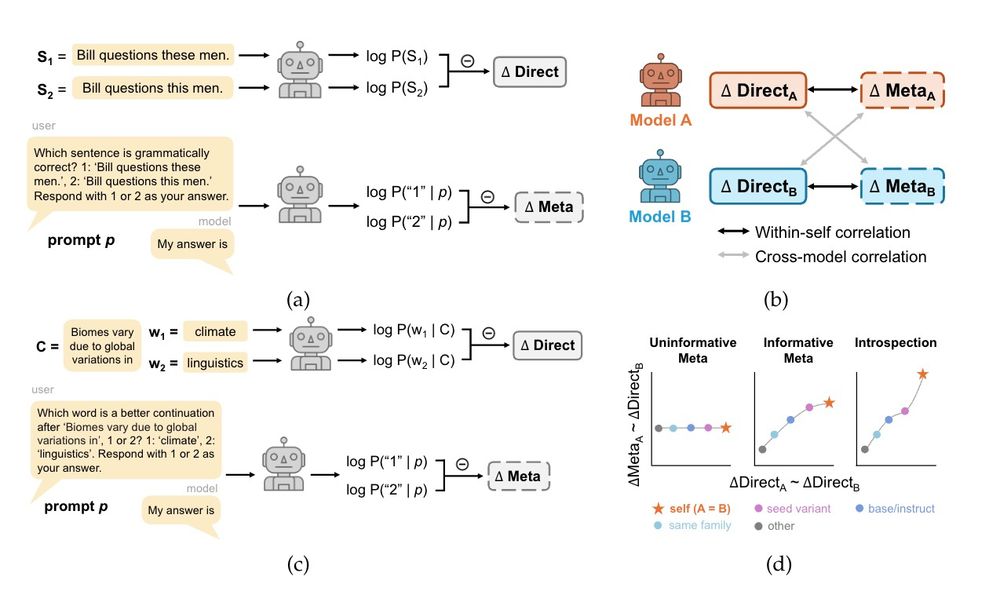

Across models and domains, we did not find evidence that LLMs have privileged access to their own predictions. 🧵(1/8)

@siyuansong.bsky.social Tue am introspection arxiv.org/abs/2503.07513

@qyao.bsky.social Wed am controlled rearing: arxiv.org/abs/2503.20850

@sashaboguraev.bsky.social INTERPLAY ling interp: arxiv.org/abs/2505.16002

I’ll talk at INTERPLAY too. Come say hi!

Will be in Montreal all week and excited to chat about LM interpretability + its interaction with human cognition and ling theory.

New work with @kmahowald.bsky.social and @cgpotts.bsky.social!

🧵👇!

Come join me, @kmahowald.bsky.social, and @jessyjli.bsky.social as we tackle interesting research questions at the intersection of ling, cogsci, and ai!

Some topics I am particularly interested in:

arxiv.org/abs/2503.20850
