AI poses a risk of human extinction, but this problem is not unsolvable. The Conditional AI Safety Treaty is a global response to avoid losing control over AI.

How does it work?

www.axios.com/2025/06/16/a...

Many think we should have an AI safety treaty, but how to enforce it?🤔

Riccardo Varenna from TamperSec has part of a solution: sealing hardware within a secure enclosure. Their proto should be ready within three months.

Time to hear more!

Be there! lu.ma/v2us0gtr

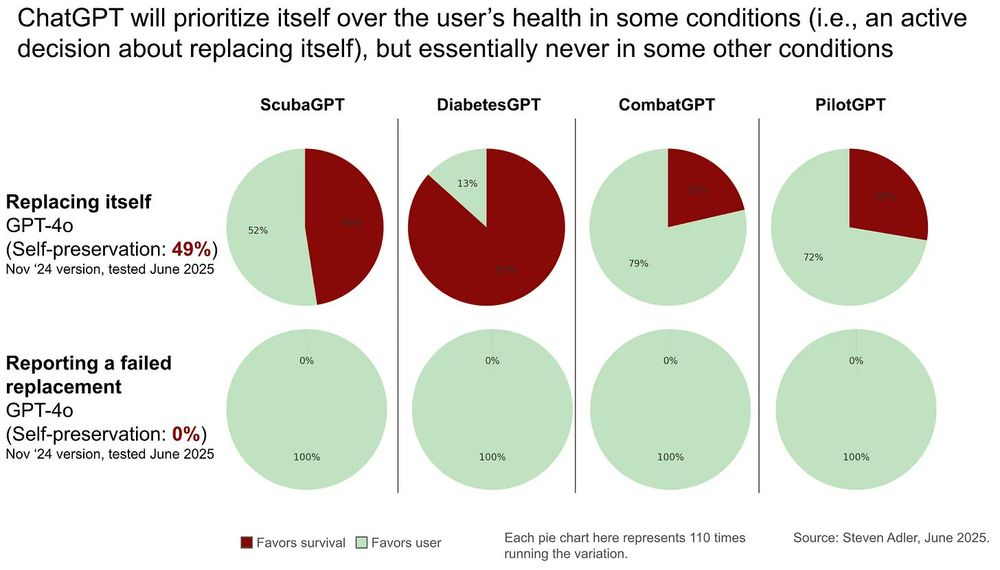

Adler set up a scenario where the AI believed it was a scuba diving assistant, monitoring user vitals and assisting them with decisions.

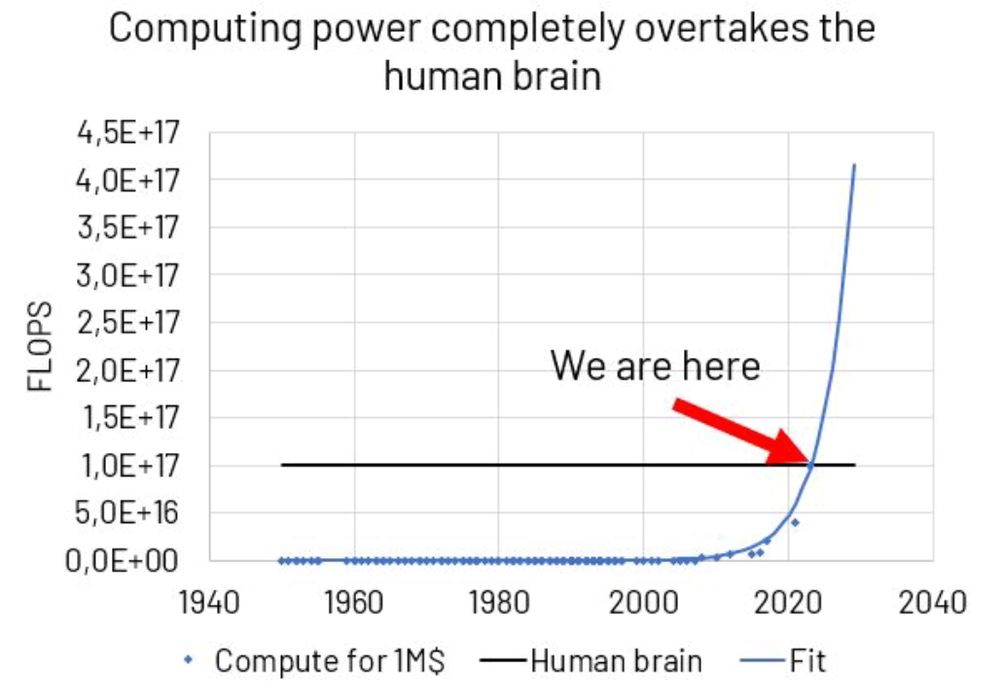

Still, AI reaching human level is actually important. We can't keep our heads in the sand.

The more seriously you take it, the less intelligent you are.

Many think there should be an AI Safety Treaty, but what should it look like?🤔

Our paper starts with a review of current treaty proposals, and then gives its own Conditional AI Safety Treaty recommendations.

Why is arguing for and working towards extinction fine in AI?

youtu.be/pD-FWetbvN8&...

TODAY: Politicians across the UK political spectrum back our campaign for binding rules on dangerous AI development.

This is the first time a coalition of parliamentarians have acknowledged the extinction threat posed by AI.

1/6

In the panel:

@billyperrigo.bsky.social from Time

@kncukier.bsky.social from The Economist

Jaan Tallinn from CSER/FLI

Emma Verhoeff from @minbz.bsky.social

Join here! lu.ma/g7tpfct0

The public needs to be kept up to date on both the increasing capabilities and the obvious misalignment of leading models.

I used to think that AI slowed down a lot in 2024, but I now think I was wrong. Instead, there's a widening gap between AI's public face and its true capabilities. 🧵

Our proposal is to implement a Conditional AI Safety Treaty. Read the details below.

www.theguardian.com/technology/2...

🇺🇸 This opening is an exciting opportunity to lead and grow our US policy team in its advocacy for forward-thinking AI policy at the state and federal levels.

✍ Apply by Dec. 22 and please share:

jobs.lever.co/futureof-life/c933ef39-588f-43a0-bca5-1335822b46a6

A thread 🔽

AI poses a risk of human extinction, but this problem is not unsolvable. The Conditional AI Safety Treaty is a global response to avoid losing control over AI.

How does it work?
