Preprint: arxiv.org/abs/2510.02523

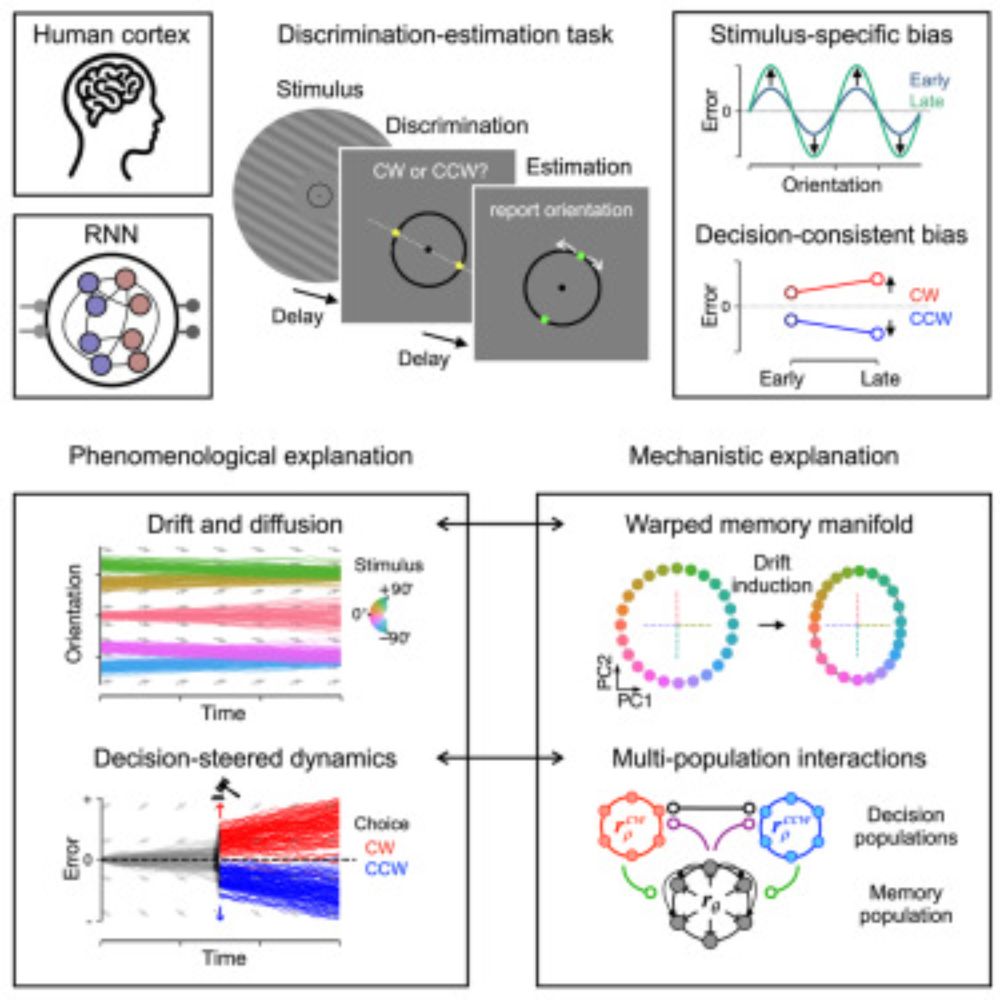

Our perception isn't a perfect mirror of the world. It's often biased by our expectations and beliefs. How do these biases unfold over time, and what shapes their trajectory? A summary thread. (1/13)

I keep coming back to it whenever I’m writing about models of perception.

I especially like this quote; it took me a while to fully wrap my head around it, but I think it touches on something quite fundamental.

🧠📈

This may seem like a diss, but 'is the exposure you get from Nature worth 10k?' has a different answer than 'is it worth paying 10k to publish a PDF online?' (which was always a strawman)

"I published my paper on arxiv and I'm hoping to get it reprinted in Nature"

Also, a curated feed just for vision science content.

Cognitive neuroscience: bsky.app/starter-pack...

Neural engineering & computational neuroscience: bsky.app/starter-pack...

Affective science: bsky.app/starter-pack...

Women in neuroscience: bsky.app/starter-pack...
