Focusing on #reliability in #llm execution with #symbolic #reasoning, resulting in +30% improvements in accuracy while reducing hard-failing requests. It's generally a big win, but it will be extremely hard to adopt.

New to MarkItDown? It is a #Python tool for converting files and #office documents to #Markdown.

#AI #LLM #☠️

www.linkedin.com/posts/daniel...

I think it's nice to keep these strategies in mind while new concepts appear every day. I like the combination with #TextGrad for some crazy performance increases #AI #LLM #performance

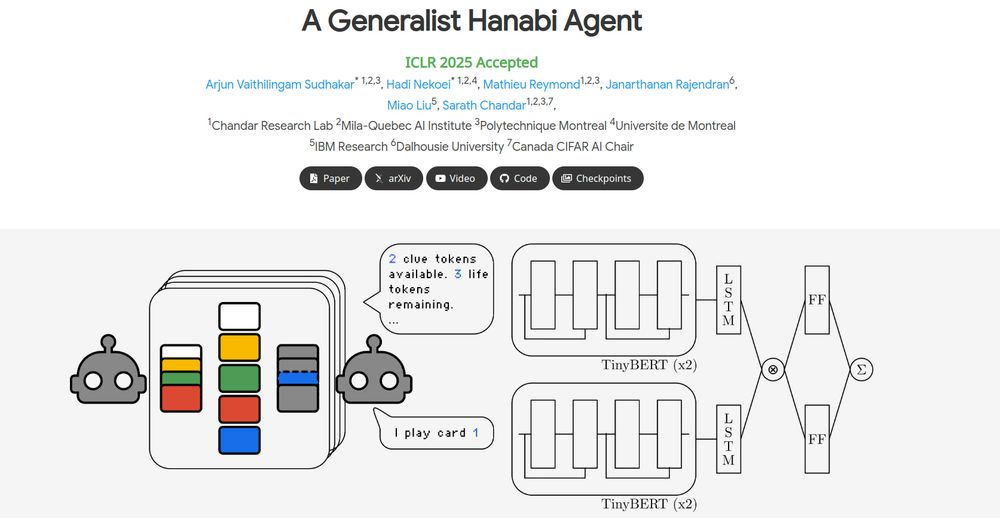

We explore this in our ICLR 2025 paper: A Generalist Hanabi Agent. We develop R3D2, the first agent to master all Hanabi settings and generalize to novel partners! 🚀 #ICLR2025 1/n

Providing first thoughts on structuring larger agentic systems and scaling operations in a trustworthy manner