Grateful our research on this was featured in @washingtonpost.com by @nitasha.bsky.social!

Evidence is mounting that this poses unintended risks, including chats from peer-reviewed research, OpenAI's "sycophancy" debacle, & the Character.AI lawsuits www.washingtonpost.com/technology/2...

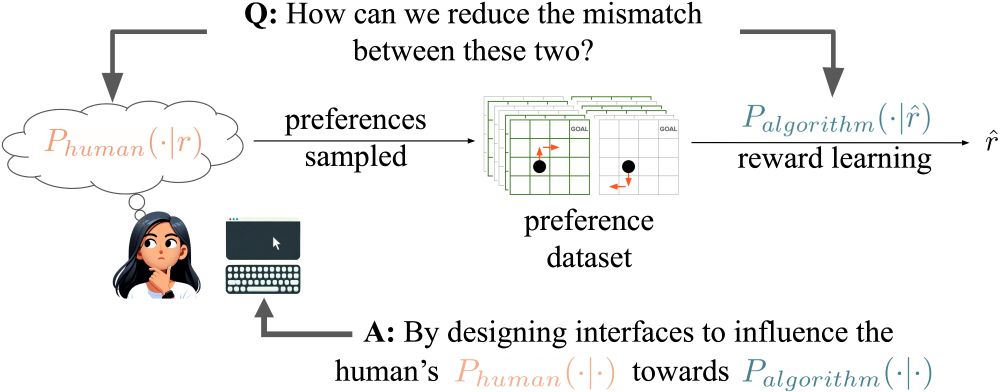

arxiv.org/abs/2412.17128

#AI #MachineLearning #RLHF #Alignment (1/n)