Named after Quetzalcoatl, the Aztec god of creation, Quetzal is a simple yet scalable model for building 3D molecules atom by atom.

📜 arxiv.org/abs/2505.13791

[1/4]

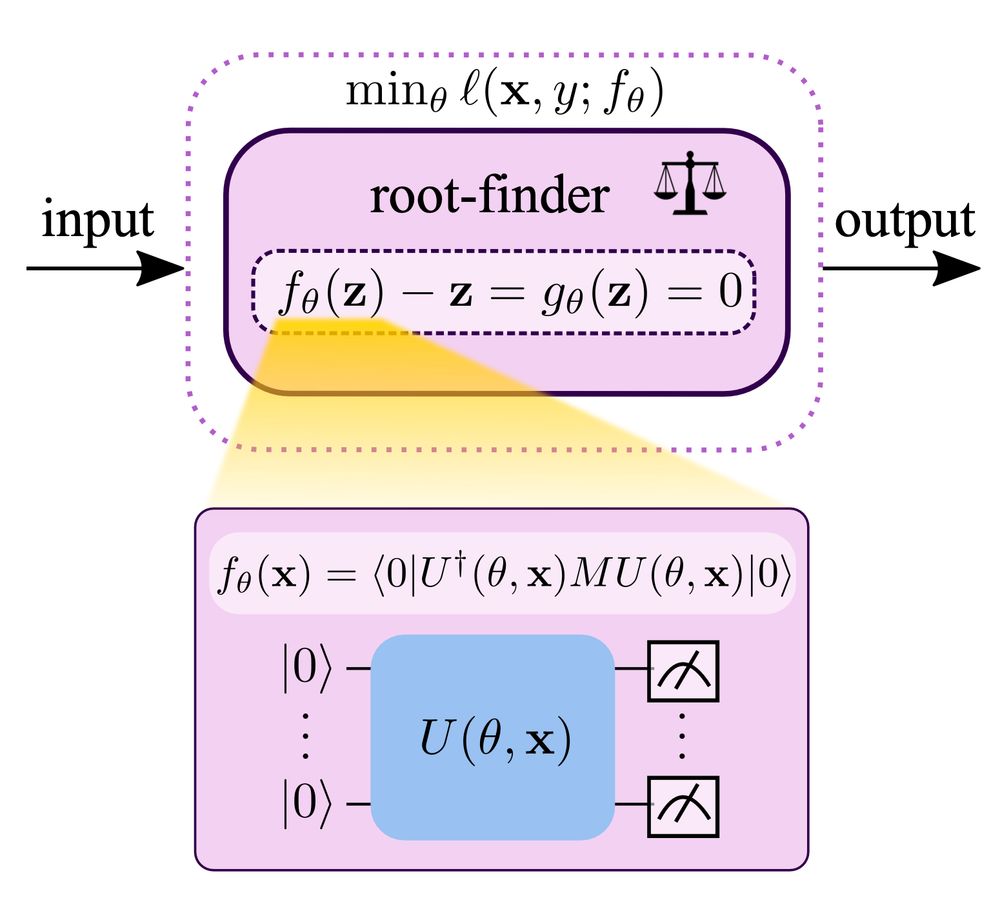

📃 arxiv.org/abs/2505.14941

[1/3]

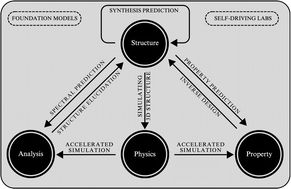

A tomographic representation of materials or molecular descriptors clarifies the ideas of “forward” and “inverse” design, treating them equally.

arxiv.org/abs/2501.18163

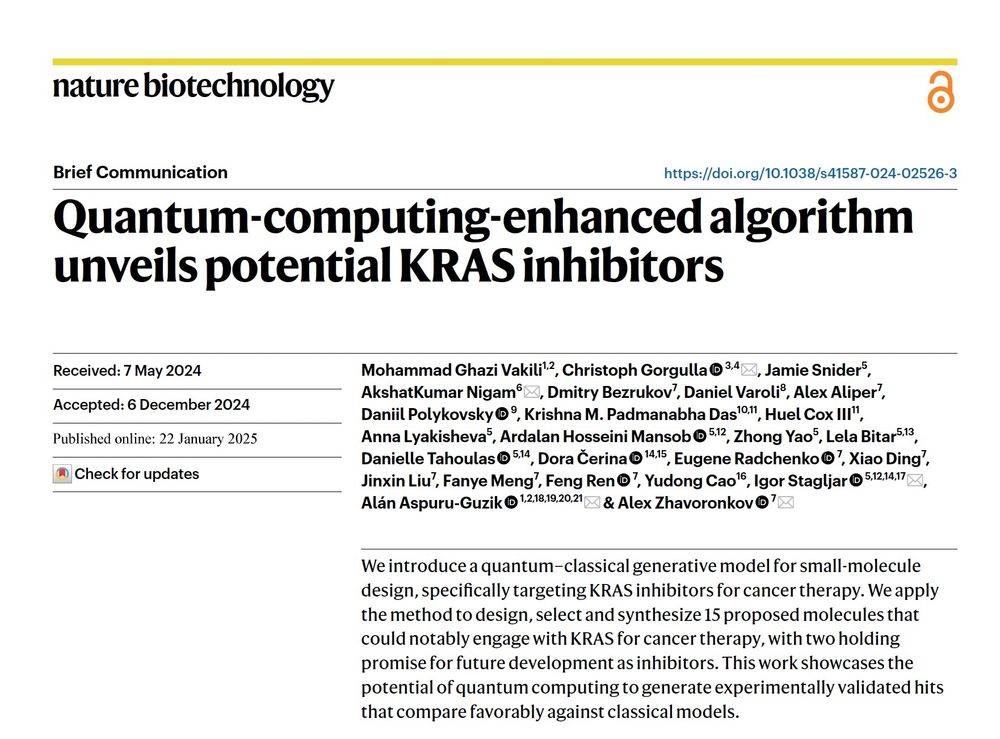

#QuantumComputing #KRAS

@ghazivakili.bsky.social @elonverse.bsky.social Igor Stagljar @thematterlab.bsky.social

Coauthors: Domenico Maisto, Francesco Gregoretti, Giovanni Pezzulo

www.sciencedirect.com/science/arti...

SAEs are interpreter models that help us understand how language models process information internally by decomposing neural activations into interpretable features.
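To make the decomposition concrete, here is a minimal, dependency-free sketch of a sparse autoencoder (SAE) forward pass. The post does not specify an architecture, so this assumes the common formulation: a ReLU encoder into a wider feature space, a linear decoder, and an L1 sparsity penalty. The class name, dimensions, and random weights are all hypothetical; a real SAE would learn its weights from recorded language-model activations.

```python
import random


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


class SparseAutoencoder:
    """Toy SAE: activation -> wide, non-negative feature vector -> reconstruction."""

    def __init__(self, d_model, d_features, seed=0):
        rng = random.Random(seed)
        # Hypothetical random weights; in practice these are trained.
        self.w_enc = [[rng.gauss(0, 0.1) for _ in range(d_model)]
                      for _ in range(d_features)]
        self.w_dec = [[rng.gauss(0, 0.1) for _ in range(d_features)]
                      for _ in range(d_model)]
        self.b_enc = [0.0] * d_features
        self.b_dec = [0.0] * d_model

    def encode(self, x):
        # Subtract the decoder bias, project, then apply ReLU so that
        # every feature activation is non-negative.
        centered = [xi - b for xi, b in zip(x, self.b_dec)]
        pre = [p + b for p, b in zip(matvec(self.w_enc, centered), self.b_enc)]
        return [max(0.0, p) for p in pre]

    def decode(self, features):
        # Reconstruct the activation as a weighted sum of decoder columns.
        return [r + b for r, b in zip(matvec(self.w_dec, features), self.b_dec)]

    def loss(self, x, l1_coeff=1e-3):
        # Reconstruction error plus an L1 penalty that encourages sparsity.
        features = self.encode(x)
        x_hat = self.decode(features)
        mse = sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)
        return mse + l1_coeff * sum(features)  # features >= 0, so sum == L1 norm


sae = SparseAutoencoder(d_model=4, d_features=16)
activation = [0.5, -1.0, 0.25, 2.0]  # stand-in for one model activation
features = sae.encode(activation)
active = [i for i, f in enumerate(features) if f > 0]
print(f"{len(active)} of {len(features)} features active")
```

After training, each feature index that fires for an input is a candidate interpretable unit; the sparsity penalty is what pushes the model toward only a few features being active at once.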

Yann's Facebook post (and the comments that follow) is here:

🚀 Introducing SuperDiff 🦹‍♀️ – a principled method for efficiently combining multiple pre-trained diffusion models solely during inference!

Google provides a comprehensive evaluation of transformer models’ graph reasoning capabilities and demonstrates that they often outperform more domain-specific graph neural networks.

research.google/blog/underst...

🔗 website: sites.google.com/view/fpiwork...

🔥 Call for papers: sites.google.com/view/fpiwork...

more details in thread below👇 🧵

w/ Edwin Yu, Naruki Yoshikawa, @valencekjell.com @aspuru.bsky.social

➡️ doi.org/10.26434/che...

⚛️ Quantum computers are becoming more powerful—but also more complex.

🤔 How do we build AI systems that know how to operate them?

📄 Read the paper: arxiv.org/abs/2412.07978

#AI #LLM #QuantumComputing

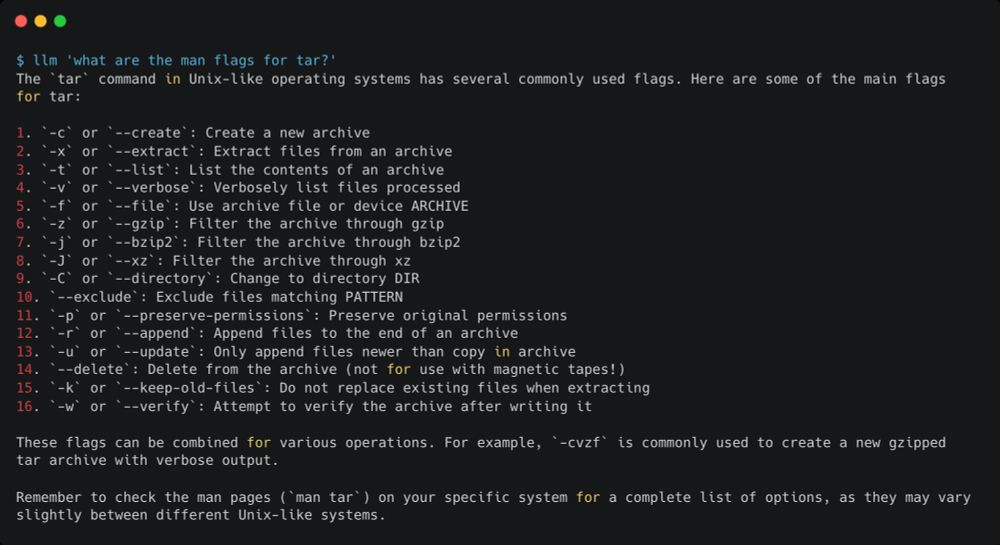

to get tmux to do the heavy lifting to make ShellSage work is genius! Thanks to this, ShellSage is <100 lines of code (plus comments and prompts).

But the result is amazing. Try it out and tell us how you go.

Details here: www.answer.ai/posts/2024-1...

This means "open" AI… isn't very open.

📄 From me, @meredithmeredith.bsky.social and @smw.bsky.social in Nature:

www.nature.com/articles/s41...
