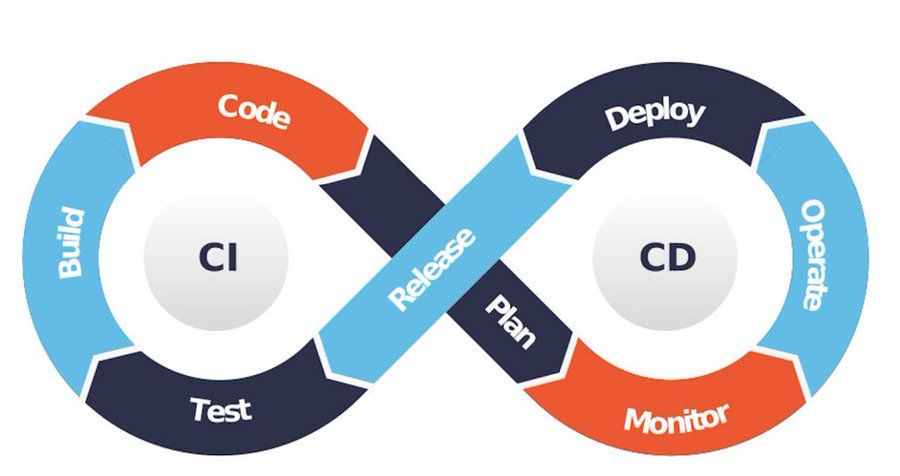

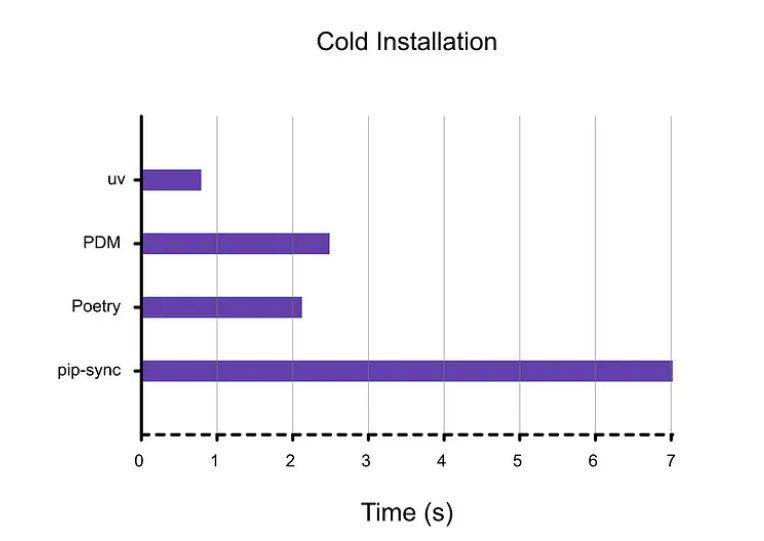

That's a win for DevOps teams, who are constantly optimizing for speed and resource usage, and that has a big impact on the overall business.

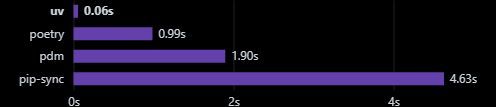

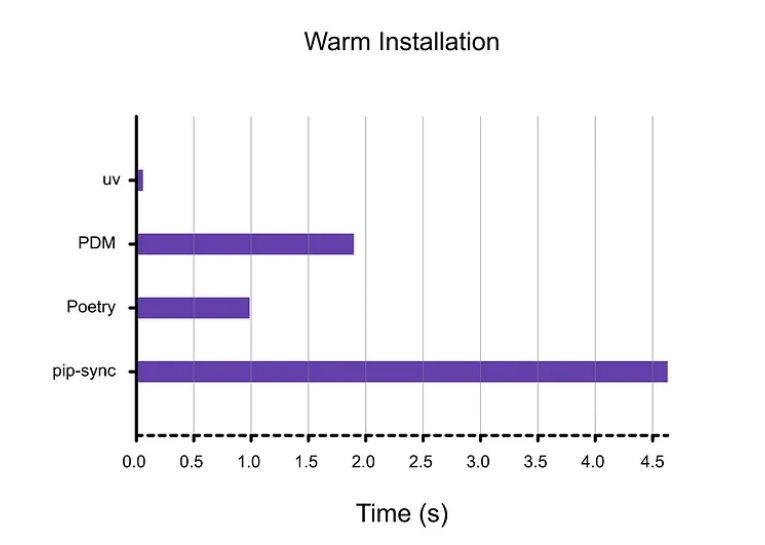

It's not just some script; it's a serious approach to speed, and that blazingly fast reputation is well deserved.

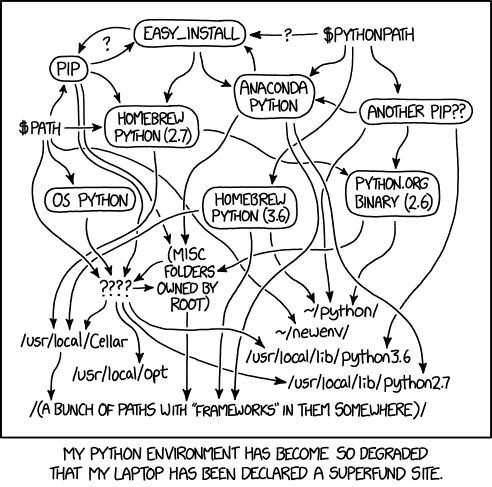

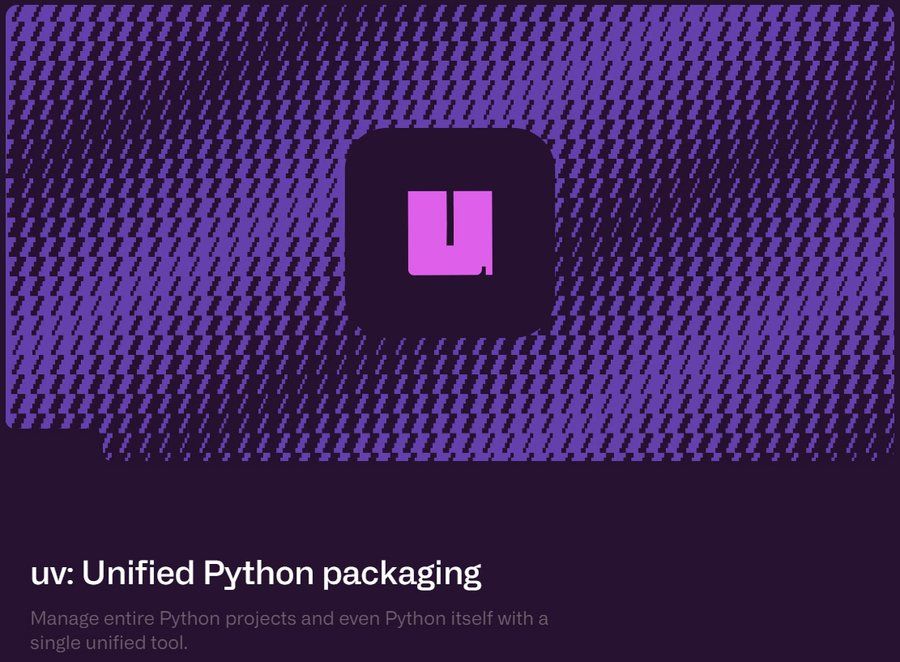

It's about cutting through the chaos we all know too well. Replacing a pile of separate tools with one unified tool makes things much better.

That means less context switching and a much smoother development experience. It's about working smarter, not harder.

As a drop-in replacement, it doesn't force you to change everything; it just makes things a lot smoother, you know?

It's the little things that make a big difference to your productivity.

We all know how long it takes to get started with some projects, right?

uv is so fast you might wonder whether the install actually completed; it really is that quick.

But what if there was a way to instantly speed up your workflow?

It's like Gemini 1.5 Pro got a huge upgrade, adding a range of new features. The fact that it's twice as fast is very impressive, don't you think?

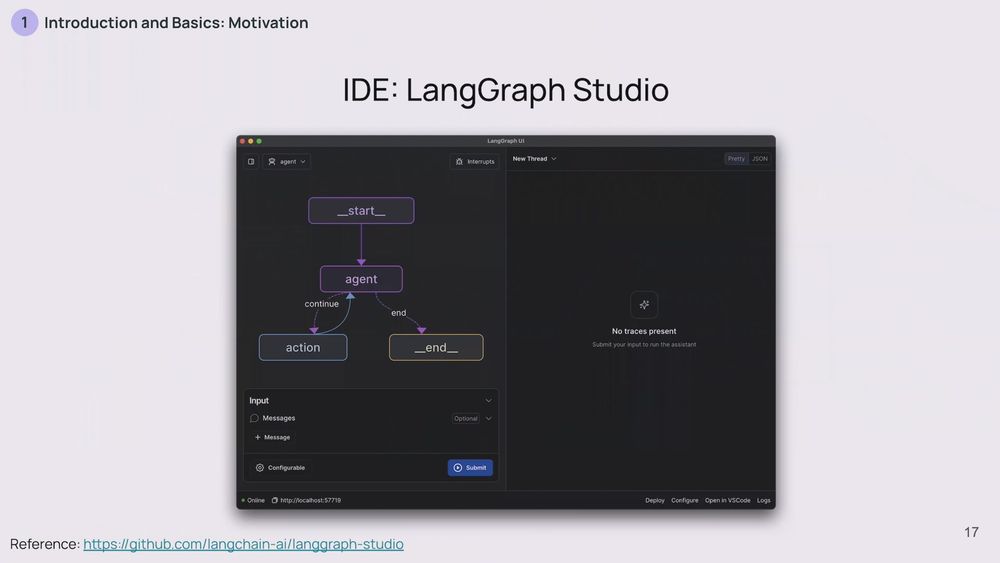

The primary distinction lies in how state is managed: while LLM serving platforms are typically stateless, agent serving platforms must be stateful, maintaining the agent's state on the server side.
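To make the stateless/stateful distinction concrete, here is a minimal sketch using a toy in-memory session store. `fake_model`, `AgentState`, and `AgentServer` are hypothetical names invented for illustration, not any serving platform's API; a real agent server would likely persist state in a database and handle concurrent requests.

```python
from dataclasses import dataclass, field
from typing import Dict, List
import uuid


def fake_model(messages: List[str]) -> str:
    """Hypothetical stand-in for an LLM call: reports how many messages it saw."""
    return f"reply #{len(messages)}"


# Stateless LLM serving: the client resends the whole conversation on every
# request, and the server keeps nothing between calls.
def stateless_generate(history: List[str], user_msg: str) -> str:
    return fake_model(history + [user_msg])


@dataclass
class AgentState:
    """Per-agent state held on the server (here, just the message log)."""
    messages: List[str] = field(default_factory=list)


# Stateful agent serving: the server owns each agent's state, keyed by session id,
# so the client only sends the new message.
class AgentServer:
    def __init__(self) -> None:
        self._sessions: Dict[str, AgentState] = {}

    def create_session(self) -> str:
        session_id = str(uuid.uuid4())
        self._sessions[session_id] = AgentState()
        return session_id

    def step(self, session_id: str, user_msg: str) -> str:
        state = self._sessions[session_id]  # raises KeyError for an unknown session
        state.messages.append(user_msg)
        reply = fake_model(state.messages)
        state.messages.append(reply)  # the server remembers its own reply too
        return reply


if __name__ == "__main__":
    print(stateless_generate([], "hi"))
    server = AgentServer()
    sid = server.create_session()
    print(server.step(sid, "hi"))
    print(server.step(sid, "what next?"))
```

In the stateless path the caller carries the history; in the stateful path the second `step` call sees three messages even though the client sent only one, because the server kept the earlier turn and its reply.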

I used to spend HOURS manually extracting data from invoices. 🤯 Not anymore!

Meet AutoExtract, an AI invoice manager that uses Google Gemini AI to extract information from any format: Excel, PDF, or images.

Check it out:

#ai #openai #google #langchain