It's easier to think of a database as storage layer + data model + query layer (language + optimizer)

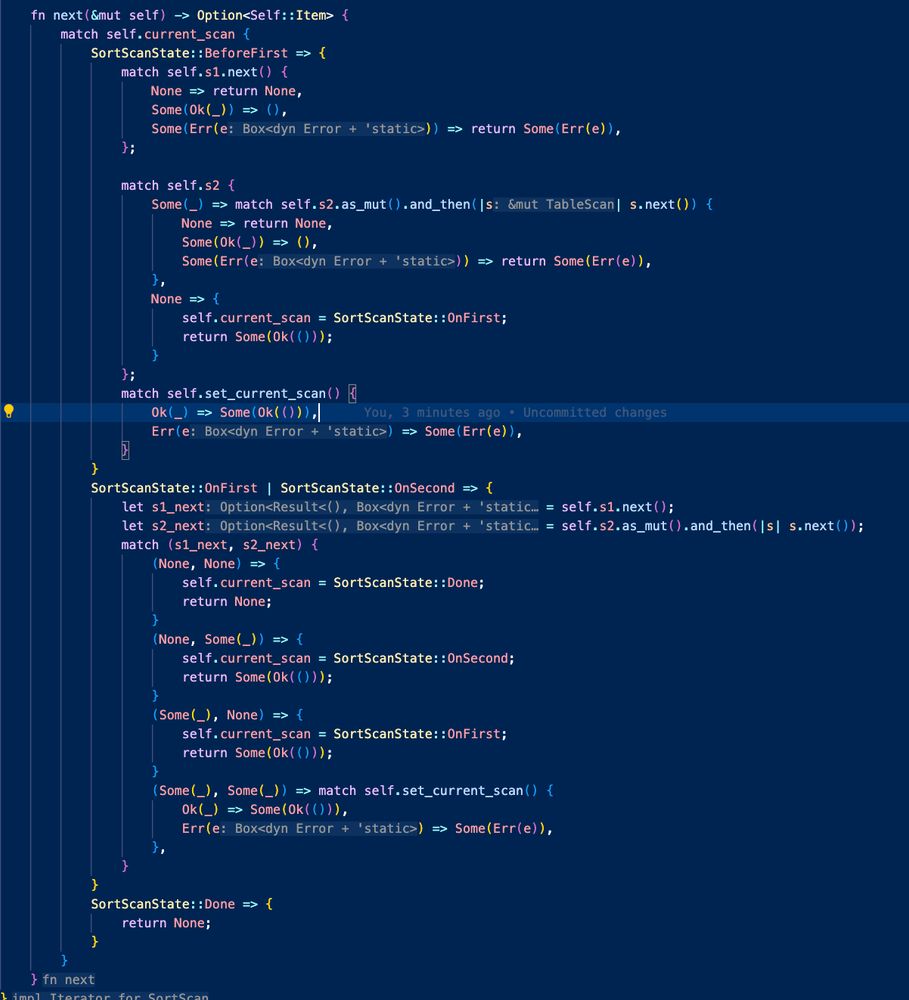

Makes composition so much nicer to look at and reason about

Contiguous memory access isn't enough on its own. You also need to be aware of the cache hardware and how many entries fit per cache line.

Also, using u64 instead of Strings leads to better performance, since a String's contents live in a separate heap allocation while a u64 is stored inline
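A rough way to see the difference, as a sketch assuming a 64-bit target: a `u64` key is 8 bytes stored inline, while a `String` is a three-word header (pointer, capacity, length) whose bytes sit in a separate allocation, so reading the key means following a pointer. The helper names `inline_sizes` and `bytes_live_elsewhere` are made up for illustration.

```rust
use std::mem::size_of;

/// Returns the inline sizes of a `u64` and of a `String` header.
/// Hypothetical helper, for illustration only.
fn inline_sizes() -> (usize, usize) {
    (size_of::<u64>(), size_of::<String>())
}

/// Returns true if the string's bytes are stored outside the `String`
/// header itself, i.e. reading them requires following a pointer.
fn bytes_live_elsewhere(s: &String) -> bool {
    let header_start = s as *const String as usize;
    let header_end = header_start + size_of::<String>();
    let data = s.as_ptr() as usize;
    data < header_start || data >= header_end
}
```

On a typical 64-bit build `inline_sizes()` should report 8 bytes for the `u64` and 24 for the `String` header, and `bytes_live_elsewhere(&String::from("user:42"))` should be true.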

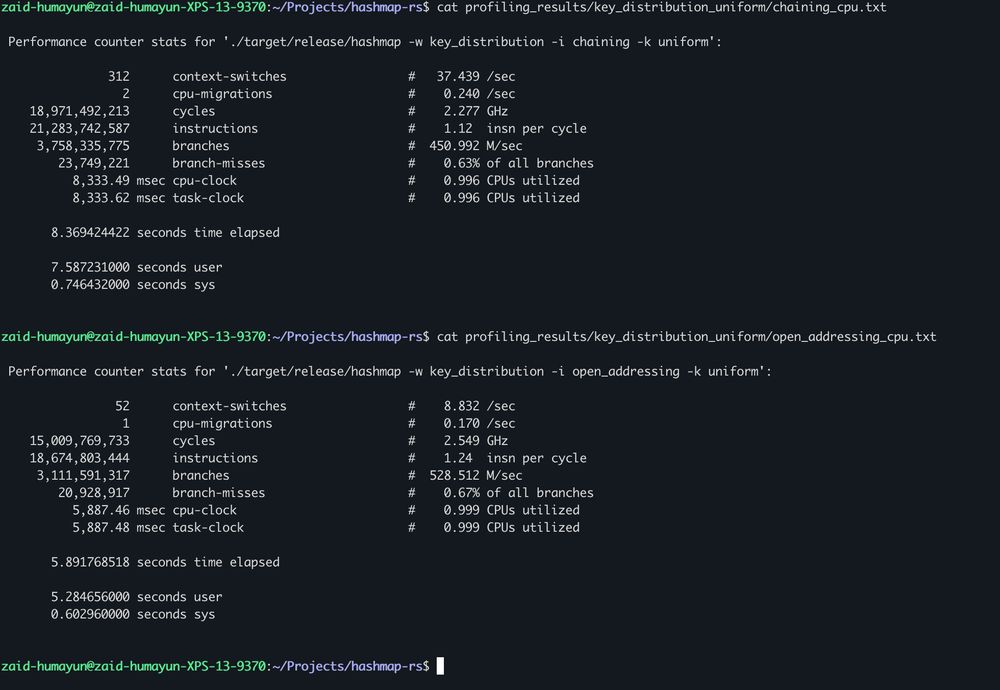

Open addressing ends up performing better but that's only due to fewer overall instructions

This should be more cache friendly since the entire memory is contiguously allocated
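Assuming "this" refers to the open addressing variant, here is a minimal sketch of why its memory is contiguous: every slot lives in one `Vec`, and a collision just moves on to the next slot. The names `Cell` and `ProbeTable`, the modulo "hash", and the fixed capacity are all simplifications for illustration, not the implementation from the blog post.

```rust
/// One slot of the table. Names are illustrative only.
#[derive(Clone, Copy)]
enum Cell {
    Empty,
    Occupied { key: u64, value: u64 },
}

/// Fixed-capacity open-addressing table with linear probing
/// (no resizing and no deletion, to keep the sketch short).
struct ProbeTable {
    cells: Vec<Cell>, // a single contiguous allocation
}

impl ProbeTable {
    fn with_capacity(capacity: usize) -> Self {
        assert!(capacity > 0);
        ProbeTable { cells: vec![Cell::Empty; capacity] }
    }

    /// Placeholder hash: key modulo capacity. A real table would use a proper hasher.
    fn home(&self, key: u64) -> usize {
        (key % self.cells.len() as u64) as usize
    }

    /// Inserts or overwrites. Returns false if the table is full.
    fn insert(&mut self, key: u64, value: u64) -> bool {
        let n = self.cells.len();
        let start = self.home(key);
        for step in 0..n {
            let i = (start + step) % n; // probe the neighbouring slot next
            let cell = self.cells[i];
            match cell {
                Cell::Empty => {
                    self.cells[i] = Cell::Occupied { key, value };
                    return true;
                }
                Cell::Occupied { key: k, .. } if k == key => {
                    self.cells[i] = Cell::Occupied { key, value };
                    return true;
                }
                _ => {}
            }
        }
        false
    }

    fn get(&self, key: u64) -> Option<u64> {
        let n = self.cells.len();
        let start = self.home(key);
        for step in 0..n {
            let cell = self.cells[(start + step) % n];
            match cell {
                Cell::Empty => return None,
                Cell::Occupied { key: k, value } if k == key => return Some(value),
                _ => {}
            }
        }
        None
    }
}
```

Because probing only ever touches adjacent indices of the same `Vec`, a lookup that collides usually stays within the same or the next cache line.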

Fragmented memory should theoretically lead to poorer cache performance.

I wrote a small blog post and I'll cover the high-level points in this 🧵

Presumably because I pay the cost of pointer chases to get the String data along with the cost of looking up the status separately

The entry type for my open addressing variant is an enum which occupies 24 bytes when u64 is used for both key and value. Each cache line is 64 bytes, which means only 2 whole entries fit per line (64 / 24 ≈ 2.67), with a third straddling the boundary
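The arithmetic can be checked with `std::mem::size_of`. This is a sketch under assumptions: the variant names below are guesses at the shape of such an enum, not the actual type, and the 64-byte cache line is the common x86-64 value.

```rust
use std::mem::size_of;

/// Assumed shape of an open-addressing slot. The variant names are
/// hypothetical and not taken from the original implementation.
#[allow(dead_code)]
enum Slot<K, V> {
    Empty,
    Deleted,
    Occupied { key: K, value: V },
}

/// Typical cache line size on x86-64, assumed here.
const CACHE_LINE_BYTES: usize = 64;

/// Number of slots that fit entirely within one cache line.
fn whole_slots_per_line<K, V>() -> usize {
    CACHE_LINE_BYTES / size_of::<Slot<K, V>>()
}
```

With `u64` keys and values the payload is 16 bytes, and since a `u64` has no spare bit patterns the discriminant needs its own space, which alignment rounds up to 8 bytes, giving 24. `whole_slots_per_line::<u64, u64>()` is therefore 2 on a 64-bit target.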

This is just plain strange...

Chaining uses a LinkedList, which adds a lot of overhead in terms of instructions
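For comparison, a minimal sketch of what a chained table built on `std::collections::LinkedList` might look like. I'm assuming the standard library list is what's meant; `ChainedTable`, the modulo "hash", and the fixed bucket count are illustrative simplifications.

```rust
use std::collections::LinkedList;

/// Separate-chaining table: one `LinkedList` per bucket.
/// Illustrative only, with a fixed number of buckets.
struct ChainedTable {
    buckets: Vec<LinkedList<(u64, u64)>>,
}

impl ChainedTable {
    fn with_buckets(n: usize) -> Self {
        assert!(n > 0);
        ChainedTable { buckets: (0..n).map(|_| LinkedList::new()).collect() }
    }

    /// Placeholder hash: key modulo bucket count.
    fn bucket_index(&self, key: u64) -> usize {
        (key % self.buckets.len() as u64) as usize
    }

    fn insert(&mut self, key: u64, value: u64) {
        let i = self.bucket_index(key);
        // Walk the chain: each hop dereferences a separately allocated node.
        for entry in self.buckets[i].iter_mut() {
            if entry.0 == key {
                entry.1 = value;
                return;
            }
        }
        // Appending allocates a new node on the heap.
        self.buckets[i].push_back((key, value));
    }

    fn get(&self, key: u64) -> Option<u64> {
        let i = self.bucket_index(key);
        self.buckets[i].iter().find(|(k, _)| *k == key).map(|&(_, v)| v)
    }
}
```

Every insert that misses allocates a node and every lookup follows node pointers, which is extra work per operation compared with indexing into a flat array.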
