PhD student at Berkeley, previously CS at MIT.

https://rajivmovva.com/

Despite recent results, SAEs aren't dead! They can still be useful to mech interp, and also much more broadly: across FAccT, computational social science, and ML4H. 🧵

Draft: arxiv.org/abs/2502.04382

Python package: github.com/rmovva/Hypot...

Demo: hypothesaes.org

9/9

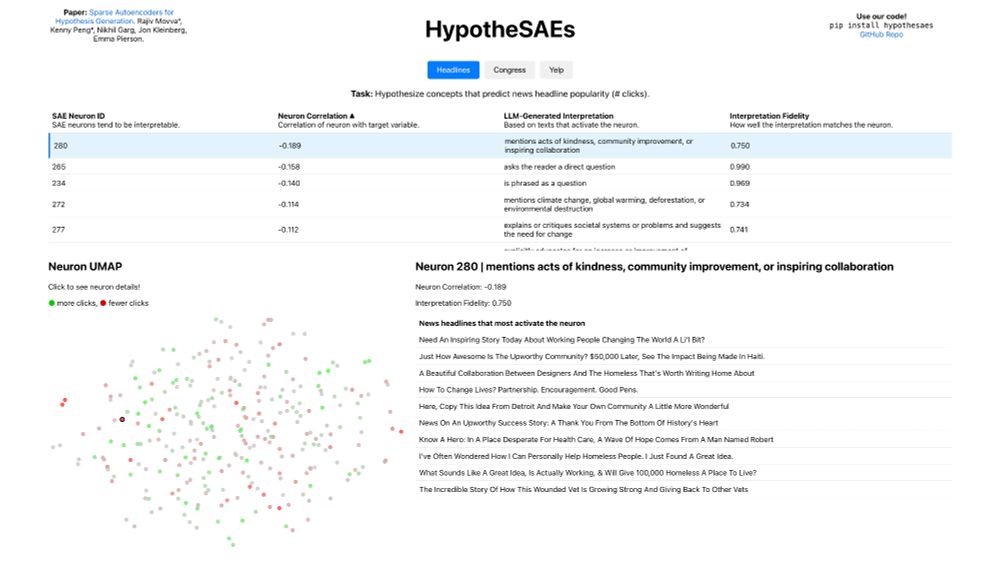

You can see every SAE neuron in UMAP space, colored by whether the neuron correlates positively or negatively with the target variable. 8/

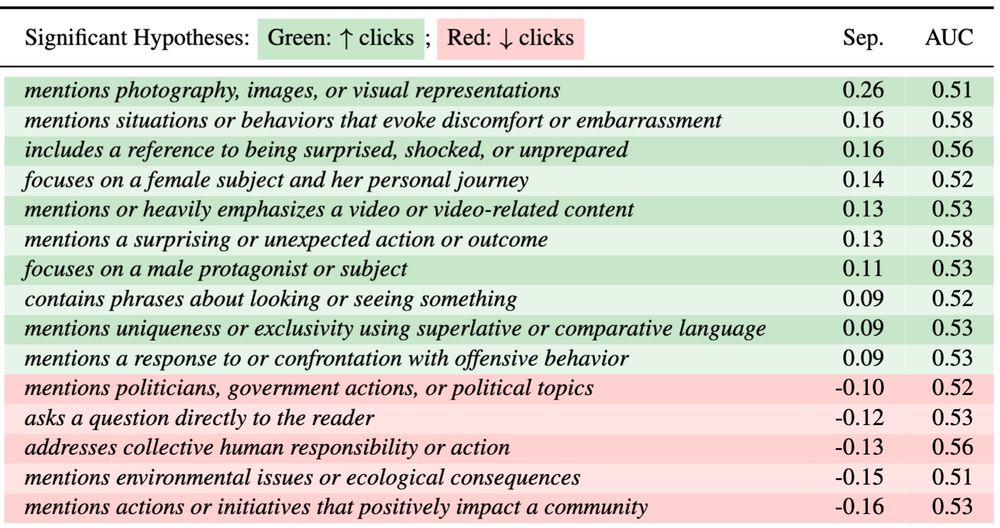

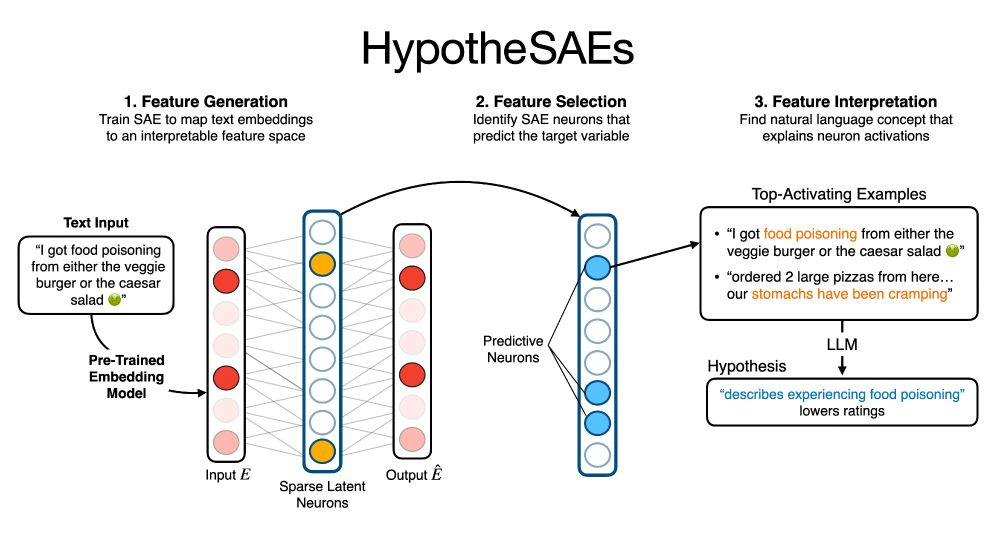

Our method, HypotheSAEs, produces interpretable text features that predict a target variable, e.g. features in news headlines that predict engagement. 🧵1/
