arXiv papers bot: @paper.bsky.social

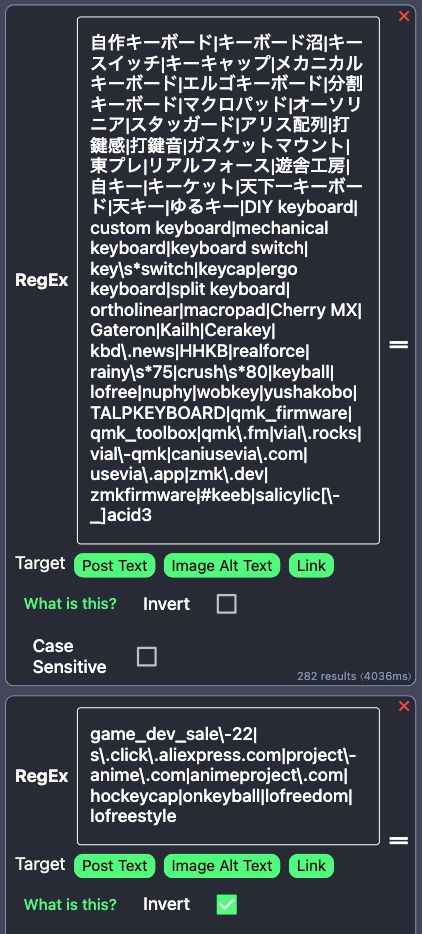

layer('japanese_eisuu', '英数 + ijkl').manipulators([

map('i').to('↑'),

map('j').to('←'),

map('k').to('↓'),

map('l').to('→'),

]),

Also, I used Deno for the first time in a while, and for a simple program like this it is quite painless.

github.com/susumuota/ka...

I have fixed @paper.bsky.social to allow 2000 chars.

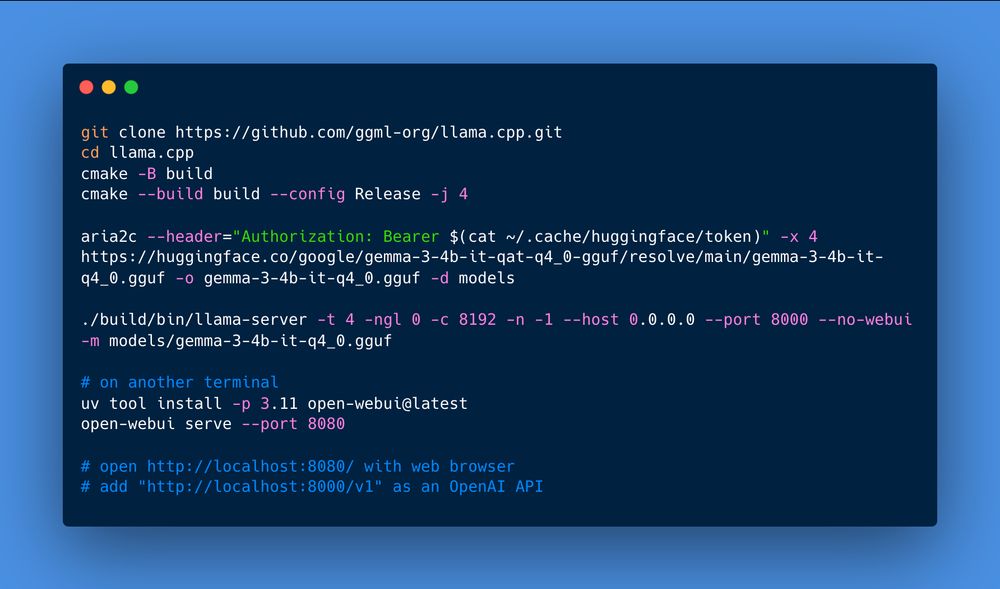

huggingface.co/google/gemma...

default: selenized-white

invert: selenized-dark

light: selenized-light

git diff --cached | sgpt "Generate a commit message following the Conventional Commits specification. e.g. feat, fix, chore, docs, etc."
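Generated messages do not always follow the requested format, so a quick check of the first line can help. Here is a minimal sketch in Python, assuming the header shape `type(scope)!: description` from the Conventional Commits spec. The `TYPES` tuple and the `is_conventional` helper are my own illustrative names, and the list of allowed types is an assumption (the spec itself only defines `feat` and `fix`).

```python
import re

# Allowed types: an assumed, commonly used list, not something mandated by the spec.
TYPES = ("feat", "fix", "chore", "docs", "style", "refactor",
         "perf", "test", "build", "ci", "revert")

# Header shape: type, optional "(scope)", optional "!", then ": " and a description.
HEADER_RE = re.compile(
    r"^(?P<type>[a-z]+)"
    r"(\((?P<scope>[^()\s]+)\))?"
    r"(?P<breaking>!)?"
    r": (?P<description>\S.*)$"
)


def is_conventional(message: str) -> bool:
    """Return True if the first line of `message` looks like a Conventional Commits header."""
    lines = message.strip().splitlines()
    if not lines:
        return False
    match = HEADER_RE.match(lines[0])
    return bool(match) and match.group("type") in TYPES
```

For example, `is_conventional("fix: handle empty diff")` passes, while `is_conventional("Updated stuff")` does not. It only looks at the header line, so bodies and footers are not validated.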

Looks a bit surreal 😆

storage.googleapis.com/deepmind-med...

Model files not yet available.

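oracular == オラキュラ ("orakyura") == オラオラ系 ("oraora-kei", slang for an aggressive, macho type)

It's a mystery what logic the translation is following.

Original article: https://www.nytimes.com/2023/07/18/magazine/wikipedia-ai-chatgpt.html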

I have confirmed that Llama-2-13b-chat works well on my laptop (MacBook Pro 2020) with llama.cpp, although it looks heavily "censored". It would probably be better to fine-tune the base llama-2-13b (non-chat) model on an uncensored dataset.

"Useless but mildly interesting language model using compressors built-in to Python."

It calculates the probability of the next token from

len(compress(training_data + prompt + next_token))

A shorter compressed length means a higher probability.

https://github.com/Futrell/ziplm
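To make the idea concrete, here is a rough sketch of my own, not the code from the repository. It assumes `zlib` as the compressor and turns compressed lengths into probabilities with a softmax over negative lengths. The function name `next_token_probs` and the `conversion` factor (`log(256)`, treating each compressed byte as 8 bits of evidence) are assumptions for illustration.

```python
import math
import zlib


def next_token_probs(training_data: str, prompt: str, vocabulary: list[str],
                     conversion: float = math.log(256)) -> dict[str, float]:
    """Score each candidate token by how little it adds to the compressed length."""
    context = training_data + prompt

    # Compressed length (in bytes) of context + candidate, for every candidate token.
    lengths = {
        token: len(zlib.compress((context + token).encode("utf-8")))
        for token in vocabulary
    }

    # Softmax over negative lengths: shorter output -> larger weight.
    # Subtracting the shortest length keeps the exponentials numerically stable.
    shortest = min(lengths.values())
    weights = {
        token: math.exp(-(length - shortest) * conversion)
        for token, length in lengths.items()
    }
    total = sum(weights.values())
    return {token: weight / total for token, weight in weights.items()}
```

Calling `next_token_probs("abcabcabc" * 50, "ab", ["a", "b", "c"])` returns a distribution over the three candidates. Since compressed sizes come in whole bytes, several tokens often tie, which is part of why the model is "useless but mildly interesting".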

"llm-jeopardy: Automated prompting and scoring framework to evaluate LLMs using updated human knowledge prompts"

https://github.com/aigoopy/llm-jeopardy

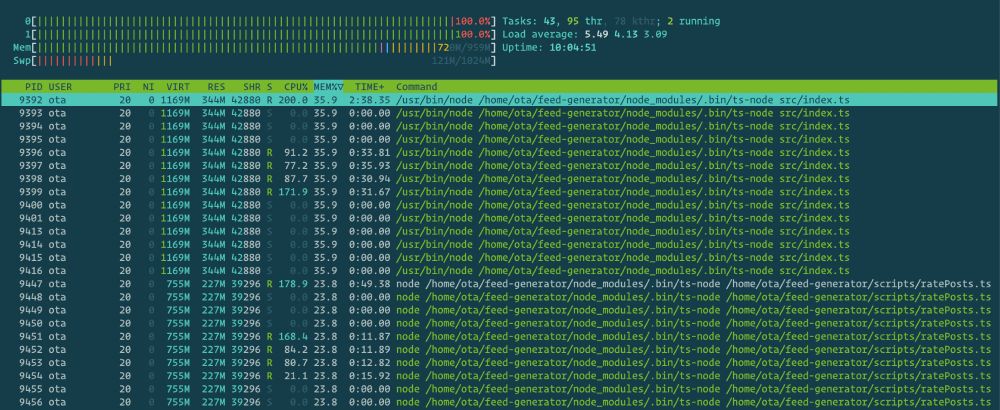

- NVIDIA L4 ($0.22/h)

- 14 hours

- total cost ~$3

The eval/loss curve clearly shows overfitting, but the MMLU score still seems to improve...

Anyway, if this works well, there is no need to spend several days training on A100 machines at a cost of a few hundred dollars.
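The cost arithmetic above can be sketched as follows. The hourly rate and duration are the figures from this post. The $300 A100 budget is only a hypothetical stand-in for "a few hundred dollars", not a measured number.

```python
L4_HOURLY_USD = 0.22   # NVIDIA L4 price per hour (from the post)
TRAINING_HOURS = 14    # training time on the L4 (from the post)

# Hypothetical stand-in for "a few hundred dollars" of A100 time (an assumption).
A100_BUDGET_USD = 300.0


def training_cost(hourly_usd: float, hours: float) -> float:
    """Total cost of a run billed by the hour."""
    return hourly_usd * hours


l4_cost = training_cost(L4_HOURLY_USD, TRAINING_HOURS)
ratio = A100_BUDGET_USD / l4_cost

print(f"L4 run: ${l4_cost:.2f}")  # prints "L4 run: $3.08"
print(f"Assumed A100 budget is about {ratio:.0f}x the L4 run")
```

So even with a generous margin of error on the A100 figure, the L4 run is roughly two orders of magnitude cheaper.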
