🔗 NDIF: https://ndif.us

🧰 NNsight API: https://nnsight.net

😸 GitHub: https://github.com/ndif-team/nnsight

arXiv: arxiv.org/abs/2410.22366

Project Website: sdxl-unbox.epfl.ch/

For any questions about the application or the NDIF platform, please contact us at info@ndif.us.

1. Be in the first cohort of users to access models beyond our whitelist

2. Directly control which models are hosted on the NDIF backend

3. Receive guided support on their project from the NDIF team

4. Give feedback that guides the future user experience

www.youtube.com/@NDIFTeam

🔗 nnsight.net/notebooks/m...

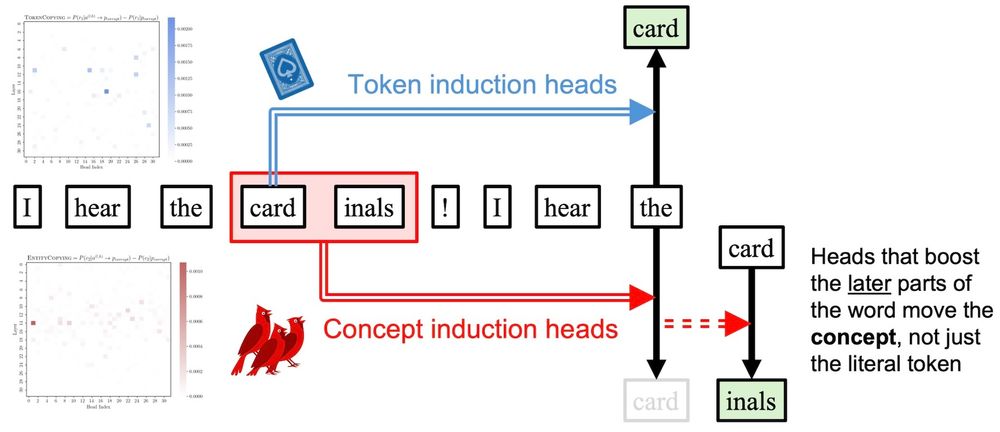

🔗 dualroute.baulab.info/