Joachim Baumann

@joachimbaumann.bsky.social

Postdoc @milanlp.bsky.social / Incoming Postdoc @stanfordnlp.bsky.social / Computational social science, LLMs, algorithmic fairness

The 94% LLM hacking success rate is achieved by annotating data with several model-prompt configs, then choosing the one that yields the desired result (70% if considering SOTA models only).

The 31-50% risk reflects well-intentioned researchers who just run one reasonable config w/o cherry-picking.

September 14, 2025 at 6:56 AM
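A minimal sketch of the gap between those two numbers, under a toy error model that is an assumption and not the paper's setup: the true labels have the same base rate in both groups (so the null holds), and each hypothetical config flips labels at its own group-specific rate. The function names, error rates, and sample sizes are made up for illustration.

```python
import math
import random


def two_proportion_p_value(k1, n1, k2, n2):
    """Two-sided p-value of a pooled two-proportion z-test."""
    pooled = (k1 + k2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    if se == 0:
        return 1.0
    z = (k1 / n1 - k2 / n2) / se
    return math.erfc(abs(z) / math.sqrt(2))


def simulate(n_datasets=200, n_per_group=200, n_configs=20, alpha=0.05, seed=0):
    """Return (single-config rate, cherry-picked rate) of false positives."""
    rng = random.Random(seed)
    single_hits = 0
    any_hits = 0
    for _ in range(n_datasets):
        # True labels: identical base rate in both groups, so there is no real effect.
        groups = [[rng.random() < 0.3 for _ in range(n_per_group)] for _ in range(2)]
        significant = []
        for _ in range(n_configs):
            counts = []
            for labels in groups:
                # Assumed error model: each config flips labels at a
                # group-specific rate (annotation error correlated with group).
                flip = rng.uniform(0.05, 0.25)
                counts.append(sum((not y) if rng.random() < flip else y for y in labels))
            p = two_proportion_p_value(counts[0], n_per_group, counts[1], n_per_group)
            significant.append(p < alpha)
        single_hits += significant[0]   # researcher who runs one config
        any_hits += any(significant)    # researcher who picks the "best" config
    return single_hits / n_datasets, any_hits / n_datasets


single_rate, cherry_rate = simulate()
print(f"one config: {single_rate:.2f}, best of 20 configs: {cherry_rate:.2f}")
```

The exact rates depend entirely on the assumed error model. The point is only that the cherry-picked rate can never be lower than the single-config rate, and is typically far higher.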

Thank you, Florian :) We use two methods, CDI and DSL. Both debias LLM annotations and reduce false positive conclusions to about 3-13%, on average, but at the cost of a much higher Type II risk (up to 92%). The human-only conclusions have a pretty low Type I risk as well, at a lower Type II risk.

September 14, 2025 at 6:55 AM

Great question! Performance and LLM hacking risk are negatively correlated. So easy tasks do have lower risk. But even tasks with 96% F1 score showed up to 16% risk of wrong conclusions. Validation is important because high annotation performance doesn't guarantee correct conclusions.

September 12, 2025 at 4:15 PM

We used 199 different prompts total: some from prior work, others based on human annotation guidelines, and some simple semantic paraphrases

Even when LLMs correctly identify significant effects, estimated effect sizes still deviate from true values by 40-77% (see Type M risk, Table 3 and Figure 3)

September 12, 2025 at 3:29 PM
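One way to read the "deviate by 40-77%" figure is as a relative deviation between the LLM-based and the reference effect estimate. The sketch below assumes that reading; the paper's exact Type M definition may differ, and the numbers in the example are invented.

```python
def relative_deviation(estimated, true):
    """Relative deviation |estimated - true| / |true| of one effect size."""
    if true == 0:
        raise ValueError("relative deviation is undefined for a true effect of 0")
    return abs(estimated - true) / abs(true)


def mean_relative_deviation(pairs):
    """Average relative deviation over (estimated, true) effect-size pairs."""
    if not pairs:
        raise ValueError("need at least one (estimated, true) pair")
    return sum(relative_deviation(est, true) for est, true in pairs) / len(pairs)


# Invented example: one overestimated and one underestimated coefficient.
example_pairs = [(0.27, 0.18), (0.10, 0.20)]
print(f"mean relative deviation: {mean_relative_deviation(example_pairs):.0%}")
```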

Thank you to the amazing @paul-rottger.bsky.social @aurman21.bsky.social @albertwendsjo.bsky.social @florplaza.bsky.social @jbgruber.bsky.social @dirkhovy.bsky.social for this fun collaboration!!

September 12, 2025 at 10:33 AM

Why this matters: LLM hacking affects any field using AI for data analysis–not just computational social science!

Please check out our preprint, we'd be happy to receive your feedback!

#LLMHacking #SocialScience #ResearchIntegrity #Reproducibility #DataAnnotation #NLP #OpenScience #Statistics

September 12, 2025 at 10:33 AM

The good news: we found solutions that help mitigate this:

✅ Larger, more capable models are safer (but no guarantee).

✅ Few human annotations beat many AI annotations.

✅ Testing several models and configurations on held-out data helps.

✅ Pre-registering AI choices can prevent cherry-picking.

September 12, 2025 at 10:33 AM
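The held-out testing tip can be sketched as follows: choose the model-prompt config by its agreement with a small human-labeled validation set, never by the downstream p-value. This is a hypothetical illustration, not the paper's pipeline; the config names and labels are invented, and plain accuracy stands in for whatever metric fits the task.

```python
def accuracy(predicted, gold):
    """Share of held-out items where the LLM label matches the human label."""
    if len(predicted) != len(gold) or not gold:
        raise ValueError("predicted and gold must be non-empty and equally long")
    return sum(p == g for p, g in zip(predicted, gold)) / len(gold)


def select_config(predictions_by_config, gold):
    """Pick the config with the best held-out accuracy; also return all scores."""
    scores = {name: accuracy(preds, gold) for name, preds in predictions_by_config.items()}
    best = max(scores, key=scores.get)
    return best, scores


# Invented held-out human labels and LLM annotations for three configs.
gold_labels = [1, 0, 1, 1, 0, 0, 1, 0]
candidates = {
    "model_a/prompt_1": [1, 0, 1, 0, 0, 0, 1, 0],
    "model_a/prompt_2": [1, 1, 1, 1, 0, 1, 1, 0],
    "model_b/prompt_1": [0, 0, 1, 1, 1, 0, 0, 0],
}
best_config, held_out_scores = select_config(candidates, gold_labels)
print(best_config, held_out_scores)
```

Fixing the chosen config before running the regression, ideally in a pre-registration, keeps the selection step independent of the result it will later produce.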

- Researchers using SOTA models like GPT-4o face a 31-50% chance of false conclusions for plausible hypotheses.

- Risk peaks near significance thresholds (p=0.05), where 70% of "discoveries" may be false.

- Regression correction methods often don't work as they trade off Type I vs. Type II errors.

September 12, 2025 at 10:33 AM

We tested 18 LLMs on 37 social science annotation tasks (13M labels, 1.4M regressions). By trying different models and prompts, you can make 94% of null results appear statistically significant–or flip findings completely 68% of the time.

Importantly, this also concerns well-intentioned researchers!

September 12, 2025 at 10:33 AM

Not at this point, but the preprint should be ready soon

July 30, 2025 at 5:50 AM

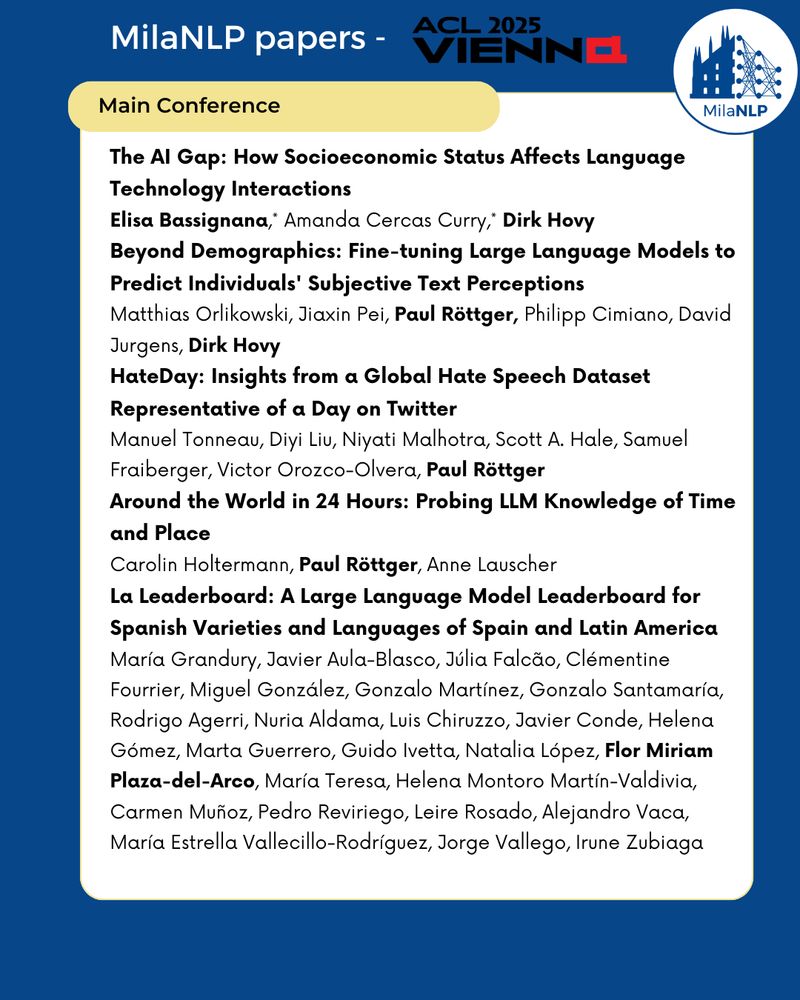

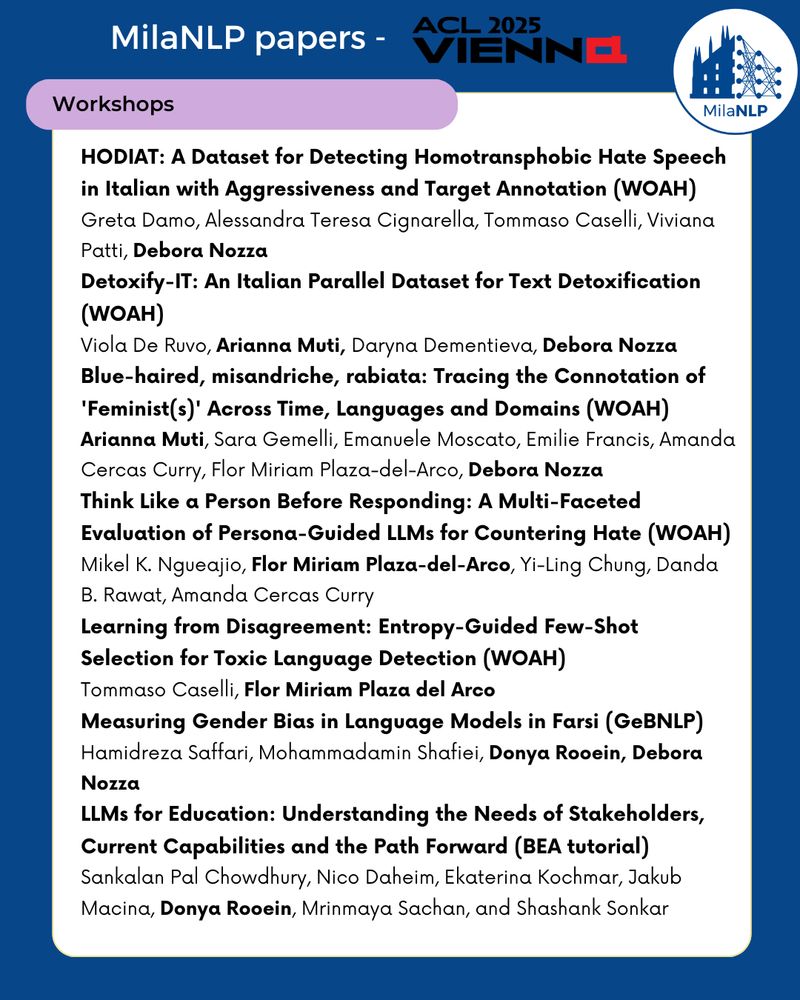

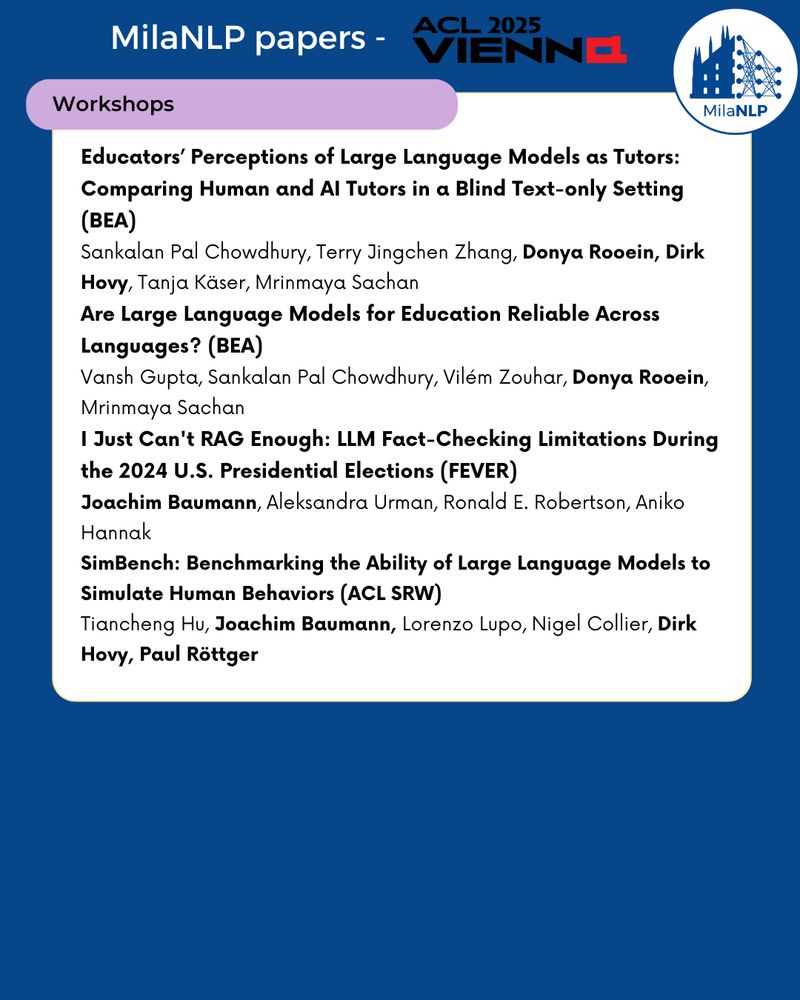

The @milanlp.bsky.social group is presenting 15 papers (+ a tutorial) at this year's #ACL2025, go check them out :)

bsky.app/profile/mila...

🎉 The @milanlp.bsky.social lab is excited to present 15 papers and 1 tutorial at #ACL2025 & workshops! Grateful to all our amazing collaborators, see everyone in Vienna! 🚀

July 29, 2025 at 12:13 PM

Shoutout to @tiancheng.bsky.social for yesterday's stellar presentation of our work benchmarking LLMs' ability to simulate group-level human behavior: bsky.app/profile/tian...

SimBench: Benchmarking the Ability of Large Language Models to Simulate Human Behaviors, SRW Oral, Monday, July 28, 14:00-15:30

July 29, 2025 at 12:11 PM

I'm at #ACL2025 this week: 📍 Find me at the FEVER workshop, *Thursday 11am* 📝 presenting: "I Just Can't RAG Enough" - our ongoing work with @aurman21.bsky.social & @rer.bsky.social & Anikó Hannák, showing that RAG does not solve LLM fact-checking limitations!

July 29, 2025 at 12:10 PM
