- Anne Collins (Professor @ucberkeleyofficial.bsky.social)

- Niels Leadholm (Research Manager @thousandbrains.org)

Things are heating up!!

See the flyer for details👇

🌎 sensorimotorai.github.io/debates/

🧠🤖🧠📈

➡️ This suggests we need to upgrade—or even replace—RL with a more general theory of active learning that's not solely reward-based

✅Which is why we are launching the RL Debates

More info: sensorimotorai.github.io/debates/

🧵[4/5]

🧠🤖🧠📈

This figure from Sutton & Barto's classic textbook summarizes the standard view👇

🤔 But what if the premise of an 'external, scalar reward' is a simplification we need to move past?

🧵[3/5]

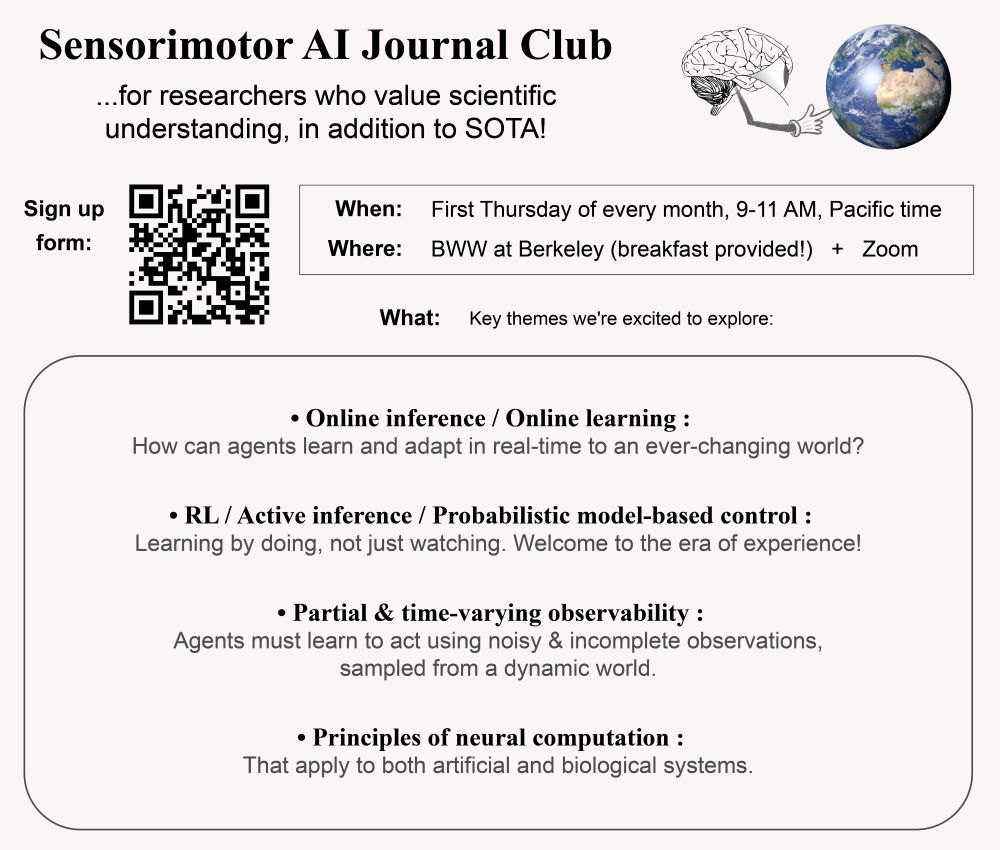
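Assuming the figure is the familiar agent-environment loop, here is a minimal sketch of that standard view in its simplest form: a two-armed bandit where the only learning signal is an external scalar reward. The class names, reward probabilities, and epsilon-greedy rule are hypothetical illustration choices. The incremental sample-average update is the one from the bandit chapter of Sutton & Barto.

```python
import random

class TwoArmedBandit:
    """Hypothetical toy environment: returns an external scalar reward."""
    def __init__(self, p_reward=(0.2, 0.8), seed=0):
        self.p = p_reward
        self.rng = random.Random(seed)

    def step(self, action):
        # Reward is 1.0 with probability p[action], else 0.0
        return 1.0 if self.rng.random() < self.p[action] else 0.0

class EpsilonGreedyAgent:
    """Learns action values purely from the scalar reward signal."""
    def __init__(self, n_actions=2, epsilon=0.1, seed=1):
        self.q = [0.0] * n_actions   # value estimates
        self.n = [0] * n_actions     # how often each action was taken
        self.eps = epsilon
        self.rng = random.Random(seed)

    def act(self):
        # Explore with probability epsilon, otherwise exploit the best estimate
        if self.rng.random() < self.eps:
            return self.rng.randrange(len(self.q))
        return max(range(len(self.q)), key=lambda a: self.q[a])

    def learn(self, action, reward):
        # Incremental sample average: Q <- Q + (R - Q) / n
        self.n[action] += 1
        self.q[action] += (reward - self.q[action]) / self.n[action]

env = TwoArmedBandit()
agent = EpsilonGreedyAgent()
for t in range(1000):
    a = agent.act()        # agent -> environment: action
    r = env.step(a)        # environment -> agent: scalar reward
    agent.learn(a, r)

print(agent.q, agent.n)
```

Everything the agent "knows" comes through that single number `r`, which is exactly the premise the thread questions.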

💬 Join us on Slack: join.slack.com/t/sensorimot...

💡 The main motivation: recognizing how central "action" is to all things intelligence

Perception, cognition, and knowledge are fundamentally intertwined with "action"

🧵[2/5]

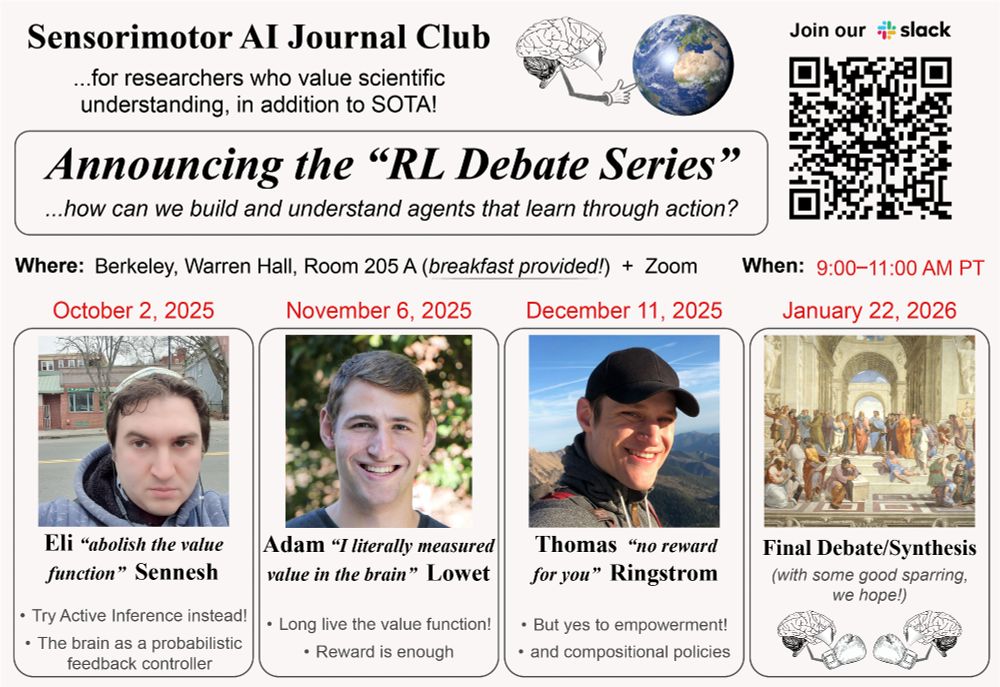

📢 To address these, the Sensorimotor AI Journal Club is launching the "RL Debate Series"👇

w/ @elisennesh.bsky.social, @noreward4u.bsky.social, @tommasosalvatori.bsky.social

🧵[1/5]

🧠🤖🧠📈

(...which we might as well just call the "AI Club for Non-conformists"👇😎)

📽️ full presentation: youtube.com/watch?v=efc7...

🧵[1/4]

🧠🤖🧠📈

w/ Kaylene Stocking, Tommaso Salvatori, and @elisennesh.bsky.social

Sign up link: forms.gle/o5DXD4WMdhTg...

More details below 🧵[1/5]

🧠🤖🧠📈

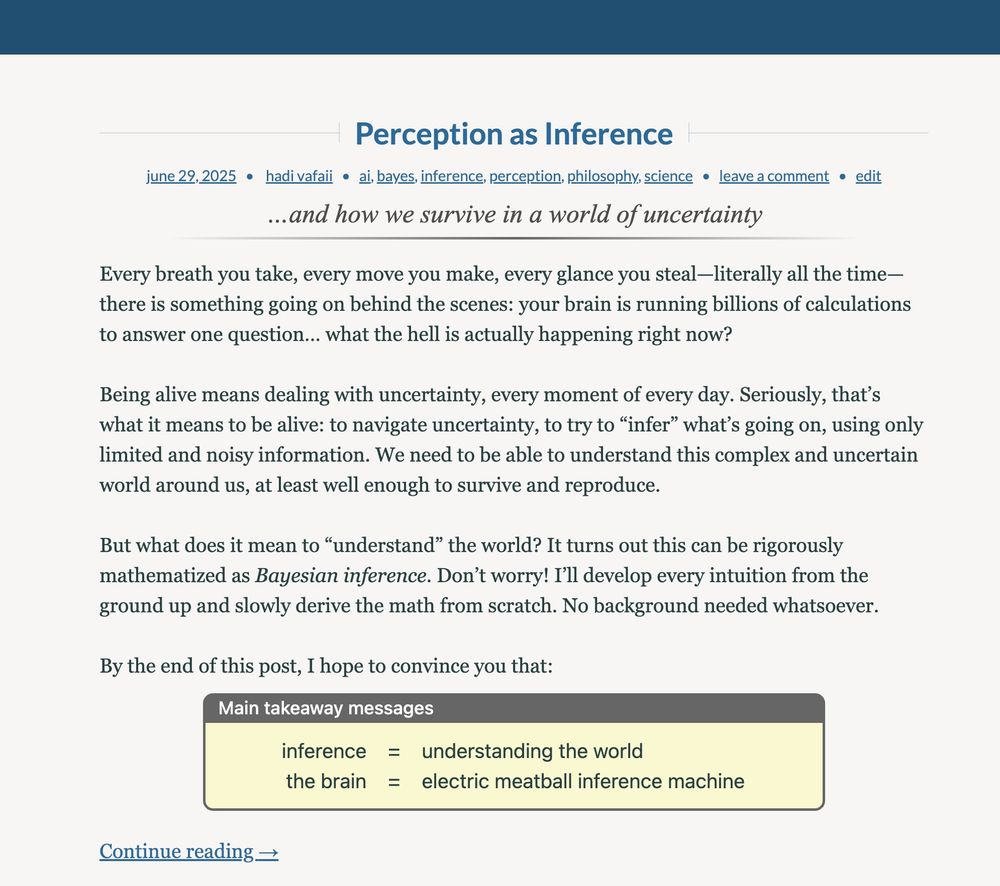

1⃣ introduce Bayes' theorem, and provide a visual proof

2⃣ apply it to explain both optical illusions and social delusions

But first, you need to read Part 1 as a prerequisite 🙂 Here's the link again: mysterioustune.com/2025/06/29/p...

[5/6]🧵

🧠🤖🧠📈
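A minimal sketch of Bayes' theorem over a discrete set of hypotheses, p(h | d) = p(d | h) p(h) / p(d). The shape-from-shading numbers are hypothetical, chosen only to show how a prior (here an assumed "light comes from above" bias folded into the likelihoods) can tip what we perceive; they are not taken from the blog post.

```python
def bayes_posterior(prior, likelihood):
    # Unnormalized posterior: p(d | h) * p(h) for every hypothesis h
    joint = {h: likelihood[h] * prior[h] for h in prior}
    # Evidence: p(d) = sum over h of p(d | h) * p(h)
    evidence = sum(joint.values())
    return {h: p / evidence for h, p in joint.items()}

# Hypothetical example: a disk that is brighter on top.
# Both shapes are equally likely a priori...
prior = {"convex": 0.5, "concave": 0.5}
# ...but "brighter on top" is far more probable for a convex bump
# under an assumed belief that light usually comes from above.
likelihood = {"convex": 0.9, "concave": 0.1}

post = bayes_posterior(prior, likelihood)
print(post)
```

The same observation can be "explained" by either hypothesis; the prior decides which one we end up seeing.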

🧠 Plato wrote about this back in 380 BC

✅ But now, we have the mathematics of Bayesian posterior inference to reason about this concept

If you’ve also wondered about this, you’re in good company. At least a 2,400-year-old company 😉

[4/6]🧵

💡But did you know John Locke first thought of this example way back in 1690?

Read my blog to learn about the rich history behind this idea.

[3/6]🧵

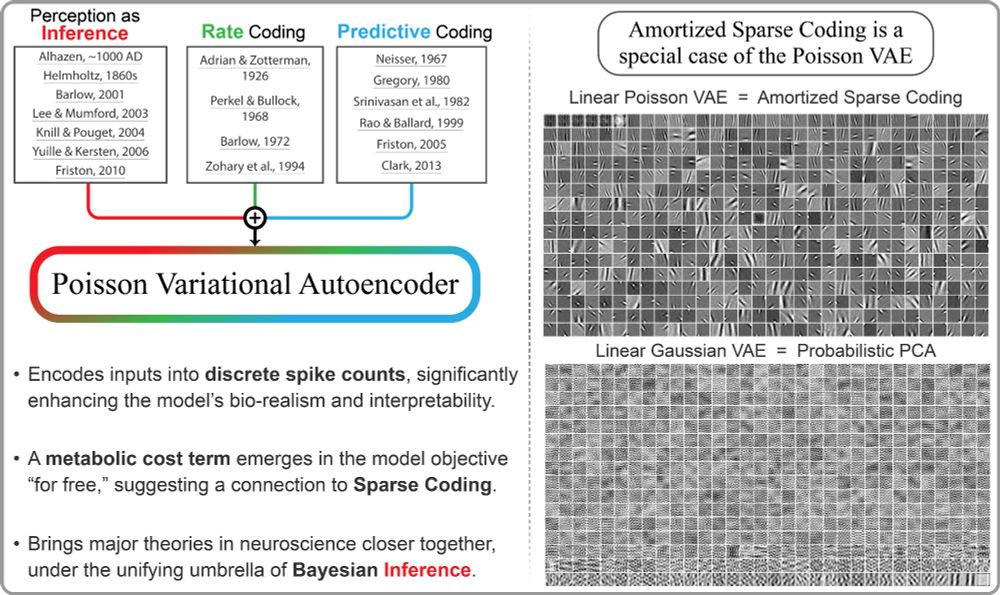

✅ Sparse Coding

✅ Predictive Coding

✅ Free Energy Principle

& more!

In my new blog post, I build the intuition behind this idea from the ground up 👉[1/6]🧵

🧠🤖🧠📈

✅The exp nonlinearity and emergent divisive normalization likely underlie iP-VAE's effectiveness — similar to xLSTM (openreview.net/forum?id=ARA...)

🧵[11/n]
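For intuition only, a minimal sketch of the canonical divisive normalization form (Carandini & Heeger), r_i = d_i^n / (σ^n + Σ_j d_j^n), fed by an exp nonlinearity. This is an assumed textbook form, not the normalization that emerges inside iP-VAE; the potentials `u`, `sigma`, and `n` are hypothetical values.

```python
import numpy as np

def divisive_normalization(drive, sigma=1.0, n=2.0):
    # r_i = d_i**n / (sigma**n + sum_j d_j**n)
    d = np.asarray(drive, dtype=float) ** n
    return d / (sigma ** n + d.sum())

# Hypothetical "membrane potentials" passed through an exp nonlinearity
u = np.array([0.5, 1.0, 2.0])
drive = np.exp(u)

responses = {c: divisive_normalization(c * drive) for c in (0.1, 1.0, 10.0)}
for c, r in responses.items():
    # Relative responses stay fixed while the total saturates below 1
    print(f"contrast={c:>5}: r={np.round(r, 3)}, total={r.sum():.3f}")
```

With `n=1` and an exp drive, the same expression becomes exp(u_i) / (σ + Σ_j exp(u_j)), a softmax with an extra constant in the denominator.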

For example, contrast-dependent response latency of V1 neurons (Carandini et al., 1997):

(compare to Fig. 3A here: doi.org/10.1523/jneu...)

🧵[9/n]

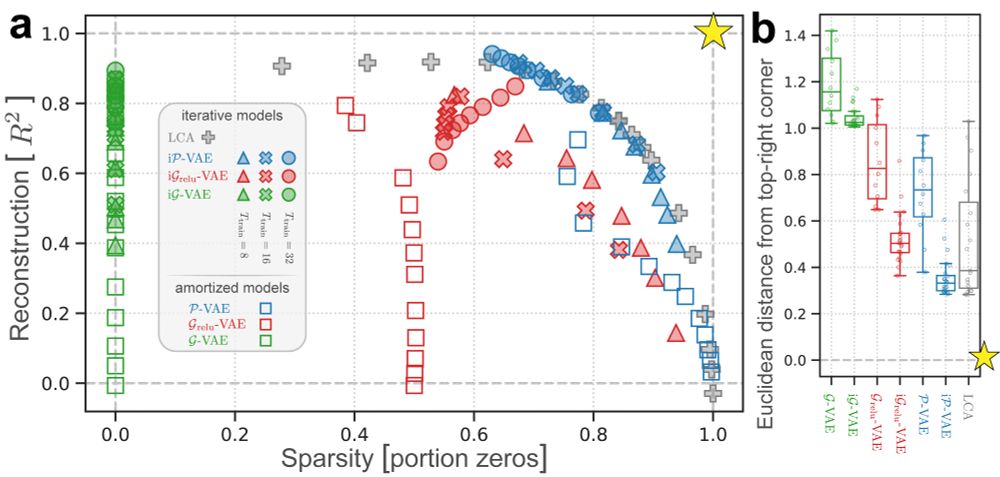

✅iP-VAE and LCA (a classic sparse coding algorithm) find the best overall reconstruction-sparsity trade-off

✅All iterative VAEs outperform their standard amortized counterparts (despite using 25x fewer parameters)

🧵[8/n]
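For readers unfamiliar with LCA, here is a minimal NumPy sketch of the standard Locally Competitive Algorithm dynamics (Rozell et al., 2008): feedforward drive, lateral inhibition between dictionary atoms, and a soft threshold, iterated until the code settles on a sparse solution of 0.5‖x − Φa‖² + λ‖a‖₁. The dictionary, signal, and the values of `lam`, `tau`, and `n_steps` are hypothetical; this is not the benchmark setup from the preprint.

```python
import numpy as np

def soft_threshold(u, lam):
    # Shrink potentials toward zero; values within [-lam, lam] become exactly 0
    return np.sign(u) * np.maximum(np.abs(u) - lam, 0.0)

def lca(x, Phi, lam=0.1, tau=10.0, n_steps=500):
    b = Phi.T @ x                              # feedforward drive
    G = Phi.T @ Phi - np.eye(Phi.shape[1])     # lateral inhibition between atoms
    u = np.zeros(Phi.shape[1])                 # internal "membrane potentials"
    for _ in range(n_steps):
        a = soft_threshold(u, lam)
        u += (b - u - G @ a) / tau             # Euler step with dt = 1
    return soft_threshold(u, lam)

rng = np.random.default_rng(0)
Phi = rng.standard_normal((16, 32))
Phi /= np.linalg.norm(Phi, axis=0)             # unit-norm dictionary atoms
a_true = np.zeros(32)
a_true[[3, 10, 25]] = [1.0, -0.8, 0.6]
x = Phi @ a_true                               # signal built from 3 atoms

a = lca(x, Phi)
print("nonzeros:", np.count_nonzero(a), "recon error:", np.linalg.norm(x - Phi @ a))
```

The trade-off in the post is visible here: raising `lam` gives sparser codes at the cost of reconstruction error.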

✅All models converge beyond their training regime (T_train = 16, T_test = 1000)

✅iP-VAE finds the best compromise between reconstruction fidelity and sparsity

🧵[7/n]

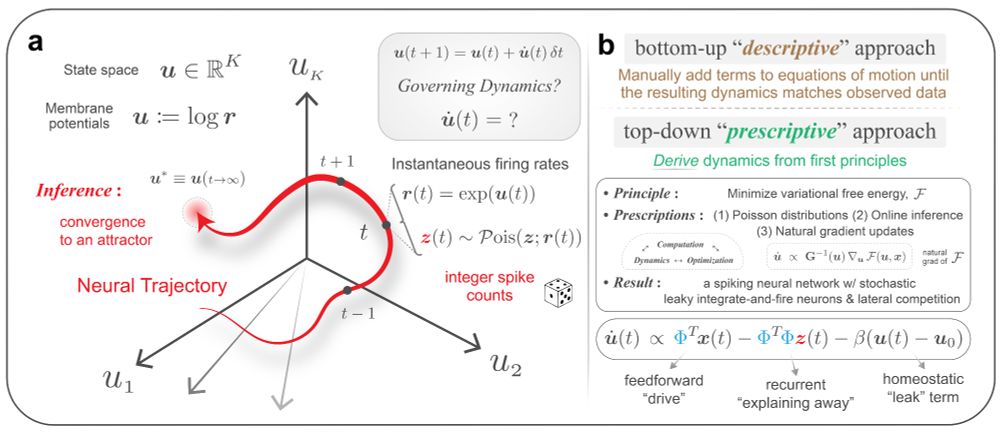

Let me emphasize:

✅ We "derived" this. Just from F minimization. Without putting any of those terms in there by hand.

🧵[6/n]

We need specific "prescriptions" to guide top-down algorithm development. Otherwise, we risk falling into this trap:

P.S. I recently learned this is called #Bayesplaining 🙂

🧵[4/n]

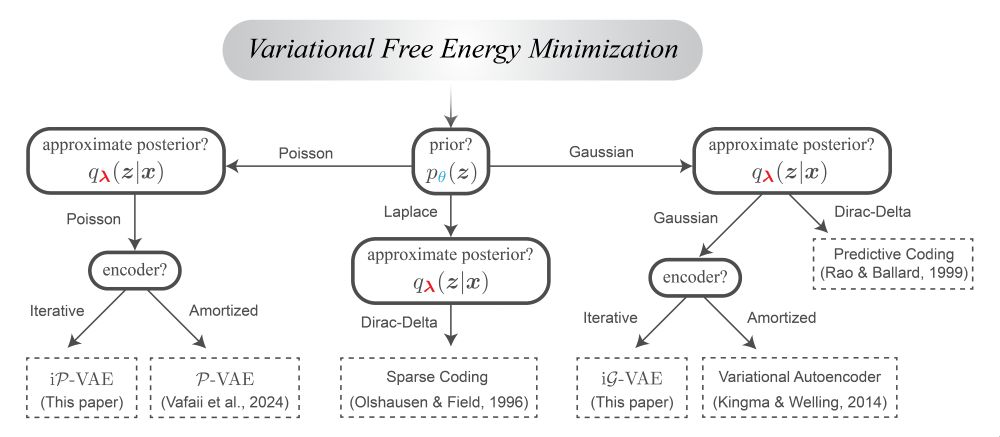

We start by recognizing the free energy (F) principle as our best candidate for a unified theory of biological and artificial intelligence.

Here's why👇

🧵[3/n]
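As a reference point, the variational free energy in its standard form is F(q) = E_q[log q(z) − log p(x, z)] = KL(q(z) ‖ p(z | x)) − log p(x), so minimizing F pulls q toward the true posterior and F bottoms out at −log p(x). The sketch below checks that identity on a hypothetical two-state model; the prior and likelihood values are made up for illustration.

```python
import math

# Hypothetical toy generative model: binary latent z, one fixed observation x
prior = {0: 0.7, 1: 0.3}   # p(z)
lik = {0: 0.2, 1: 0.9}     # p(x | z) evaluated at the observed x

def free_energy(q):
    # F(q) = E_q[log q(z) - log p(x, z)]
    return sum(q[z] * (math.log(q[z]) - math.log(lik[z] * prior[z]))
               for z in q if q[z] > 0)

def kl(q, p):
    # KL(q || p) for discrete distributions over the same keys
    return sum(q[z] * math.log(q[z] / p[z]) for z in q if q[z] > 0)

evidence = sum(lik[z] * prior[z] for z in prior)               # p(x)
posterior = {z: lik[z] * prior[z] / evidence for z in prior}    # p(z | x)

q = {0: 0.5, 1: 0.5}   # an arbitrary approximate posterior
print("F(q)              =", free_energy(q))
print("KL - log p(x)     =", kl(q, posterior) - math.log(evidence))
print("F(exact posterior) =", free_energy(posterior), "= -log p(x) =", -math.log(evidence))
```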

In a new preprint, we:

✅ "Derive" a spiking recurrent network from variational principles

✅ Show it does amazing things like out-of-distribution generalization

👉[1/n]🧵

w/ co-lead Dekel Galor & PI @jcbyts.bsky.social

🧠🤖🧠📈

From: openreview.net/forum?id=ekt...

We are each gifted a finite amount of time on this earth.

How will you spend yours? Chasing fleeting distractions? Or contributing to humanity's deepest, longest-running quest: minimizing our collective KL divergence?

Your choice.

🧵[15/n]

🧠🤖🧠📈 #AI

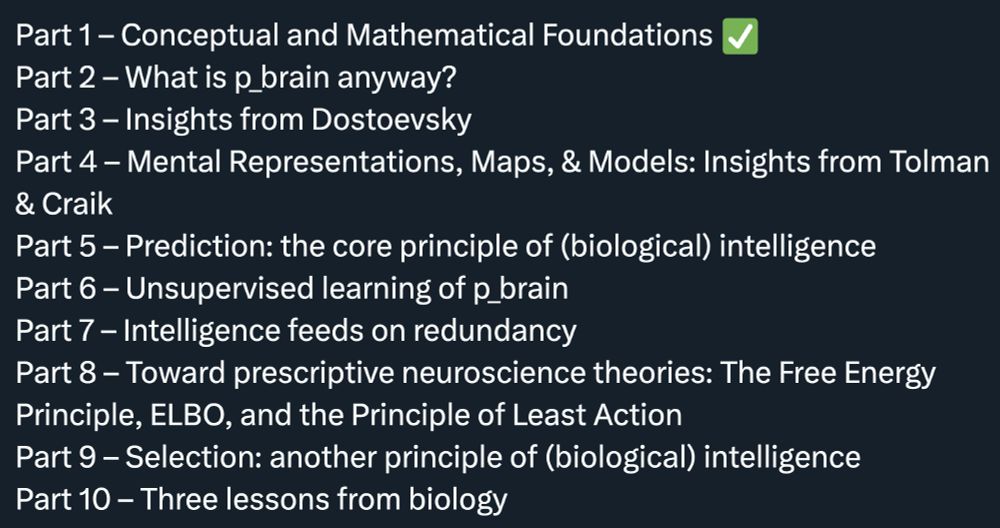

In that sense, all of science and philosophy merge into a single pursuit:

➡️ Humanity's collective KL minimization.

🧵[14/n]

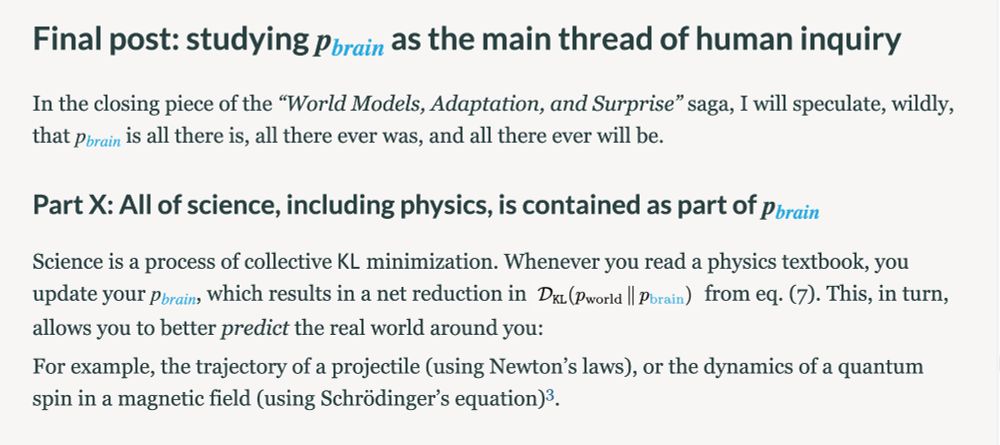

It turns out, I need 10 more parts to finish the full story arc. Here’s what I have in mind next:

🧵[13/n]