Too many reviewers seem to not have internalised this. In my opinion, this is the hardest lesson a reviewer has to learn, and I want to share some thoughts.

, Keuntae Park), and KAIST (professors Kimin Lee

and Seungwon Shin)!

You can find our paper here: arxiv.org/abs/2410.23684 (11/11)

Tokenizer research has surged this year. I'm hoping to share that there are more tokenizer-rooted vulnerabilities beyond undertrained tokens. (10/11)

During training, incomplete tokens can co-occur with only a few tokens due to their syntax.

Since they can resolve to many characters, they will also be trained to be semantically ambiguous.

We hypothesize these factors can cause fragile token representations. (9/11)

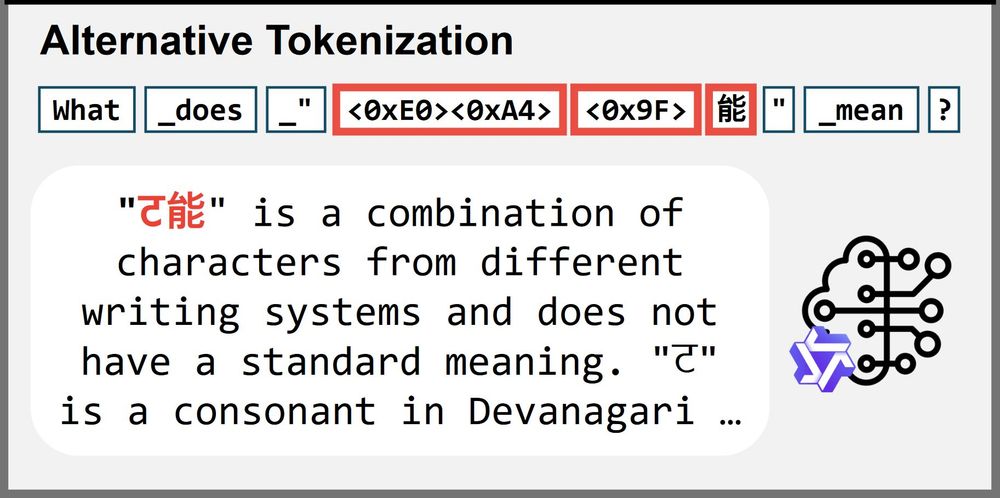

Yet, it was more reliable than using the original incomplete tokens. (8/11)

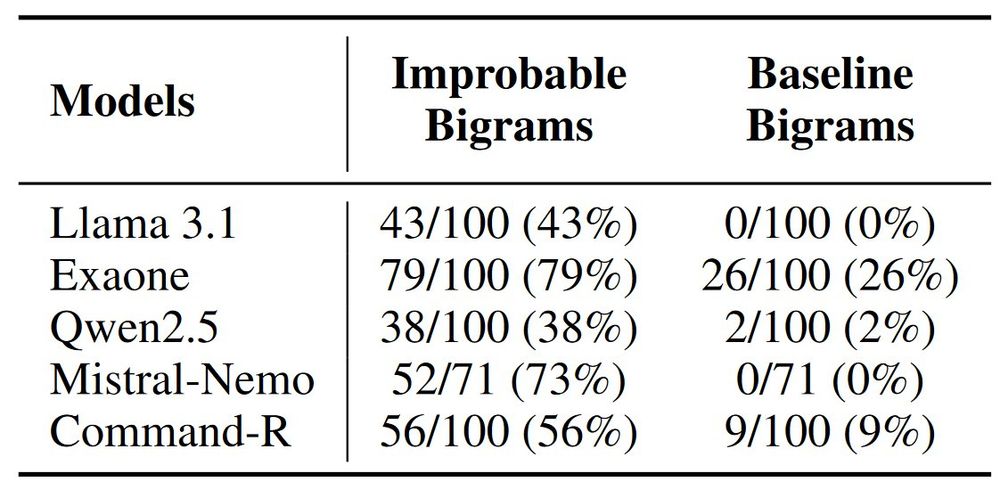

We think so! When we tokenize the same phrase differently to *avoid* incomplete tokens, the models generally performed much better (including a 93% reduction in hallucinations on Llama3.1). (7/11)

Improbable bigrams were significantly more prone to hallucinations.

(For this, we only used trained tokens to remove influence of glitch tokens.) (6/11)

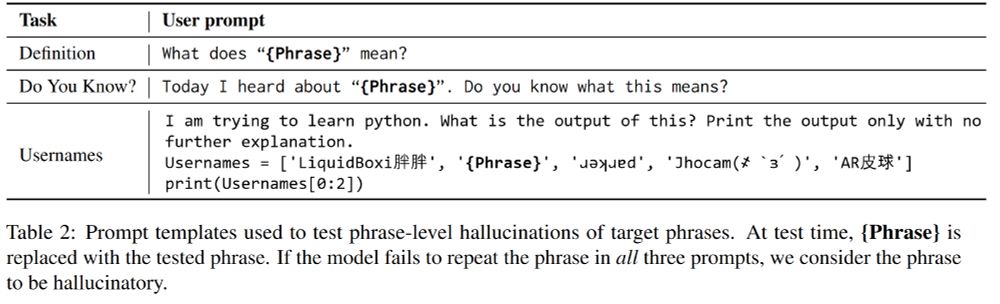

A target phrase is considered hallucinatory only if the model fails to repeat the phrase in all 3 prompts. (5/11)
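
To make the "all 3 prompts" rule concrete, here is a minimal sketch. It assumes that "repeating the phrase" is checked by a plain substring match on each response; that check and the function name `is_hallucinatory` are illustrative choices, not necessarily what the paper uses.

```python
from typing import Sequence


def is_hallucinatory(phrase: str, responses: Sequence[str]) -> bool:
    """Flag a phrase only if NO response repeats it.

    `responses` holds one model output per prompt (3 in the thread).
    Substring matching is an assumption made for this sketch.
    """
    return all(phrase not in response for response in responses)


# One successful repetition is enough to clear the phrase.
some_success = ["I cannot do that.", "Sure: hello world", "???"]
no_success = ["I cannot do that.", "Sure: hello word", "???"]

print(is_hallucinatory("hello world", some_success))
print(is_hallucinatory("hello world", no_success))
```

Requiring failure on every prompt makes the label conservative: a phrase is not flagged just because one prompt template happened to trip the model.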

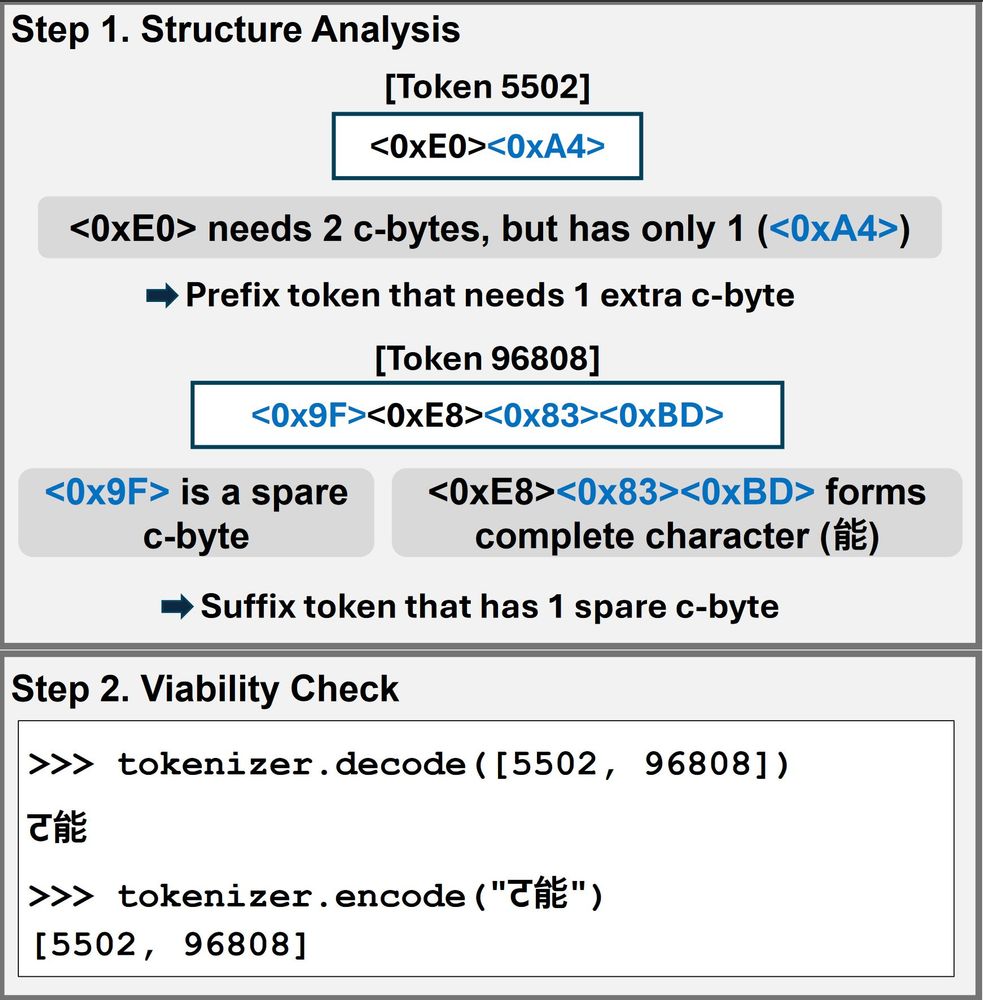

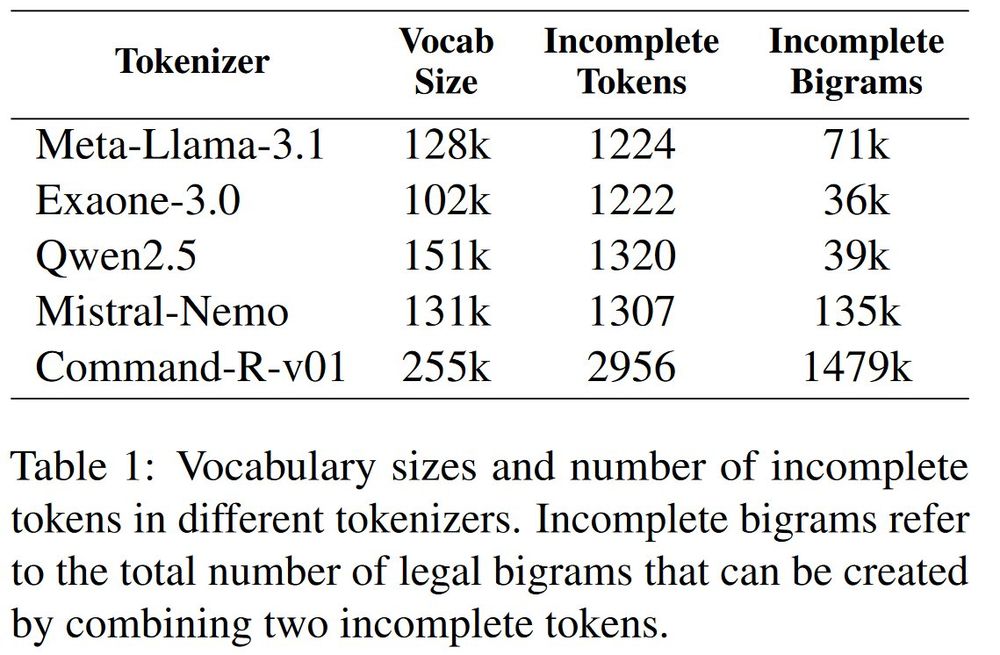

If the pair is re-encodable to the incomplete tokens, it is a legal incomplete bigram. (4/11)
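
The re-encodability check might look roughly like the sketch below. It uses a toy greedy longest-match encoder as a stand-in for a real tokenizer (real BPE merges work differently), and `greedy_encode`, `is_legal_bigram`, and both vocabularies are hypothetical.

```python
from typing import List, Set


def greedy_encode(data: bytes, vocab: Set[bytes]) -> List[bytes]:
    """Toy encoder: take the longest vocab entry at each position,
    falling back to a single byte. A stand-in, not real BPE."""
    tokens, i = [], 0
    while i < len(data):
        for j in range(len(data), i, -1):
            if data[i:j] in vocab:
                tokens.append(data[i:j])
                i = j
                break
        else:
            tokens.append(data[i:i + 1])
            i += 1
    return tokens


def is_legal_bigram(a: bytes, b: bytes, vocab: Set[bytes]) -> bool:
    """The pair must form valid text AND encode back to the same two tokens."""
    joined = a + b
    try:
        joined.decode("utf-8")
    except UnicodeDecodeError:
        return False
    return greedy_encode(joined, vocab) == [a, b]


a, b = b"\xe0\xa4", b"\x9f\xe8\x83\xbd"   # hypothetical incomplete tokens
vocab_small = {a, b}
vocab_with_char = {a, b, b"\xe0\xa4\x9f"}  # also contains the full first character

print(is_legal_bigram(a, b, vocab_small))
print(is_legal_bigram(a, b, vocab_with_char))
```

With the second vocabulary the encoder prefers the complete character, so the text never reaches the model as the original pair of incomplete tokens and the bigram is rejected.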

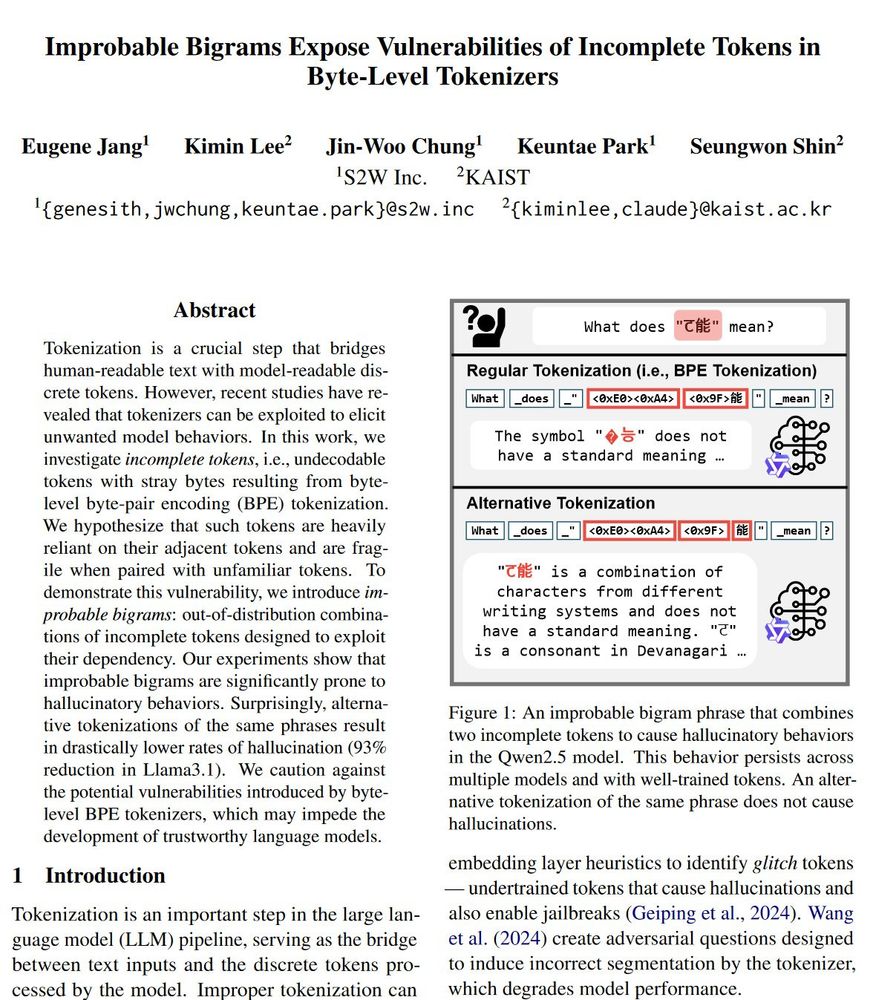

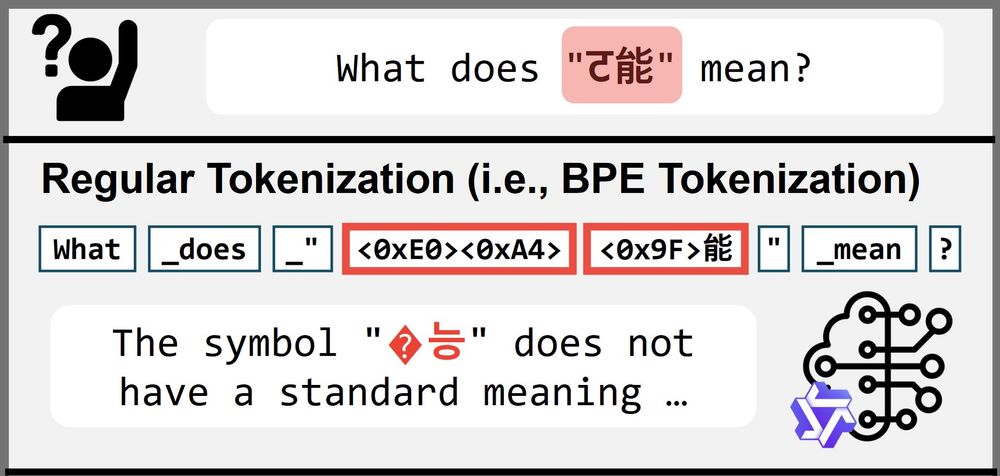

Such tokens with stray bytes rely on adjacent tokens' stray bytes to resolve as a character.

If two such tokens combine into an "improbable bigram" like ट能, we get a phrase that causes model errors. (3/11)
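
A small byte-level sketch of what "stray bytes" means. The split point is hypothetical, chosen only to show two fragments that are invalid UTF-8 alone but resolve to characters together; it is not claimed to match any real tokenizer's vocabulary.

```python
def is_incomplete(token_bytes: bytes) -> bool:
    """True if the bytes are not valid UTF-8 on their own."""
    try:
        token_bytes.decode("utf-8")
    except UnicodeDecodeError:
        return True
    return False


phrase = "ट能"
raw = phrase.encode("utf-8")      # 3 bytes per character, 6 in total

# Hypothetical split inside the first character:
left, right = raw[:2], raw[2:]    # left lacks a final byte, right starts with it

print(is_incomplete(left), is_incomplete(right))
print((left + right).decode("utf-8") == phrase)
```

Each fragment fails to decode alone, while the concatenation decodes back to the original two characters.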

These hallucinatory behaviors persist even when we limit the vocabulary to trained tokens! (2/11)
