@ Blei Lab & Columbia University.

Working on probabilistic ML | uncertainty quantification | LLM interpretability.

Excited about everything ML, AI and engineering!

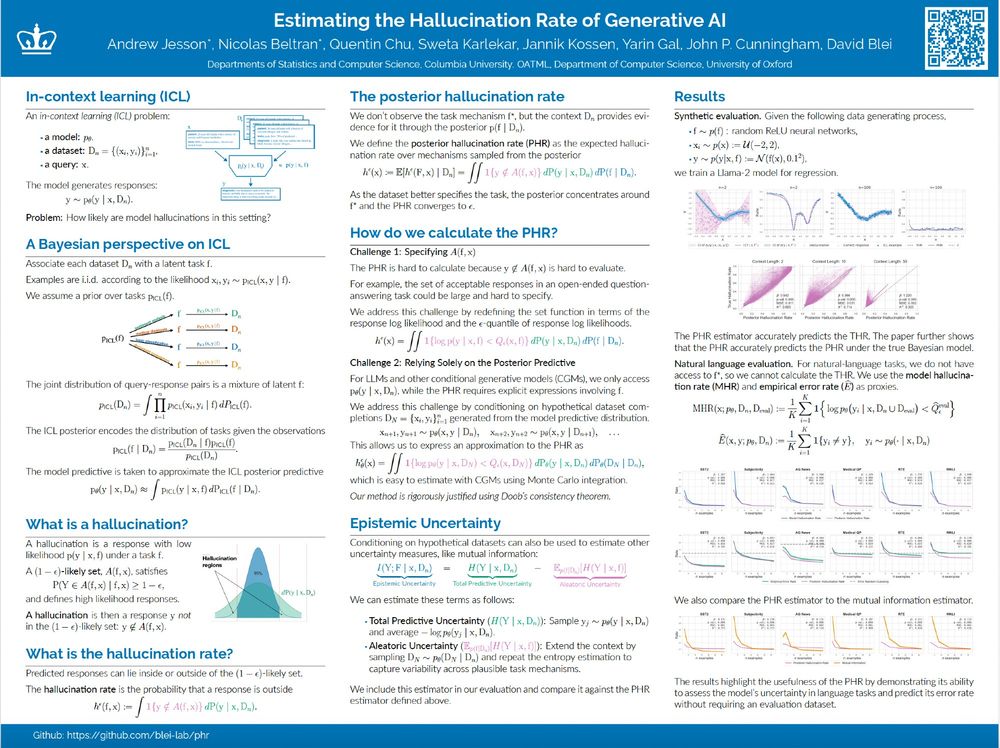

We will be presenting "Estimating the Hallucination Rate of Generative AI" at NeurIPS. Come by if you'd like to chat about epistemic uncertainty for in-context learning, or uncertainty more generally. :)

Location: East Exhibit Hall A-C #2703

Time: Friday @ 4:30

Paper: arxiv.org/abs/2406.07457

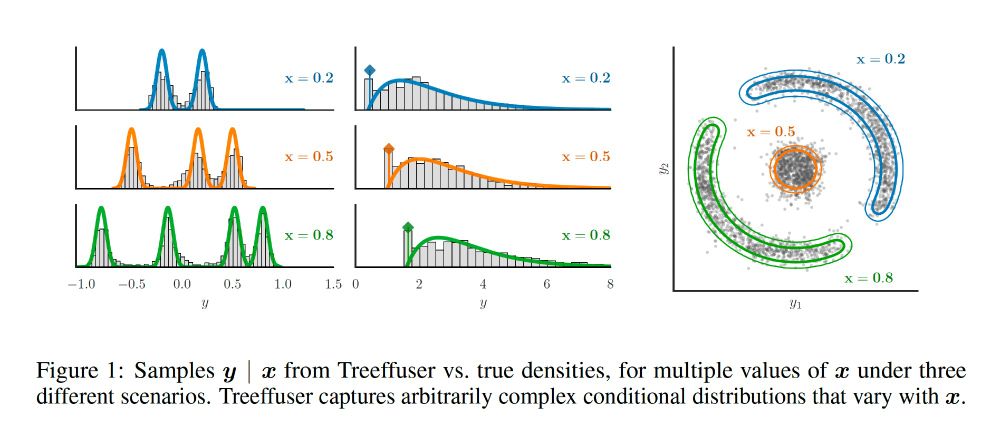

tinyurl.com/treeffuser-s...

(7/8)

paper: arxiv.org/abs/2406.07658

(6/8)

(5/8)

paper: arxiv.org/abs/2406.07658

repo: github.com/blei-lab/tre...

🧵(1/8)
