Follow me on X: https://x.com/MaziyarPanahi

BC4CHEMD, BC5CDR (chem + disease), BC2GM, JNLPBA, BioNLP 2013 CG, GELLUS, FSU, CLL, Anatomy (AnatEM), Linnaeus, Species‑800, NCBI‑Disease.

pick a size to match latency/accuracy needs and your deployment constraints.

🧵 (5/6)

domain‑adapted for clinical/biomedical text while keeping flexible zero‑shot behavior.

seamless with gliner and the @hf.co ecosystem.

🧵 (4/6)

define labels at inference, no retraining.

go from “disease” to “gene mutation” to “device” by changing the label list.

perfect for shifting schemas across hospitals, projects, and ontologies without new annotation cycles.

🧵 (3/6)
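A minimal sketch of what "changing the label list" can look like with the `gliner` Python package. The schema names, the sample sentence, and the checkpoint `urchade/gliner_medium-v2.1` are placeholders chosen for illustration, not the models from this thread; swap in whichever GLiNER-compatible checkpoint you actually use.

```python
from typing import Any, Dict, List

# Hypothetical label schemas: only the label strings differ between them.
SCHEMAS: Dict[str, List[str]] = {
    "clinical": ["disease"],
    "genomics": ["gene mutation"],
    "devices": ["device"],
}


def extract(model: Any, text: str, labels: List[str],
            threshold: float = 0.5) -> List[Dict[str, Any]]:
    """Run zero-shot NER; `labels` is supplied at call time, not at training time."""
    return model.predict_entities(text, labels, threshold=threshold)


def extract_per_schema(model: Any, text: str,
                       schemas: Dict[str, List[str]]) -> Dict[str, List[Dict[str, Any]]]:
    """Reuse one loaded model for every schema by swapping the label list."""
    return {name: extract(model, text, labels) for name, labels in schemas.items()}


def demo(checkpoint: str = "urchade/gliner_medium-v2.1") -> None:
    """Needs `pip install gliner`; the checkpoint name is a placeholder."""
    from gliner import GLiNER

    model = GLiNER.from_pretrained(checkpoint)
    text = ("The patient with a BRAF V600E mutation received a pacemaker "
            "after being diagnosed with heart failure.")
    for name, entities in extract_per_schema(model, text, SCHEMAS).items():
        print(name, [(e["text"], e["label"]) for e in entities])
```

Calling `demo()` downloads the checkpoint and prints the spans found under each schema; the model is loaded once and nothing is retrained between schemas.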

across 91 base→fine‑tuned pairs,

average F1 jumps from 0.519 → 0.809 (+0.290, ~80% relative).

consistent gains for chemicals, diseases, anatomy, genes/proteins, and oncology corpora.

🧵 (2/6)
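A quick worked check of the numbers above. The two averages come from the post; the thread does not say how the "~80% relative" figure was computed, so the per-pair reading below is only one possible interpretation, and `toy_pairs` is made-up data used to show that the two calculations can differ.

```python
base_f1 = 0.519   # average base-model F1, from the post
tuned_f1 = 0.809  # average fine-tuned F1, from the post

absolute_gain = tuned_f1 - base_f1
relative_gain_of_means = absolute_gain / base_f1

print(f"absolute gain: {absolute_gain:+.3f}")
print(f"relative gain of the averages: {relative_gain_of_means:.1%}")


def mean_relative_gain(pairs):
    """Average of per-pair relative gains; weak baselines weigh more here."""
    return sum((tuned - base) / base for base, tuned in pairs) / len(pairs)


# Made-up (base, tuned) pairs, NOT results from the thread.
toy_pairs = [(0.2, 0.6), (0.8, 0.9)]
print(f"toy mean of per-pair relative gains: {mean_relative_gain(toy_pairs):.1%}")
```

Dividing the difference of the two averages by the base average gives roughly 56%, so a figure near 80% would have to come from a different aggregation, such as averaging the relative gain of each of the 91 pairs.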

Apache‑2.0 licensed and ready to use

Built on GLiNER and covering 12+ biomedical datasets

🧵 (1/6)

Plus, they integrate seamlessly with Hugging Face and PyTorch.

iPhone is useless without developers' work. Stop taking money from developers; they already ensure your overpriced devices sell!

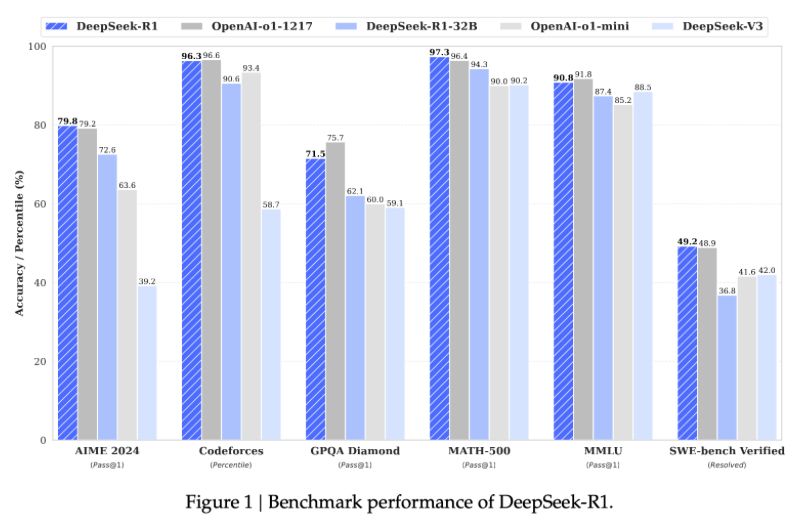

🚀 DeepSeek-R1’s journey highlights key challenges with PRM (Process Reward Models) and MCTS (Monte Carlo Tree Search). From annotation hurdles to scaling limits, the path to scalable AI reasoning is full of learnings.

#AI #ReinforcementLearning #rl

"Does Prompt Formatting Have Any Impact on LLM Performance?" - arxiv.org/pdf/2411.10541

• Gradient steps: 16

• Micro batch size: 4

• Gradient steps: 8

• Micro batch size: 3

• Gradient steps: 16

• Micro batch size: 3
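A small sketch of how these three settings compare, assuming "Gradient steps" means gradient accumulation steps and that each run used a single device. Neither assumption is stated in the posts, and `RunConfig` is a hypothetical helper, not part of any training library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunConfig:
    gradient_steps: int    # ASSUMED to be gradient accumulation steps
    micro_batch_size: int  # samples per forward/backward pass

    def effective_batch_size(self, num_devices: int = 1) -> int:
        """Samples contributing to one optimizer update."""
        return self.gradient_steps * self.micro_batch_size * num_devices


# The three settings listed above, in order.
CONFIGS = [RunConfig(16, 4), RunConfig(8, 3), RunConfig(16, 3)]

for cfg in CONFIGS:
    print(cfg, "->", cfg.effective_batch_size())
```

Under those assumptions the effective batch sizes are 64, 24, and 48 samples per optimizer update; pass a different `num_devices` if the runs were data-parallel.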

My 3B LLM collection on @huggingface.bsky.social:

• 3 new instruction-tuned models

• 3 French language models (Project Baguette)

• 6 specialized French legal models (LoiLlama & LoiQwen)

• 54M-token French legal synthetic dataset
