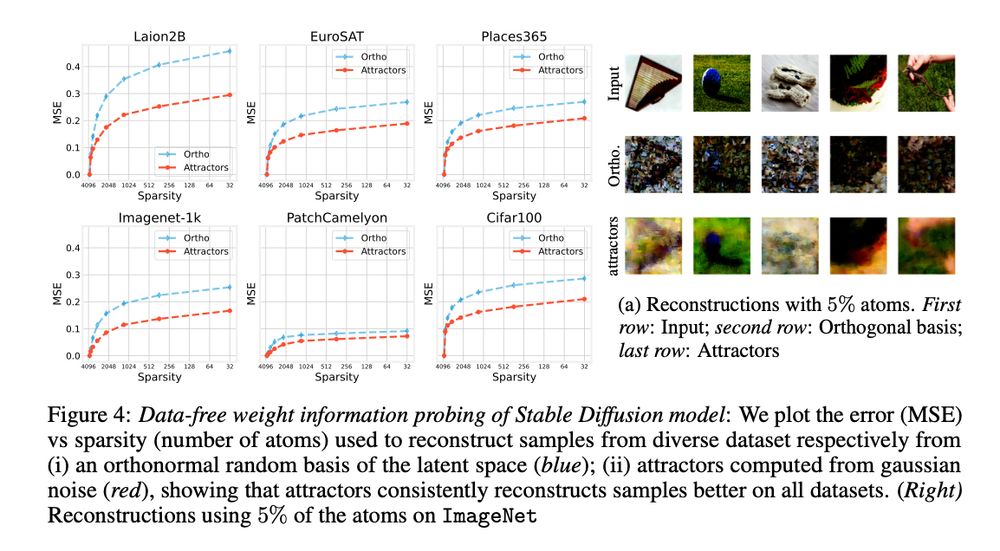

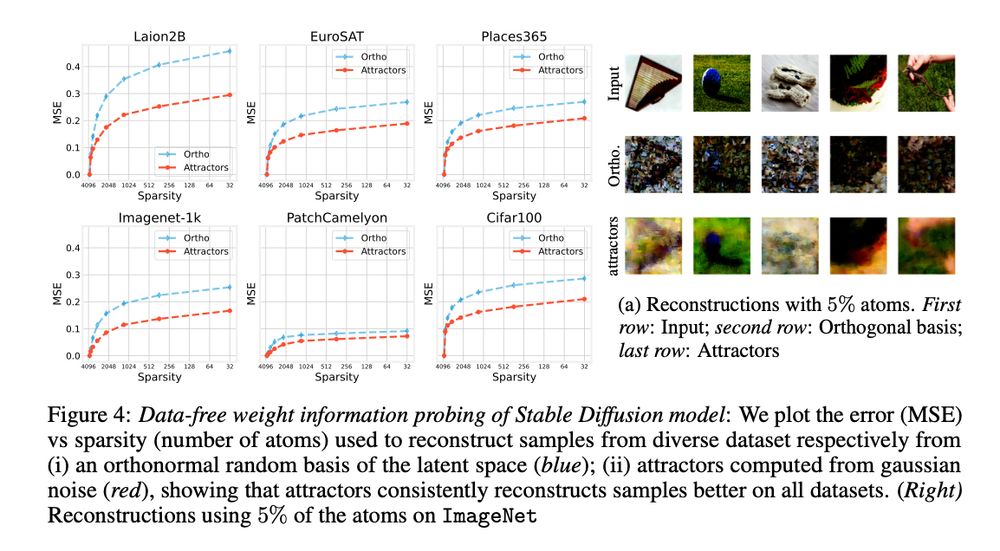

This representation retains properties of the model, revealing memorization and generalization regimes and characterizing distribution shifts.

📜: arxiv.org/abs/2505.22785

(4/N)

(3/N)

(2/N)

We show how neural models can be aligned by matching function spaces on representation manifolds, providing a unified framework for model comparison, matching, and information transfer.

📜: arxiv.org/abs/2406.14183

👇🧵
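To make "matching function spaces on representation manifolds" a bit more concrete, here is a minimal sketch of the classical functional-maps recipe, not the paper's own method. It builds a kNN graph Laplacian on each set of representations, takes its low-frequency eigenvectors as a basis for functions on that manifold, and fits a small matrix `C` that maps function coefficients from one space to the other. The Gaussian kNN graph, the anchor-distance descriptors, the known anchor correspondences, and every function name here are assumptions made for illustration.

```python
import numpy as np

def knn_laplacian(X, k=10):
    """Normalized Laplacian L = I - D^{-1/2} W D^{-1/2} of a Gaussian-weighted kNN graph (assumed construction)."""
    n = X.shape[0]
    sq = np.sum(X**2, axis=1)
    d2 = np.maximum(sq[:, None] + sq[None, :] - 2.0 * X @ X.T, 0.0)  # squared distances
    np.fill_diagonal(d2, np.inf)                  # exclude self-loops from the neighbor search
    nbrs = np.argsort(d2, axis=1)[:, :k]          # k nearest neighbors of each point
    rows = np.repeat(np.arange(n), k)
    nd2 = d2[rows, nbrs.ravel()]
    sigma2 = np.median(nd2)                       # bandwidth heuristic (an assumption)
    W = np.zeros((n, n))
    W[rows, nbrs.ravel()] = np.exp(-nd2 / sigma2)
    W = np.maximum(W, W.T)                        # symmetrize the kNN graph
    d_inv_sqrt = 1.0 / np.sqrt(W.sum(axis=1))
    return np.eye(n) - d_inv_sqrt[:, None] * W * d_inv_sqrt[None, :]

def spectral_basis(X, n_eig=20, k=10):
    """Eigenvectors of the graph Laplacian with the n_eig smallest eigenvalues, as columns."""
    _, evecs = np.linalg.eigh(knn_laplacian(X, k))  # eigh returns ascending eigenvalues
    return evecs[:, :n_eig]

def anchor_distances(X, anchors):
    """Descriptor functions on the manifold: distance from every point to each anchor."""
    return np.linalg.norm(X[:, None, :] - X[anchors][None, :, :], axis=2)

def functional_map(Phi_x, Phi_y, F_x, F_y):
    """Least-squares C such that C @ (Phi_x.T @ F_x) ≈ Phi_y.T @ F_y."""
    A = Phi_x.T @ F_x                             # descriptor coefficients in X's basis
    B = Phi_y.T @ F_y                             # descriptor coefficients in Y's basis
    Ct, *_ = np.linalg.lstsq(A.T, B.T, rcond=None)  # solves A.T @ C.T = B.T
    return Ct.T

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 16))                    # hypothetical representations from model A
R, _ = np.linalg.qr(rng.normal(size=(16, 16)))    # random orthogonal matrix
Y = X @ R                                         # hypothetical model B: same samples, rotated space
anchors = np.arange(40)                           # assumed known correspondences (rows 0..39)

Phi_x, Phi_y = spectral_basis(X), spectral_basis(Y)
C = functional_map(Phi_x, Phi_y, anchor_distances(X, anchors), anchor_distances(Y, anchors))

# Transfer a function defined on X's samples to Y through the two spectral bases.
f_x = X[:, 0]
f_y = Phi_y @ C @ Phi_x.T @ f_x
```

In this toy case the two spaces differ only by a rotation, so their kNN graphs coincide and `C` comes out orthogonal (it only absorbs eigenvector sign flips and rotations within repeated eigenvalues). With real models the spaces are not isometric, `C` is no longer orthogonal, and how far it deviates is one way to compare them.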
