https://jorge-a-mendez.github.io

LLMs are more promising as fast "idea generators", where a formal planner verifies their output.

Paper: arxiv.org/abs/2510.001...

Code & Data: github.com/jorge-a-mend...

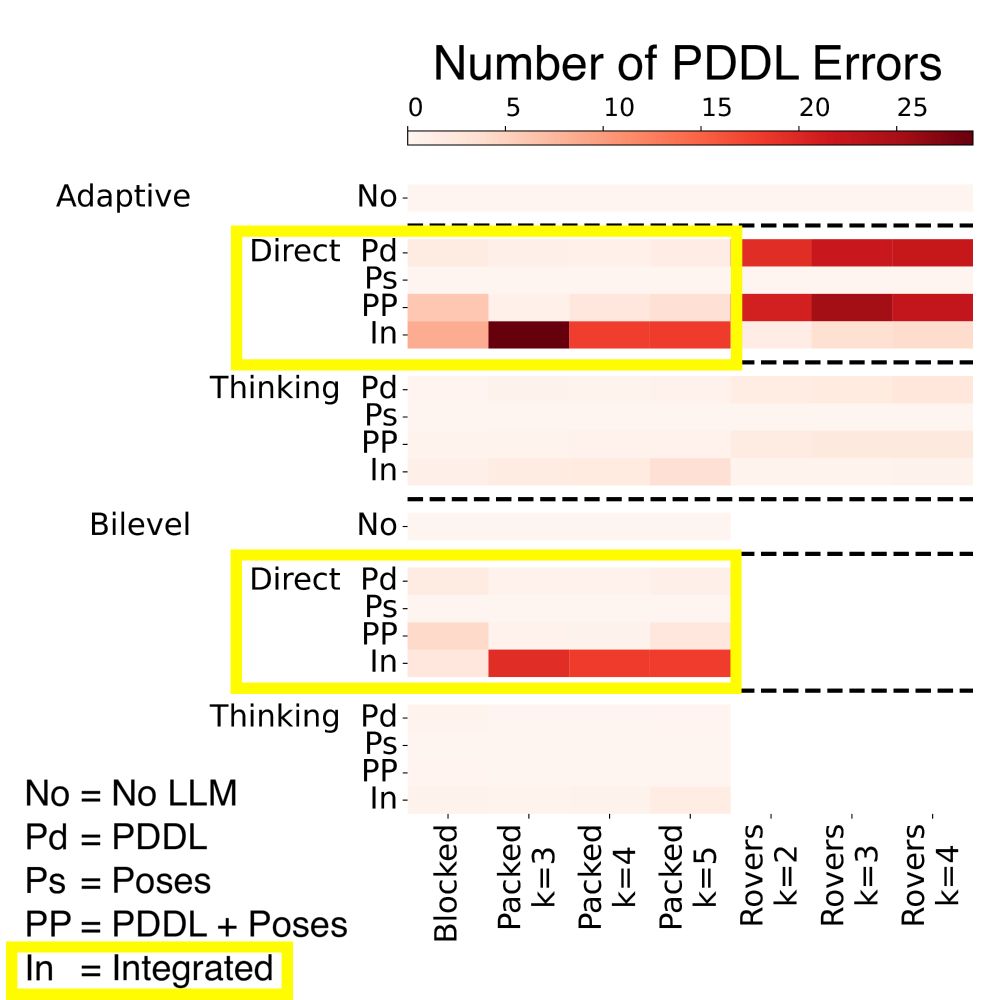

When the prompt included geometric details, the LLM made more PDDL errors. The extra info "distracts" the model from the logical constraints. These systems are sensitive to prompt engineering!

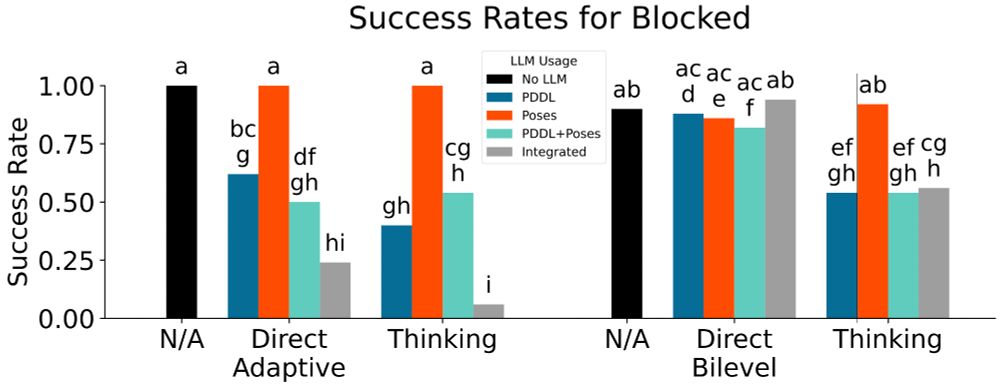

"Thinking" isn't always better! Non-reasoning LLMs outperformed reasoning ones.

Why? It's more efficient for the LLM to generate plans quickly and have a formal TAMP system verify and correct them.
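
A rough sketch of that "propose fast, verify formally" pattern, not the paper's implementation. Everything here is a hypothetical stand-in: the hard-coded `candidates` list plays the role of quick LLM proposals, `verify` is a toy symbolic checker rather than a TAMP system or the PDDLStream API, and the loop only filters plans (it does not correct them).

```python
from typing import Callable, Iterable, Optional

# A plan is a list of (action, object) steps. All names are hypothetical.
Plan = list[tuple[str, str]]


def verify(plan: Plan, goal_on_table: set[str]) -> bool:
    """Toy stand-in for a formal checker: simulate the plan symbolically.

    Assumed toy domain: objects start in a bin, the gripper holds at most
    one object, and the goal is a set of objects that must end on the table.
    """
    holding: Optional[str] = None
    on_table: set[str] = set()
    for action, obj in plan:
        if action == "pick":
            # Precondition: empty gripper and the object is still in the bin.
            if holding is not None or obj in on_table:
                return False
            holding = obj
        elif action == "place":
            # Precondition: the gripper holds exactly this object.
            if holding != obj:
                return False
            on_table.add(obj)
            holding = None
        else:
            return False  # unknown action
    return holding is None and goal_on_table <= on_table


def propose_and_verify(
    candidates: Iterable[Plan], check: Callable[[Plan], bool]
) -> Optional[Plan]:
    """Return the first candidate that passes the checker, else None."""
    for plan in candidates:
        if check(plan):
            return plan
    return None


# Hard-coded candidates standing in for fast, unverified LLM proposals.
candidates: list[Plan] = [
    [("place", "a")],                 # invalid: nothing is held
    [("pick", "a"), ("pick", "b")],   # invalid: gripper already full
    [("pick", "a"), ("place", "a"), ("pick", "b"), ("place", "b")],
]

result = propose_and_verify(candidates, lambda p: verify(p, {"a", "b"}))
print(result)
```

Cheap proposals can be wrong often, as long as the checker rejects every invalid one before anything is executed.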

Excited to share our preprint, "A Systematic Study of Large Language Models for Task and Motion Planning With PDDLStream"!

16 LLM planners using Gemini, 4950 TAMP problems.

🧵👇
