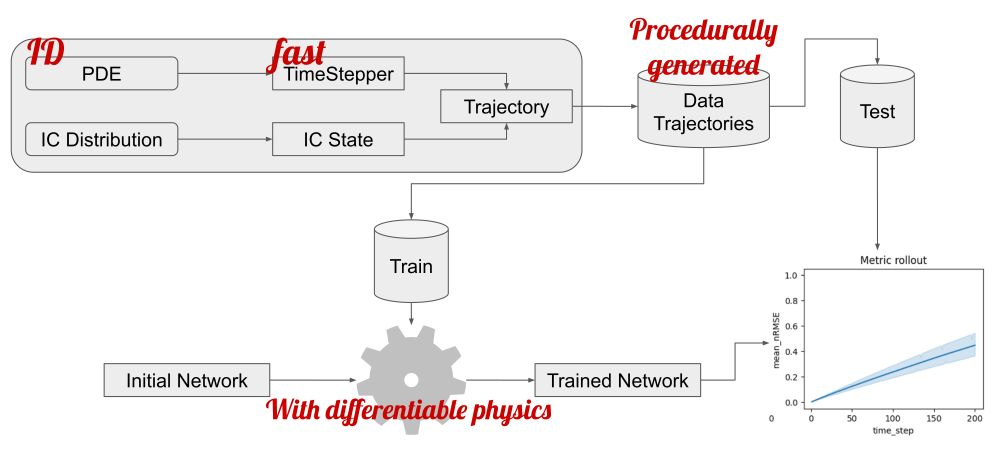

📃 Paper: arxiv.org/pdf/2510.23111

Feel free to stop by at NeurIPS in San Diego during the poster session on Friday evening, 4:30 to 7:30 p.m. PST, at #2106. 😊 I am looking forward to the exchange!

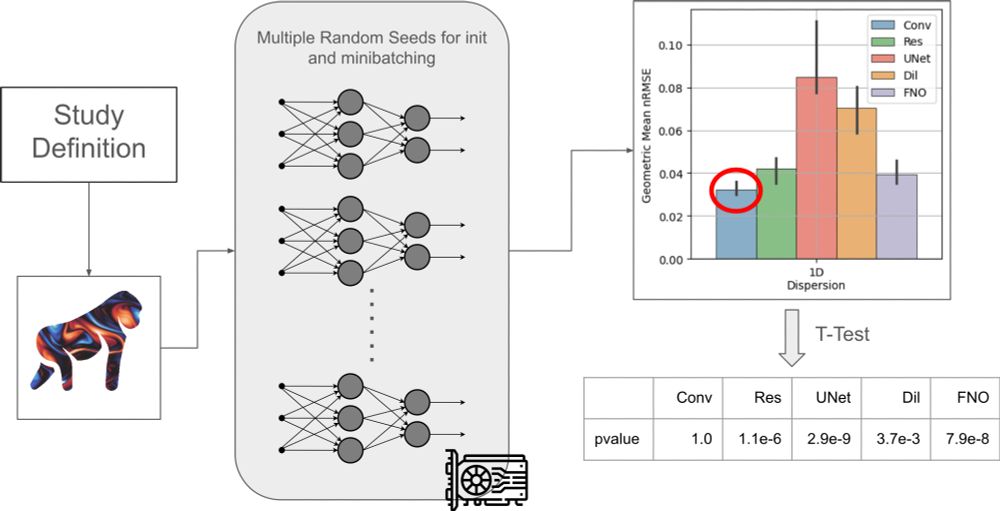

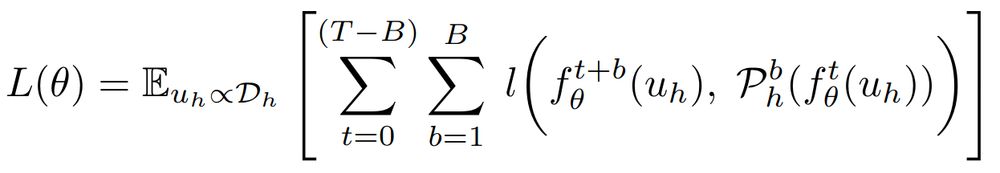

At #EurIPS we are looking forward to welcoming presentations of all accepted NeurIPS papers, including a new “Salon des Refusés” track for papers which were rejected due to space constraints!

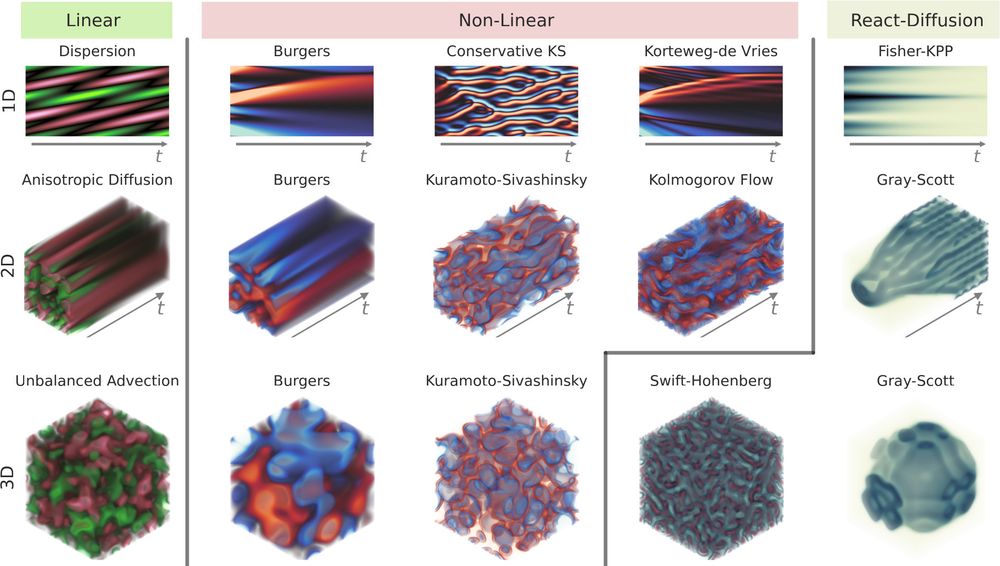

arxiv.org/abs/2411.00180
