It still likes primate brains too much, apparently.

I thought it was corrected in 3. Apparently not. Good: there is still a long way to go before it can fool scientists with good anatomical knowledge.

Imo, the area locations were consistent with this image, weren't they?

Well, clearly Gemini 3 is still strongly drawn to human or primate brains. The teeth look better, though.

My knowledge of mouse anatomy is quite limited, as you can see. The location of the cortices looked good to me. Any opinion about that?

Doing figures will never be the same.

I used nano banana to make scientific figures. With 2.5 it was repeatedly putting a human brain inside the 🐭 head.

Now it draws accurate mouse brain anatomy and even seems to locate the cortical areas correctly. Big jump imo.

Austrian night trains are amazing. I tested the new mini-sleeper cabin (economy class).

5 ⭐ sleeping experience, but a bit small to eat the (included!) breakfast 🍞 ☕

Here is a toy math model: a 2-area system can be feedforward or recurrent (hypothetical mechanism 1 or 2) and produce the same activity distribution.

With an opto inactivation you separate the two hypotheses right away. Is that convincing?
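
To make the argument concrete, here is a minimal sketch of one such toy model. It assumes a linear two-area system at steady state with independent Gaussian noise per area; the weights, the noise variances, and the helper names are all hypothetical choices for illustration, not the exact model behind the post. The recurrent weights and noise are solved so that both mechanisms give the same covariance, and "inactivation" is modeled by clamping area 2 to zero.

```python
import numpy as np

def stationary_cov(W, noise_var):
    """Covariance of x solving x = W x + noise, with independent noise per area."""
    A = np.linalg.inv(np.eye(2) - W)
    return A @ np.diag(noise_var) @ A.T

# Mechanism 1 (feedforward): area 1 drives area 2, no feedback.
a = 0.8                                   # hypothetical forward weight
W_ff = np.array([[0.0, 0.0], [a, 0.0]])   # W[i, j] = weight from area j+1 to area i+1
noise_ff = np.array([1.0, 0.5])
cov_target = stationary_cov(W_ff, noise_ff)

# Mechanism 2 (recurrent): add feedback b from area 2 to area 1, then solve
# for the forward weight and noise variances that reproduce cov_target.
b = 0.3                                   # hypothetical feedback weight
p, c, q = cov_target[0, 0], cov_target[0, 1], cov_target[1, 1]
a_rec = (c - b * q) / (p - b * c)         # makes the two noise sources uncorrelated
W_rec = np.array([[0.0, b], [a_rec, 0.0]])
noise_rec = np.array([p - 2 * b * c + b**2 * q,
                      a_rec**2 * p - 2 * a_rec * c + q])

def var_area1_when_area2_silenced(W, noise_var):
    """Variance of area 1 when area 2 is clamped to zero (simulated inactivation)."""
    # With x2 = 0, area 1 obeys x1 = W[0, 0] * x1 + noise_1.
    return noise_var[0] / (1.0 - W[0, 0]) ** 2

print("same covariance:", np.allclose(stationary_cov(W_rec, noise_rec), cov_target))
print("area 1 variance, feedforward, area 2 silenced:",
      var_area1_when_area2_silenced(W_ff, noise_ff))
print("area 1 variance, recurrent, area 2 silenced:",
      var_area1_when_area2_silenced(W_rec, noise_rec))
```

With these numbers, both mechanisms are indistinguishable from the unperturbed activity, but silencing area 2 leaves the variance of area 1 at 1.0 in the feedforward model and drops it to about 0.62 in the recurrent one.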

This is bc, mathematically, the effect of μ-perturbations is one Taylor expansion away from RNN grads. So, if the RNN is robust, the grads of the RNN approximate the grads in the recorded circuit. Cool!

6/8
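
A rough numerical illustration of the Taylor-expansion argument, under assumptions that are mine and not from the paper: a tiny tanh RNN, a linear readout standing in for behavior, and a small perturbation added to the input at the first time step. The sketch checks that the measured change in the readout matches the first-order prediction, gradient times perturbation.

```python
import numpy as np

rng = np.random.default_rng(0)
n, T = 5, 4                        # hypothetical sizes: 5 units, 4 time steps
W = 0.5 * rng.standard_normal((n, n)) / np.sqrt(n)
bias = 0.1 * rng.standard_normal(n)
r = rng.standard_normal(n)         # linear readout standing in for "behavior"

def simulate(delta):
    """Run the RNN with a perturbation `delta` added to the input at t = 0."""
    h = np.zeros(n)
    states = []
    for t in range(T):
        drive = bias + (delta if t == 0 else 0.0)
        h = np.tanh(W @ h + drive)
        states.append(h)
    return r @ h, states

def grad_wrt_perturbation():
    """Backpropagate the readout through time to the input at t = 0."""
    _, states = simulate(np.zeros(n))
    g = r.copy()                            # d readout / d final hidden state
    for t in range(T - 1, -1, -1):
        d = g * (1.0 - states[t] ** 2)      # through tanh: grad wrt pre-activation
        g = W.T @ d                         # grad wrt the previous hidden state
    return d                                # grad wrt the pre-activation at t = 0

delta = 1e-5 * rng.standard_normal(n)       # a small "μ-perturbation"
measured = simulate(delta)[0] - simulate(np.zeros(n))[0]
predicted = grad_wrt_perturbation() @ delta  # first-order Taylor term
print("measured effect:", measured)
print("gradient prediction:", predicted)
```

The two numbers agree up to second-order terms in the perturbation size, which is the sense in which a small perturbation effect is "one Taylor expansion away" from a gradient.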

We simulate a read-write opto experiment in which a robust RNN is used to target optimal μ-perturbations and change simulated mouse behavior in real time.

(We also think it's a bit crazy... but it works in simulation)

5/8

The results are consistent with the artificial data. Dale's law, local inhibition (and spikes) make the model more robust.

4/8

Empirically, the features that improve robustness the most are:

- Dale's law: outgoing weights of E/I neurons are +/-

- Local inhibition: I neurons do not project to other areas

Other features improve less:

- Replacing σ with spikes

- Sparsity prior

3/8
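
For readers who want to see what the two strongest constraints look like on a weight matrix, here is a minimal sketch. It assumes the convention `W[i, j]` = weight from neuron `j` to neuron `i`, and the function name, the neuron labels, and the two-area layout are hypothetical; the paper's actual parameterization may differ.

```python
import numpy as np

def constrain_weights(W_raw, is_excitatory, area):
    """Apply Dale's law and local inhibition to a raw weight matrix.

    W_raw[i, j] is the unconstrained weight from neuron j to neuron i.
    """
    # Dale's law: every outgoing weight of a neuron shares that neuron's sign.
    sign = np.where(is_excitatory, 1.0, -1.0)
    W = np.abs(W_raw) * sign[np.newaxis, :]
    # Local inhibition: I neurons may only target neurons in their own area.
    same_area = area[:, np.newaxis] == area[np.newaxis, :]
    allowed = is_excitatory[np.newaxis, :] | same_area
    return W * allowed

rng = np.random.default_rng(0)
is_excitatory = np.array([True, True, False, True, True, False])
area = np.array([0, 0, 0, 1, 1, 1])        # two areas, 2 E + 1 I neurons each
W = constrain_weights(rng.standard_normal((6, 6)), is_excitatory, area)
print(np.round(W, 2))
```

In the printed matrix, excitatory columns are non-negative, inhibitory columns are non-positive, and the inhibitory columns are zero in the rows belonging to the other area.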

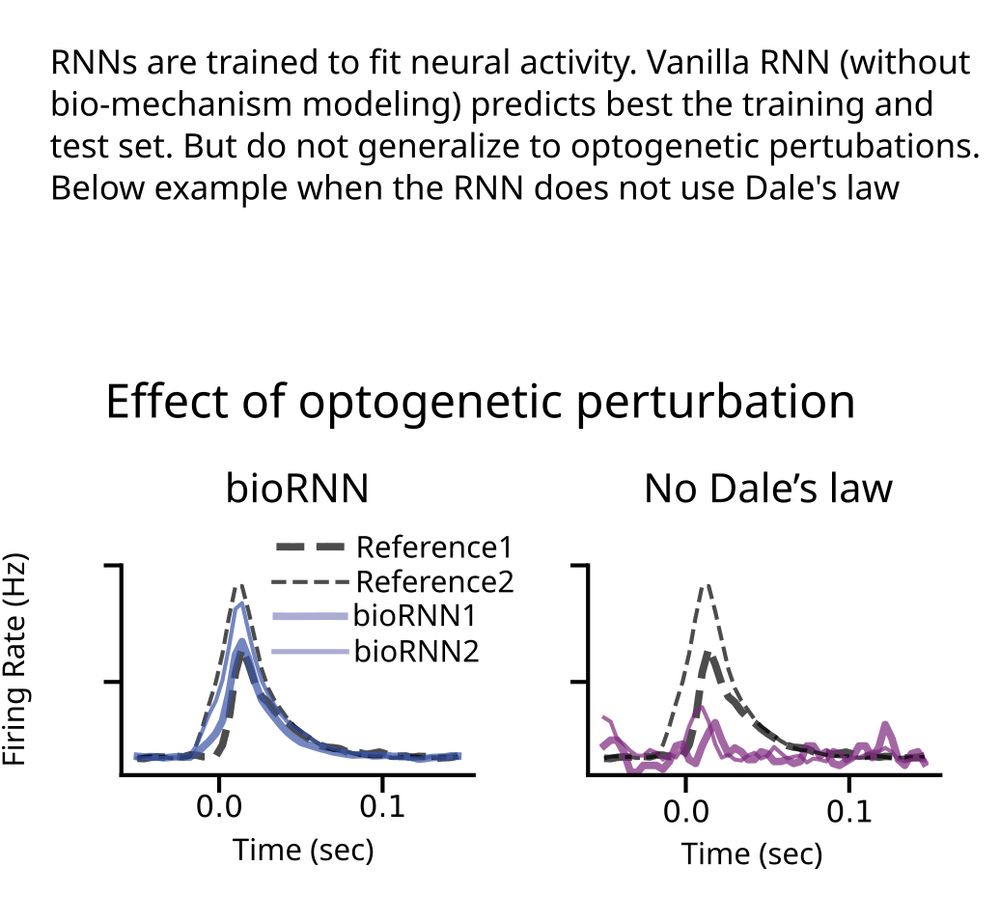

Vanilla σRNNs predict activity very well before perturbation, but their response after perturbation is very wrong.

2/8

Is mechanism modeling dead in the AI era?

ML models trained to predict neural activity fail to generalize to unseen opto perturbations. But mechanism modeling can solve that.

We say "perturbation testing" is the right way to evaluate mechanisms in data-constrained models

1/8

Empirically, the features that improve robustness to perturbations are:

- Dale's law: outgoing weights of E/I neurons are +/-

- Local inhibition: I neurons do not project to other areas

Other features improve less:

- Replacing σ with spikes

- Sparsity prior

3/8

This publication was only possible because of the hard work of Christos Sourmpis. Congrats!

Thank you also to Carl Petersen and Wulfram Gerstner for the guidance and support.

The network is constrained by data from 28 recording sessions, using recordings across the relevant sensory and motor cortices.

The model even has to produce coherent jaw movement.

Trial Matching: how to fit a large spiking neural network to thousands of recorded neurons.
