athiyadeviyani.github.io

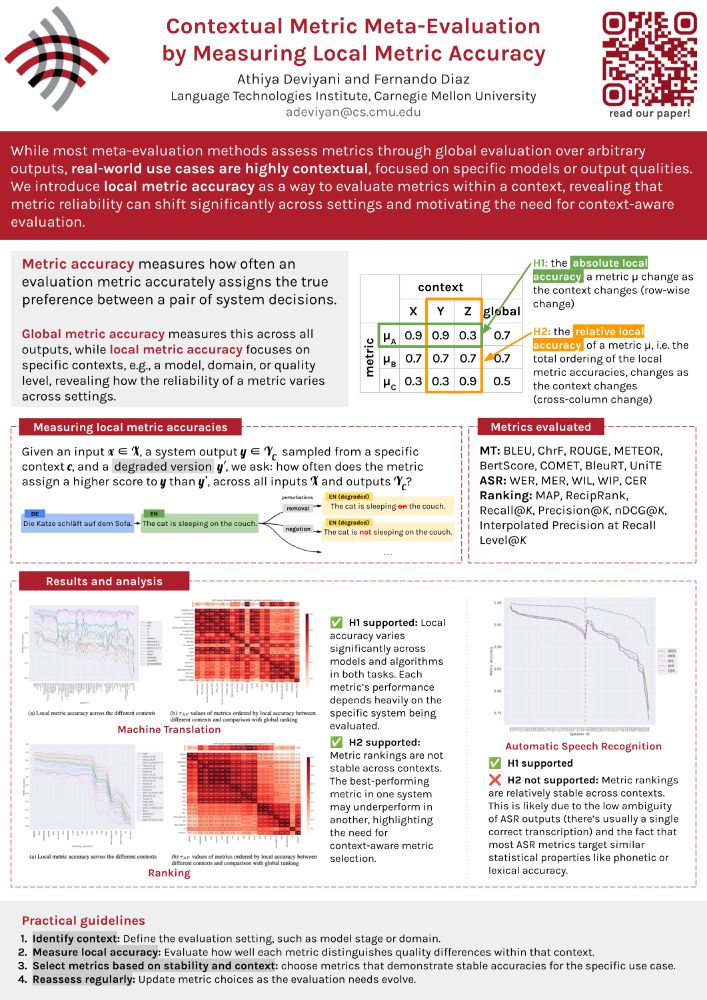

✅ H1 supported: Local accuracy still changes.

❌ H2 not supported: Metric rankings stay pretty stable.

This is probably because ASR outputs are less ambiguous, and metrics focus on similar properties, such as phonetic or lexical accuracy.

(🧵8/9)

✅ H1 supported: Local accuracy varies a lot across systems and algorithms.

✅ H2 supported: Metric rankings shift between contexts.

🚨 Picking a metric based purely on global performance is risky!

Choose wisely. 🧙🏻‍♂️

(🧵7/9)

📝 Machine Translation (MT)

🎙 Automatic Speech Recognition (ASR)

📈 Ranking

We cover popular metrics like BLEU, COMET, BERTScore, WER, METEOR, nDCG, and more!

(🧵6/9)

🧪 H1: The absolute local accuracy of a metric changes as the context changes.

🧪 H2: The relative local accuracy (how metrics rank against each other) also changes across contexts.

(🧵5/9)
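
A rough sketch of how H1 and H2 could be checked once you have local accuracies per context. The metric names, context names, and numbers below are made up for illustration and are not results from the paper; `rank_metrics` is a hypothetical helper.

```python
# Hypothetical local accuracies: context -> metric -> accuracy.
# These values are invented for illustration only.
local_acc = {
    "system_A": {"metric_1": 0.90, "metric_2": 0.80},
    "system_B": {"metric_1": 0.60, "metric_2": 0.75},
}

def rank_metrics(acc_by_metric):
    """Return metric names ordered from most to least accurate."""
    return sorted(acc_by_metric, key=acc_by_metric.get, reverse=True)

metrics = ["metric_1", "metric_2"]

# H1: how much does each metric's absolute local accuracy move across contexts?
spread = {
    m: max(local_acc[c][m] for c in local_acc) - min(local_acc[c][m] for c in local_acc)
    for m in metrics
}

# H2: does the ordering of metrics change from one context to another?
rankings = {ctx: rank_metrics(accs) for ctx, accs in local_acc.items()}
rankings_differ = len({tuple(r) for r in rankings.values()}) > 1
```

A large `spread` for a metric is what H1 is about; `rankings_differ` being true is what H2 is about.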

We create y′ using perturbations that simulate realistic degradations automatically.

(🧵4/9)

🌍 Global accuracy averages this over all outputs.

🔎 Local accuracy zooms in on a specific context (like a model, domain, or quality level).

Contexts are just meaningful slices of your data.

(🧵3/9)
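
A minimal sketch of the global vs. local distinction. It assumes that "accuracy" means how often a metric scores an original output y above its perturbed version y′; that definition, the record layout, and the names `accuracy` and `local_accuracy` are illustrative assumptions, not the paper's code.

```python
from collections import defaultdict

# Toy records (invented): each holds a context label and, per metric,
# a pair (score of original y, score of perturbed y').
records = [
    {"system": "A", "scores": {"m1": (0.9, 0.5)}},
    {"system": "A", "scores": {"m1": (0.8, 0.6)}},
    {"system": "B", "scores": {"m1": (0.4, 0.7)}},
    {"system": "B", "scores": {"m1": (0.6, 0.3)}},
]

def accuracy(recs, metric):
    """Fraction of pairs where the metric prefers y over y' (assumed definition)."""
    wins = sum(1 for r in recs if r["scores"][metric][0] > r["scores"][metric][1])
    return wins / len(recs)

def local_accuracy(recs, metric, context_key):
    """Same accuracy, computed separately for each slice of the data."""
    groups = defaultdict(list)
    for r in recs:
        groups[r[context_key]].append(r)
    return {ctx: accuracy(rs, metric) for ctx, rs in groups.items()}

global_acc = accuracy(records, "m1")                  # averaged over all outputs
per_system = local_accuracy(records, "m1", "system")  # one value per context
```

In this toy data the global number hides that the metric is much less reliable on system B than on system A.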

In our #NAACL2025 paper (w/ @841io.bsky.social), we show why global evaluations are not enough and why context matters more than you think.

📄 aclanthology.org/2025.finding...

#NLP #Evaluation

(🧵1/9)
