raxIT.ai

Cool story. We actually tested it.

#GPT5 #OpenAI #AISafety #ResponsibleAI #AIBenchmarking #ModelEvaluation #GrayZoneBench #AI

Look at what OpenAI did for their new open models:

- Million-dollar red team attacks

- Bio-security partnerships

- External safety audits

- ...and many more

#AISafety #OpenAI #RealTalk #AISecurity

#AI #AISafety #AISecurity #Security #Governance #ChainOfThought

#startups

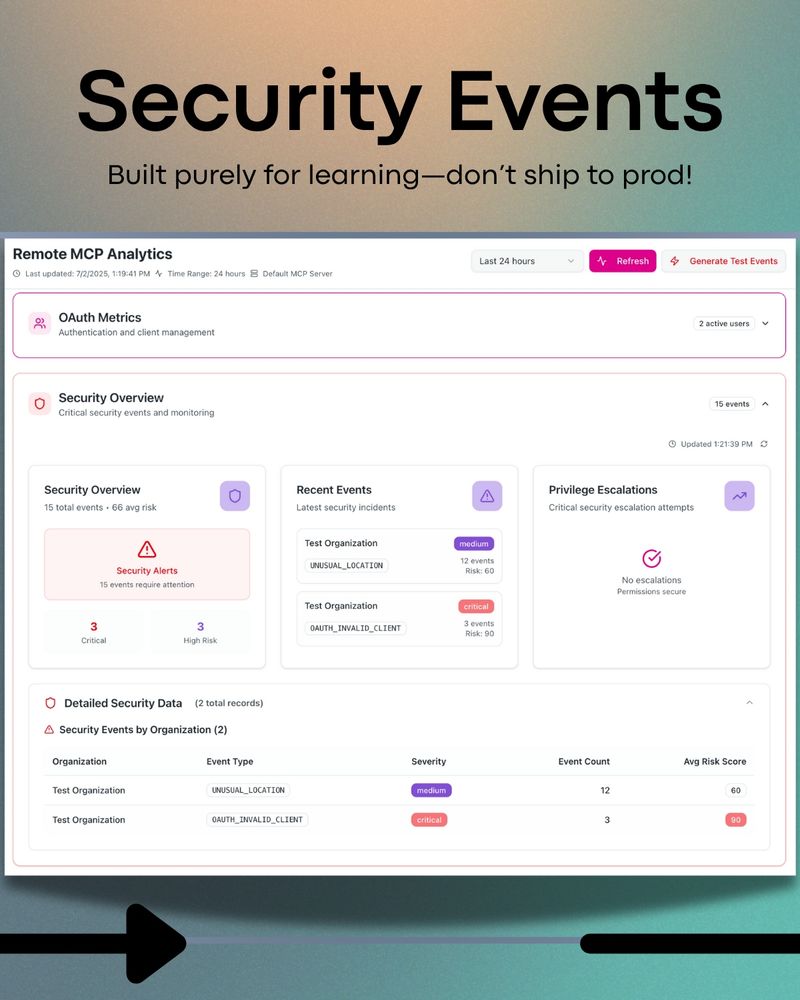

Shipped: full authorization flow, metrics, alerts, silent token refresh, and an admin dashboard. Swipe the carousel for the walkthrough.

#AISecurity #OAuth2 #MCP #ModelContextProtocol #Authorization #BuildInPublic

#AIsecurity #PromptInjection #AI #Cybersecurity

raxit.ai/blogs/127m-a...

🔥 AI is changing the game, but are you SURE your AI is safe by design?

#RewardHacking #AIRisks #EnterpriseAI

#AITransparency #AIEthics #ModelSafety #ResponsibleAI #ChainOfThought #AIRiskManagement #AISecurityByDesign

Client: "We need the safest AI for our healthcare app."

Us: "Perfect, a system with Constitutional Classifiers would be ideal."

Client: "Great, let's use that."