I'm Giuseppe, currently working at AMD on open-source ML quantization.

If that's something you're interested in, check out: github.com/Xilinx/brevi...

We support all the fanciest SOTA quantization algorithms, with a focus on modularity and composability.

We recently published a small paper analyzing how post-training model expansion can add more flexibility during quantization time.

It is possible to improve the accuracy of your quantized model by slightly increasing your model size, without retraining.

It helps to put things in perspective and step away from the sometimes unjustified LLM hype.

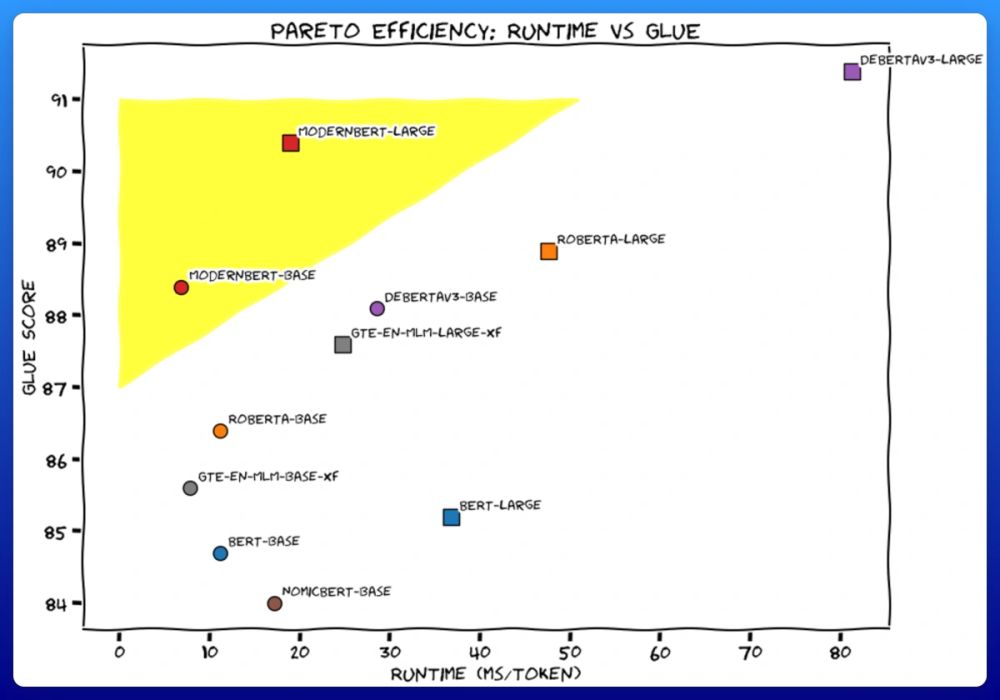

We trained 2 new models. Like BERT, but modern. ModernBERT.

Not some hypey GenAI thing, but a proper workhorse model, for retrieval, classification, etc. Real practical stuff.

It's much faster, more accurate, longer context, and more useful. 🧵

If you're interested in accumulation-aware quantization, or you want to see some interesting results on 4-bit LLM quantization with integer/minifloat/MX, check it out!

But so hard.

Well-cited "SOTA" methods often crash. They also tend to be very computationally expensive. Both make a systematic study impossible.

Finally, reviewers always ask for more methods, and more "SOTA".

```

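from atproto import FirehoseSubscribeReposClient, parse_subscribe_repos_message

# Print each firehose message's header together with its parsed payload.
def f(m): print(m.header, parse_subscribe_repos_message(m))

FirehoseSubscribeReposClient().start(f)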

```

Lots of new things, including:

- QAT/PTQ for minifloat

- QAT/PTQ for MX Int and MX Float

- Compatibility with Hugging Face's accelerate

- New PTQ techniques (HQQ, Channel splitting)
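
For anyone unfamiliar with channel splitting, here is a minimal, library-free sketch of the general idea on a toy linear layer. It is not the library's implementation: every name below (`matvec`, `split_channel`, `max_abs`) is hypothetical, and the weights are made up. The input channel holding the largest weight is halved and duplicated, and the matching input is duplicated too, so the output stays the same while the weight range shrinks.

```python
# Illustrative sketch of channel splitting on a linear layer y = W @ x.
# All names are hypothetical; this does not use or mirror any library API.

def matvec(W, x):
    """Plain matrix-vector product on nested lists."""
    return [sum(w * v for w, v in zip(row, x)) for row in W]

def split_channel(W, x, j):
    """Halve input channel (column) j of W and append a copy of the halved
    column; duplicate x[j] at the end of x so the product is unchanged."""
    W_split = [row[:j] + [row[j] / 2] + row[j + 1:] + [row[j] / 2] for row in W]
    x_split = x + [x[j]]
    return W_split, x_split

def max_abs(W):
    """Largest weight magnitude, which sets the range a uniform quantizer must cover."""
    return max(abs(w) for row in W for w in row)

# Made-up weights with one outlier column.
W = [[0.5, -8.0, 0.25],
     [1.0, 6.0, -0.75]]
x = [1.0, 2.0, 3.0]

# Pick the input channel that contains the largest-magnitude weight.
j = max(range(len(W[0])), key=lambda c: max(abs(row[c]) for row in W))
W_split, x_split = split_channel(W, x, j)

y = matvec(W, x)
y_split = matvec(W_split, x_split)
```

In this toy case the output is identical before and after the split, while the largest weight magnitude drops from 8 to 4, at the cost of one extra column.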

www.forbes.com/sites/moinro...
